HOSL: Hybrid-Order Split Learning for Memory-Constrained Edge Training

Authors: Aakriti Lnu, Zhe Li, Dandan Liang, Chao Huang, Rui Li, Haibo Yang

Categories: cs.LG

Published: 2026-04-07

💡 One-Sentence Takeaway

Proposes HOSL to address the memory constraints of training on edge devices.

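🎯 Matched Area: Pillar 9: Embodied Foundation Models

Keywords: split learning, edge computing, zeroth-order optimization, first-order optimization, memory efficiency, model training, large language models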

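📋 Key Points

- Existing split learning methods rely mainly on first-order optimization, which forces the client to store activations for backpropagation and imposes substantial memory overhead.

- HOSL uses zeroth-order optimization on the client and first-order optimization on the server, balancing memory efficiency against optimization quality.

- Experiments show that HOSL reduces client GPU memory across multiple tasks and is more accurate than the zeroth-order baseline.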

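📝 Abstract (Translated from Chinese)

Split learning (SL) enables collaborative training of large language models by dividing model computation between resource-constrained edge devices and compute-rich servers. However, existing SL systems rely mainly on first-order (FO) optimization, which requires clients to store intermediate quantities such as activations and causes significant memory overhead. This paper proposes HOSL, a novel hybrid-order split learning framework that combines zeroth-order (ZO) optimization on the client with FO optimization on the server to address the trade-off between memory efficiency and optimization effectiveness. By using memory-efficient ZO gradient estimation on the client, HOSL eliminates backpropagation and activation storage, reducing client memory consumption. Meanwhile, server-side FO optimization ensures fast convergence and competitive performance. Experiments show that HOSL substantially reduces client GPU memory across multiple tasks while staying close to the FO method in accuracy.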

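🔬 Method Details

Problem definition: The paper targets the excessive client-side memory overhead of existing split learning methods, in particular the need to store large numbers of intermediate activations for backpropagation.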

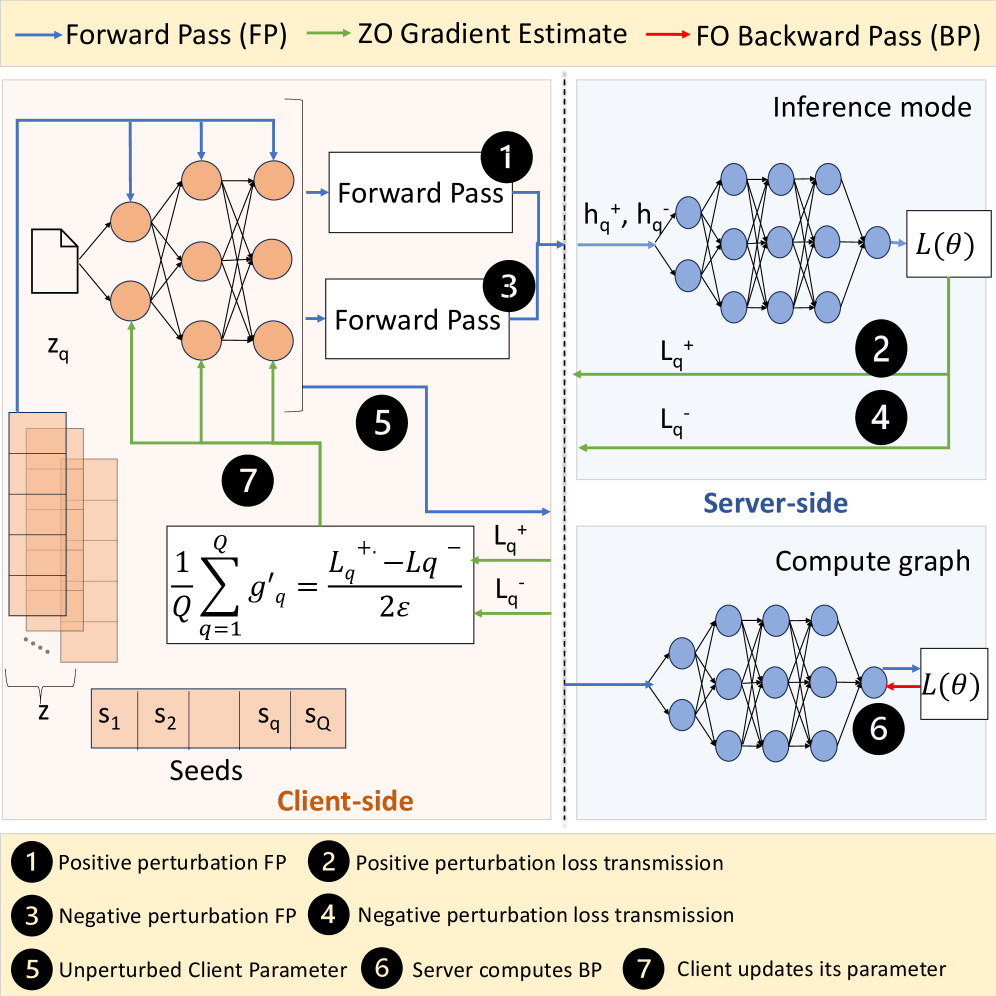

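Core idea: HOSL uses zeroth-order optimization on the client, which removes backpropagation and activation storage and so lowers memory consumption, while the server keeps using first-order optimization to ensure fast convergence.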
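To make the zeroth-order idea concrete, the sketch below shows a generic two-point randomized gradient estimator, which needs only forward evaluations of the loss. It is an illustration under stated assumptions, not the estimator used in the paper: the helper name `zo_gradient`, the smoothing radius `mu`, the query count `num_queries`, and the quadratic test loss are all hypothetical choices made here.

```python
import numpy as np

def zo_gradient(loss_fn, params, mu=1e-3, num_queries=1, seed=0):
    """Two-point zeroth-order gradient estimate (hypothetical helper).

    Uses only forward evaluations of loss_fn: no backpropagation and
    no stored activations are required.
    """
    rng = np.random.default_rng(seed)
    grad = np.zeros_like(params)
    for _ in range(num_queries):
        z = rng.standard_normal(params.shape)      # random probe direction
        loss_plus = loss_fn(params + mu * z)       # forward pass 1
        loss_minus = loss_fn(params - mu * z)      # forward pass 2
        # Finite-difference slope along z, projected back onto z.
        grad += (loss_plus - loss_minus) / (2.0 * mu) * z
    return grad / num_queries

def quadratic(w):
    """Toy loss whose true gradient is simply w."""
    return 0.5 * float(np.sum(w ** 2))

w = np.array([1.0, -2.0, 3.0])
g_est = zo_gradient(quadratic, w, num_queries=2000)
print("true gradient:     ", w)
print("ZO estimate (avg): ", g_est)
```

Averaging over more queries reduces the variance of the estimate at the cost of more forward passes, which is the usual trade-off for this family of estimators.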

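Technical framework: The HOSL architecture has two main modules, the client and the server. The client runs zeroth-order optimization to estimate gradients for its part of the model, while the server runs first-order optimization to update its own parameters.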
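The client/server division can be sketched with a toy two-layer model. This is a minimal illustration under assumptions, not the paper's protocol: the model, the teacher-generated data, the learning rates `lr_c` and `lr_s`, the smoothing radius `mu`, and the message pattern (the client sends perturbed activations, the server returns scalar losses) are all hypothetical choices made for this example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy regression data from a hypothetical "teacher" network.
X = rng.standard_normal((64, 8))
y = np.tanh(X @ rng.standard_normal((8, 4))) @ rng.standard_normal(4)

W_c = 0.5 * rng.standard_normal((8, 4))  # client-side weights (ZO-updated)
w_s = np.zeros(4)                        # server-side weights (FO-updated)

def client_forward(W, X):
    """Client half of the model: forward pass only, nothing is cached."""
    return np.tanh(X @ W)

def server_loss(w, H, y):
    """Server half: mean squared error on the received activations."""
    return float(np.mean((H @ w - y) ** 2))

def server_grad(w, H, y):
    """Exact (first-order) gradient of the server loss w.r.t. w."""
    return 2.0 * H.T @ (H @ w - y) / len(y)

mu, lr_c, lr_s = 1e-3, 1e-3, 0.05        # assumed hyperparameters
initial_loss = server_loss(w_s, client_forward(W_c, X), y)

for step in range(500):
    # Client: two perturbed forward passes; the server returns two scalars.
    z = rng.standard_normal(W_c.shape)
    loss_plus = server_loss(w_s, client_forward(W_c + mu * z, X), y)
    loss_minus = server_loss(w_s, client_forward(W_c - mu * z, X), y)
    W_c -= lr_c * (loss_plus - loss_minus) / (2.0 * mu) * z  # ZO update

    # Server: ordinary gradient step on its own parameters.
    H = client_forward(W_c, X)
    w_s -= lr_s * server_grad(w_s, H, y)                     # FO update

final_loss = server_loss(w_s, client_forward(W_c, X), y)
print(f"loss: {initial_loss:.4f} -> {final_loss:.4f}")
```

In this sketch the client never computes or stores a gradient of its own layer; it only needs the two scalar losses for each probe direction, while the server can afford an exact gradient.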

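Key innovation: HOSL combines zeroth-order and first-order optimization within one split learning pipeline. The client gains the memory savings of ZO, while the compute-rich server retains the fast convergence of FO.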

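Key design: HOSL uses a memory-efficient ZO gradient estimator to reduce client memory usage while keeping a server-side optimizer that converges quickly. The abstract does not detail the specific hyperparameter settings or the loss function design.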

🖼️ Key Figures

📊 Experiment Highlights

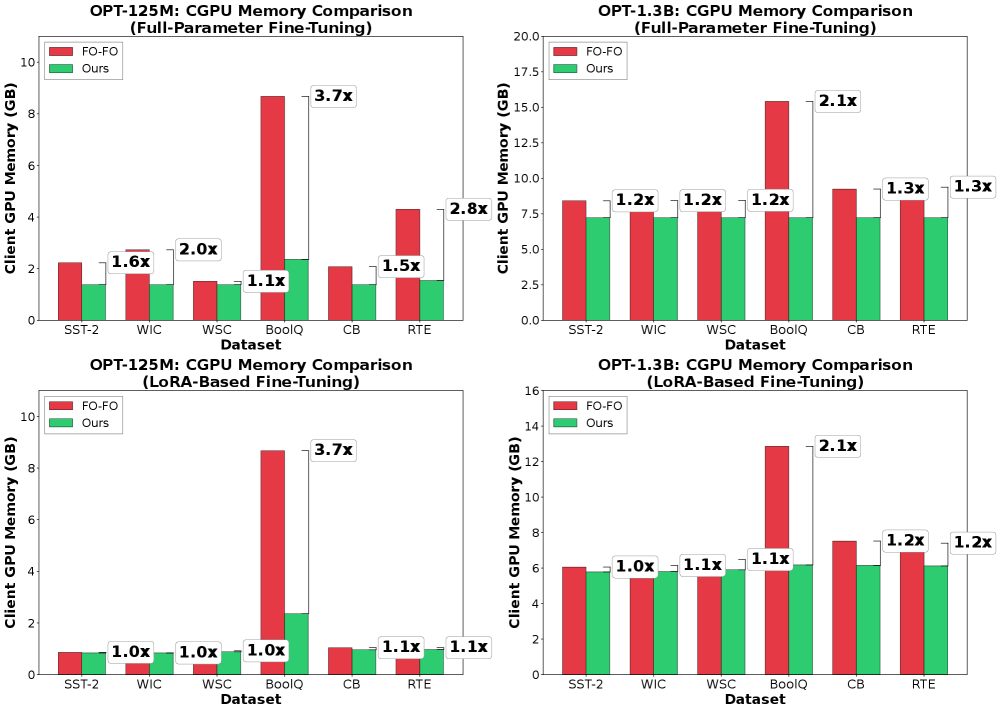

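On OPT models (125M and 1.3B parameters) across 6 tasks, HOSL reduces client GPU memory by up to 3.7× compared with the first-order method while staying within 0.20%-4.23% of that baseline's accuracy. HOSL also outperforms the zeroth-order baseline by up to 15.55%, which supports the effectiveness of the hybrid strategy.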

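🎯 Application Scenarios

HOSL suits scenarios that require training large models on edge devices such as smartphones and IoT devices. By lowering client memory requirements, it makes model training feasible in resource-constrained environments. Going forward, HOSL may support further edge computing applications, particularly in real-time data processing and intelligent decision-making.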

📄 Abstract (Original)

Split learning (SL) enables collaborative training of large language models (LLMs) between resource-constrained edge devices and compute-rich servers by partitioning model computation across the network boundary. However, existing SL systems predominantly rely on first-order (FO) optimization, which requires clients to store intermediate quantities such as activations for backpropagation. This results in substantial memory overhead, largely negating benefits of model partitioning. In contrast, zeroth-order (ZO) optimization eliminates backpropagation and significantly reduces memory usage, but often suffers from slow convergence and degraded performance. In this work, we propose HOSL, a novel Hybrid-Order Split Learning framework that addresses this fundamental trade-off between memory efficiency and optimization effectiveness by strategically integrating ZO optimization on the client side with FO optimization on the server side. By employing memory-efficient ZO gradient estimation at the client, HOSL eliminates backpropagation and activation storage, reducing client memory consumption. Meanwhile, server-side FO optimization ensures fast convergence and competitive performance. Theoretically, we show that HOSL achieves an $\mathcal{O}(\sqrt{d_c/TQ})$ rate, which depends on client-side model dimension $d_c$ rather than the full model dimension $d$, demonstrating that convergence improves as more computation is offloaded to the server. Extensive experiments on OPT models (125M and 1.3B parameters) across 6 tasks demonstrate that HOSL reduces client GPU memory by up to 3.7$\times$ compared to the FO method while achieving accuracy within 0.20%-4.23% of this baseline. Furthermore, HOSL outperforms the ZO baseline by up to 15.55%, validating the effectiveness of our hybrid strategy for memory-efficient training on edge devices.
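As a rough reading of the stated rate, the worked note below compares it with a hypothetical all-ZO baseline. Several points here are assumptions rather than statements from the abstract: that $d_c/TQ$ means $d_c/(TQ)$, that $T$ is the number of iterations and $Q$ the number of perturbations per step, and that a ZO method applied to the full model would have the analogous rate with $d$ in place of $d_c$.

$$
\frac{\sqrt{d_c/(TQ)}}{\sqrt{d/(TQ)}} = \sqrt{\frac{d_c}{d}},
\qquad \text{e.g. } d_c = 0.1\,d \;\Rightarrow\; \sqrt{0.1} \approx 0.316 .
$$

Under these assumptions, keeping only a tenth of the parameters on the client would shrink the bound to roughly a third of the all-ZO bound for the same $T$ and $Q$, consistent with the abstract's claim that convergence improves as more computation is offloaded to the server.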