HBVLA: Pushing 1-Bit Post-Training Quantization for Vision-Language-Action Models

Authors: Xin Yan, Zhenglin Wan, Feiyang Ye, Xingrui Yu, Hangyu Du, Yang You, Ivor Tsang

Category: cs.LG

Published: 2026-02-14

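💡 One-Sentence Takeaway

Proposes HBVLA, a 1-bit post-training quantization framework tailored to Vision-Language-Action (VLA) models.

🎯 Matched Area: Pillar 9: Embodied Foundation Models

Keywords: Vision-Language-Action, 1-bit quantization, policy-aware, sparse orthogonal transform, robot control, edge computing, model compression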

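📋 Key Points

- Existing 1-bit quantization methods fail to narrow the distribution gap between quantized and full-precision weights, so quantization errors accumulate over long-horizon execution.

- The paper proposes the HBVLA framework, which uses a policy-aware enhanced Hessian to identify critical weights, applies a sparse orthogonal transform to the non-salient weights, and then performs 1-bit quantization.

- Quantized OpenVLA-OFT retains 92.2% of full-precision performance on LIBERO and quantized CogAct retains 93.6% on SimplerEnv, significantly outperforming existing binarization methods, with only marginal success-rate degradation in real-world evaluation.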

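📝 Abstract (Summary)

Vision-Language-Action (VLA) models enable instruction-following embodied control, but their large compute and memory requirements limit deployment on resource-constrained robots and edge platforms. Existing 1-bit quantization methods fail to narrow the distribution gap between quantized and full-precision weights, so quantization errors accumulate during long-horizon closed-loop execution and severely degrade action quality. To address this, the paper proposes HBVLA, a quantization framework tailored to VLAs: it uses a policy-aware enhanced Hessian to identify critical weights, applies a sparse orthogonal transform to the non-salient weights, and finally performs 1-bit quantization in the Haar domain. Experiments on multiple VLAs show that HBVLA significantly outperforms existing binarization methods.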

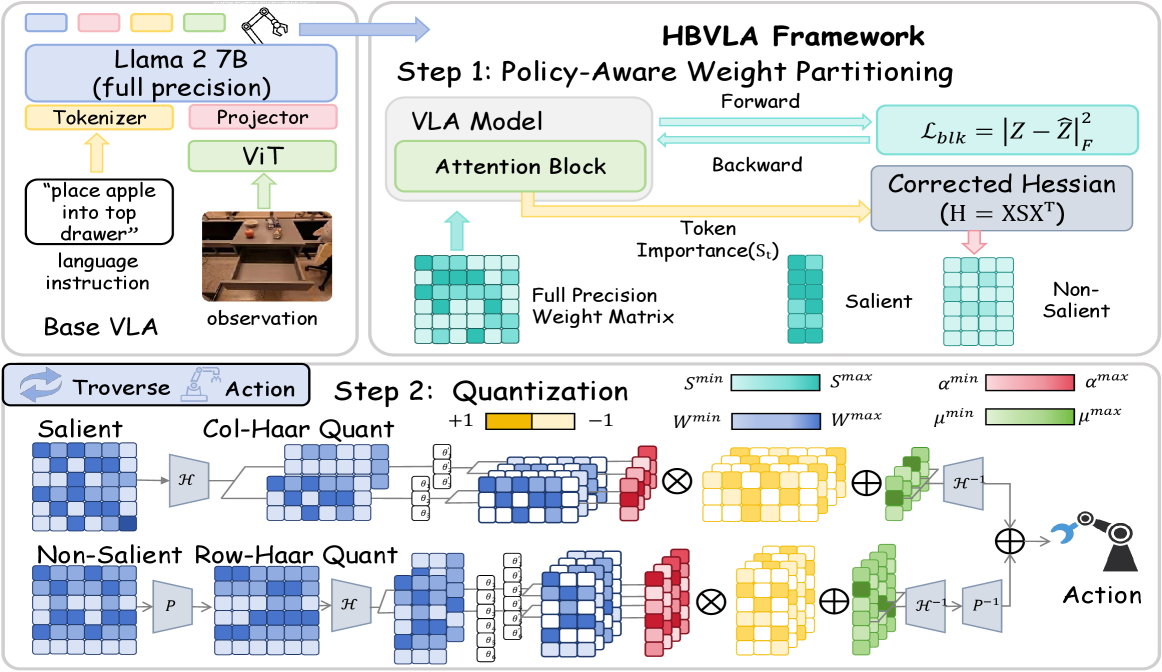

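🔬 Method Details

Problem definition: The paper targets the performance drop that VLA models suffer under quantization. When weights are quantized to 1 bit, existing methods fail to narrow the distribution gap between quantized and full-precision weights, so quantization errors accumulate during long-horizon closed-loop execution and degrade the model's actions.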
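To make the source of this error concrete, here is a minimal Python sketch of plain sign-based binarization with a single scale per matrix. This is a common baseline, not HBVLA itself; the function names and the Gaussian test matrix are assumptions chosen for illustration.

```python
import numpy as np

def binarize(w: np.ndarray) -> np.ndarray:
    """Baseline 1-bit quantization: alpha * sign(w) with alpha = mean(|w|)."""
    alpha = np.mean(np.abs(w))            # scale that minimizes the L2 error
    signs = np.where(w >= 0, 1.0, -1.0)   # one bit per weight
    return alpha * signs

def relative_error(w: np.ndarray, w_hat: np.ndarray) -> float:
    """Frobenius-norm error of w_hat relative to the norm of w."""
    return float(np.linalg.norm(w - w_hat) / np.linalg.norm(w))

# Hypothetical stand-in for a full-precision weight matrix.
rng = np.random.default_rng(0)
w = rng.normal(size=(256, 256))
w_hat = binarize(w)
err = relative_error(w, w_hat)
print(f"relative error: {err:.3f}")
```

For i.i.d. Gaussian weights the relative error of this baseline is about 0.60 (the square root of 1 − 2/π), which gives a sense of how much per-layer error a naive 1-bit scheme leaves for closed-loop rollouts to compound.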

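Core idea: HBVLA uses a policy-aware enhanced Hessian to identify the weights that are critical for action generation, and applies a sparse orthogonal transform to the non-salient weights to induce a low-entropy intermediate state that is easier to quantize.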
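The reason an orthogonal transform can be applied to weights at all is that it can be undone exactly on the input side. The sketch below illustrates this with a block-diagonal (hence sparse) orthogonal matrix. How HBVLA actually constructs its transform is not described in this summary, so the random blocks, the helper name `block_diag_orthogonal`, and the sizes are stand-in assumptions.

```python
import numpy as np

def block_diag_orthogonal(dim: int, block: int, rng) -> np.ndarray:
    """Sparse orthogonal matrix built from random orthogonal diagonal blocks."""
    assert dim % block == 0
    q = np.zeros((dim, dim))
    for start in range(0, dim, block):
        # The Q factor of a QR decomposition is orthogonal.
        qb, _ = np.linalg.qr(rng.normal(size=(block, block)))
        q[start:start + block, start:start + block] = qb
    return q

rng = np.random.default_rng(0)
dim, block = 64, 8
W = rng.normal(size=(32, dim))   # hypothetical layer weights
x = rng.normal(size=dim)         # hypothetical layer input
Q = block_diag_orthogonal(dim, block, rng)

W_rot = W @ Q        # transformed weights (the tensor one would quantize)
x_rot = Q.T @ x      # matching transform on the input
y_ref = W @ x
y_rot = W_rot @ x_rot   # equals y_ref up to rounding, since Q @ Q.T = I
```

Because the full-precision output is unchanged, any accuracy difference after quantization comes only from how well the transformed weights tolerate 1-bit rounding.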

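Technical framework: HBVLA consists of three main stages. First, a policy-aware enhanced Hessian identifies the critical weights. Second, a sparse orthogonal transform is applied to the non-salient weights. Finally, group-wise 1-bit quantization is performed in the Haar domain.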
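The final stage can be sketched roughly as follows: transform the weights with a Haar wavelet, binarize the coefficients group by group, then transform back. This is a minimal illustration under assumptions, not the paper's implementation: the one-level transform, the per-group mean and scale, and the group size of 16 are all choices made here for the example.

```python
import numpy as np

def haar_forward(w: np.ndarray) -> np.ndarray:
    """One-level orthonormal Haar transform along the last axis (even length)."""
    a, b = w[..., 0::2], w[..., 1::2]
    low = (a + b) / np.sqrt(2.0)    # pairwise averages (low-pass band)
    high = (a - b) / np.sqrt(2.0)   # pairwise differences (high-pass band)
    return np.concatenate([low, high], axis=-1)

def haar_inverse(c: np.ndarray) -> np.ndarray:
    """Inverse of haar_forward."""
    half = c.shape[-1] // 2
    low, high = c[..., :half], c[..., half:]
    w = np.empty_like(c)
    w[..., 0::2] = (low + high) / np.sqrt(2.0)
    w[..., 1::2] = (low - high) / np.sqrt(2.0)
    return w

def groupwise_binarize(c: np.ndarray, group_size: int) -> np.ndarray:
    """Binarize each contiguous group of columns with its own mean and scale."""
    rows, cols = c.shape
    g = c.reshape(rows, cols // group_size, group_size)
    mu = g.mean(axis=-1, keepdims=True)
    centered = g - mu
    alpha = np.abs(centered).mean(axis=-1, keepdims=True)
    signs = np.where(centered >= 0, 1.0, -1.0)
    return (mu + alpha * signs).reshape(rows, cols)

rng = np.random.default_rng(0)
W = rng.normal(size=(16, 64))                    # hypothetical weight matrix
coeffs = haar_forward(W)
q_coeffs = groupwise_binarize(coeffs, group_size=16)
W_hat = haar_inverse(q_coeffs)                   # dequantized weights
```

With 64 columns and groups of 16, no group straddles the low-pass and high-pass bands, so each band gets its own scales.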

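Key innovation: HBVLA combines a policy-aware enhanced Hessian with a sparse orthogonal transform, which lets it identify and treat critical and non-critical weights separately, reducing quantization error and improving performance in resource-constrained settings.

Key design: A policy-aware enhanced Hessian scores weight importance, a sparse orthogonal transform lowers the entropy of the non-salient weights, and 1-bit quantization is carried out in the Haar domain so that the quantized model stays close to full-precision performance.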
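As a rough illustration of Hessian-based importance scoring, the sketch below ranks the input channels of a linear layer using the diagonal of the standard layer-wise proxy Hessian, H = XᵀX / n, computed from calibration activations. The policy-aware enhancement is not specified in this summary and is not modeled here; the scoring rule, function names, and synthetic data are assumptions.

```python
import numpy as np

def hessian_diag(X: np.ndarray) -> np.ndarray:
    """Diagonal of the proxy Hessian H = X^T X / n for a linear layer."""
    return (X ** 2).mean(axis=0)

def salient_columns(W: np.ndarray, X: np.ndarray, k: int) -> np.ndarray:
    """Return the k input channels with the largest sum_i W_ij^2 * H_jj."""
    h = hessian_diag(X)
    scores = (W ** 2 * h).sum(axis=0)
    return np.argsort(scores)[::-1][:k]

rng = np.random.default_rng(0)
X = rng.normal(size=(512, 32))   # hypothetical calibration activations
X[:, 5] *= 10.0                  # channel 5 carries much larger activations
W = rng.normal(size=(16, 32))    # hypothetical layer weights
top = salient_columns(W, X, k=4)
print(top)
```

Channels with large activations dominate the score because perturbing their weights changes the layer output the most, which is the usual argument for handling such weights with extra care during binarization.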

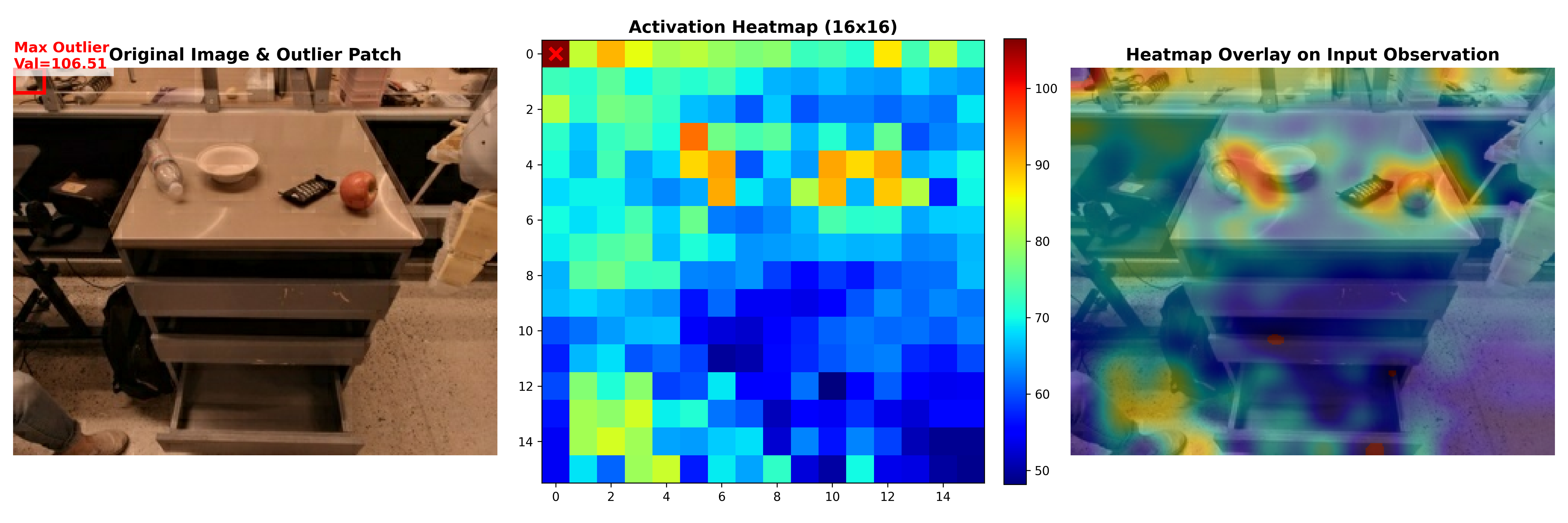

🖼️ Key Figures

📊 Experimental Highlights

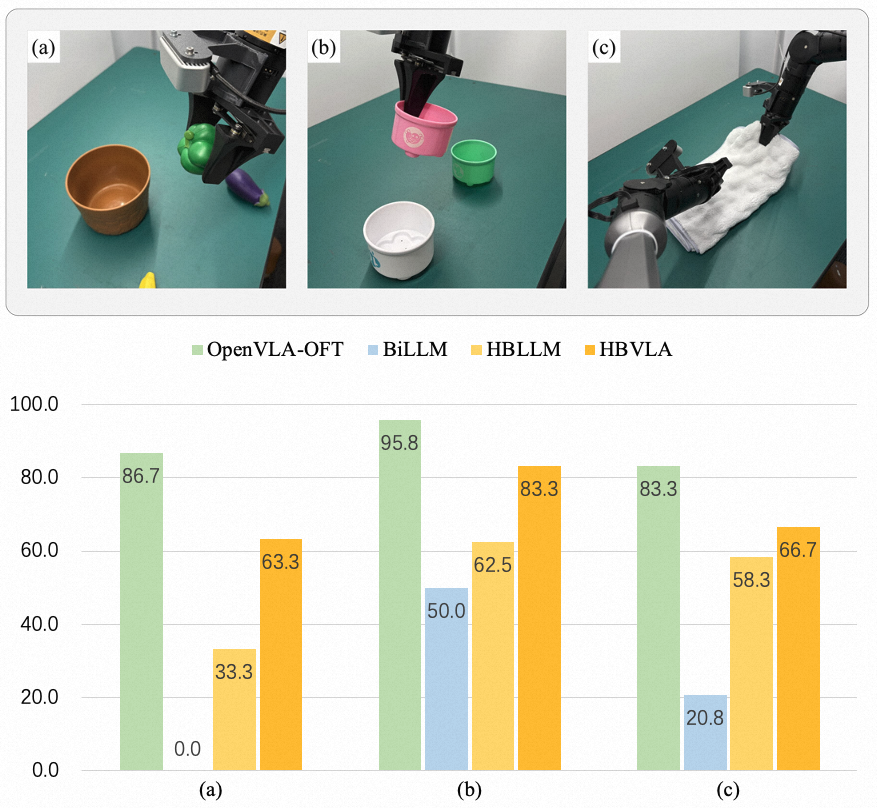

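On LIBERO, quantized OpenVLA-OFT retains 92.2% of full-precision performance, and on SimplerEnv, quantized CogAct retains 93.6%. Both results significantly outperform existing binarization methods. Real-world evaluation shows only marginal success-rate degradation, indicating robust deployability under tight hardware constraints.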

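🎯 Application Scenarios

HBVLA is relevant to resource-constrained robots and edge computing platforms. By enabling ultra-low-bit quantization, the framework allows VLA models to be deployed more reliably under hardware limits, supporting the use of intelligent robots in real environments, particularly where fast response and efficient processing are required.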

📄 Abstract (Original)

Vision-Language-Action (VLA) models enable instruction-following embodied control, but their large compute and memory footprints hinder deployment on resource-constrained robots and edge platforms. While reducing weights to 1-bit precision through binarization can greatly improve efficiency, existing methods fail to narrow the distribution gap between binarized and full-precision weights, causing quantization errors to accumulate under long-horizon closed-loop execution and severely degrade actions. To fill this gap, we propose HBVLA, a VLA-tailored binarization framework. First, we use a policy-aware enhanced Hessian to identify weights that are truly critical for action generation. Then, we employ a sparse orthogonal transform for non-salient weights to induce a low-entropy intermediate state. Finally, we quantize both salient and non-salient weights in the Haar domain with group-wise 1-bit quantization. We have evaluated our approach on different VLAs: on LIBERO, quantized OpenVLA-OFT retains 92.2% of full-precision performance; on SimplerEnv, quantized CogAct retains 93.6%, significantly outperforming state-of-the-art binarization methods. We further validate our method on a real-world evaluation suite, and the results show that HBVLA incurs only marginal success-rate degradation compared to the full-precision model, demonstrating robust deployability under tight hardware constraints. Our work provides a practical foundation for ultra-low-bit quantization of VLAs, enabling more reliable deployment on hardware-limited robotic platforms.