Cog-Rethinker: Hierarchical Metacognitive Reinforcement Learning for LLM Reasoning

Authors: Zexu Sun, Yongcheng Zeng, Erxue Min, Heyang Gao, Bokai Ji, Xu Chen

Categories: cs.LG, cs.AI

Published: 2025-10-13

Comments: 22 pages, 8 figures, 4 tables

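💡 One-Sentence Summary

Proposes Cog-Rethinker, a hierarchical metacognitive RL framework that improves sample utilization when training LLMs for reasoning.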

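🎯 Matched areas: Pillar 2: RL Algorithms and Architecture (RL & Architecture); Pillar 9: Embodied Foundation Models

Keywords: large language models, reinforcement learning, reasoning ability, metacognition, sample utilization efficiency, mathematical reasoning, model optimization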

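📋 Key Points

- Existing methods activate the reasoning ability of LLMs through fixed prompt templates. For weak models, most problems produce invalid outputs that are discarded by accuracy-based filtering, so sample utilization is low, especially on zero-accuracy problems.

- Cog-Rethinker targets the rollout procedure in RL training with a hierarchical metacognitive framework: after the direct rollout, it first prompts the policy to decompose zero-accuracy problems into subproblems, then prompts it to refine answers by referencing previous wrong solutions.

- Experiments report superior performance on several mathematical reasoning benchmarks, with improved sample efficiency that accelerates convergence relative to baseline methods.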

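📝 Abstract (translated summary)

Large language models (LLMs) have recently shown notable reasoning ability through reinforcement learning (RL) with verifiable rewards. However, existing methods rely on fixed prompt templates to activate the model's inherent capabilities, which leads to low sample utilization. This paper proposes Cog-Rethinker, a novel hierarchical metacognitive RL framework for LLM reasoning. After the direct rollout, Cog-Rethinker applies two stages: it first decomposes zero-accuracy problems into subproblems, then refines the answers to problems that remain unsolved by referencing previous wrong solutions. Experimental results show that Cog-Rethinker performs better than baselines on several mathematical reasoning benchmarks, improves sample efficiency, and accelerates convergence.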

🔬 Method Details

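Problem definition: The paper addresses low sample utilization in existing RL-based LLM reasoning methods. With a fixed prompt template, a weak policy produces invalid outputs for most problems, and accuracy-based filtering discards them. The waste is most severe on zero-accuracy problems, where none of the sampled rollouts is correct.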
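To make the waste concrete, here is a minimal sketch of accuracy-based filtering over a batch of rollouts. The function names (`split_by_accuracy`, `wasted_fraction`) and the 0/1 reward encoding are illustrative assumptions, not taken from the paper, and the paper's actual filtering rule may differ.

```python
from typing import Dict, List, Tuple


def split_by_accuracy(
    rewards: Dict[str, List[int]],
) -> Tuple[Dict[str, float], List[str]]:
    """Partition problems by rollout accuracy.

    `rewards` maps a problem id to the 0/1 verifiable rewards of its
    rollouts (hypothetical encoding; each list is assumed non-empty).
    """
    kept: Dict[str, float] = {}
    zero_acc: List[str] = []
    for pid, rs in rewards.items():
        acc = sum(rs) / len(rs)
        if acc == 0.0:
            zero_acc.append(pid)  # no correct rollout: filtered out
        else:
            kept[pid] = acc
    return kept, zero_acc


def wasted_fraction(rewards: Dict[str, List[int]]) -> float:
    """Fraction of all sampled rollouts that belong to zero-accuracy problems."""
    total = sum(len(rs) for rs in rewards.values())
    wasted = sum(len(rs) for rs in rewards.values() if sum(rs) == 0)
    return wasted / total


# Toy batch: two of three problems have no correct rollout.
batch = {"p1": [0, 0, 0, 0], "p2": [1, 0, 0, 1], "p3": [0, 0, 0, 0]}
kept, zero_acc = split_by_accuracy(batch)
print(kept, zero_acc, wasted_fraction(batch))
```

In this toy batch two thirds of the sampled rollouts come from zero-accuracy problems, which is the kind of sample that Cog-Rethinker tries to recover rather than discard.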

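Core idea: Cog-Rethinker improves the rollout procedure of RL training through a hierarchical metacognitive framework. Drawing on how humans solve problems, it breaks a hard problem into smaller subproblems and, when that still fails, revisits earlier wrong solutions to refine the answer.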

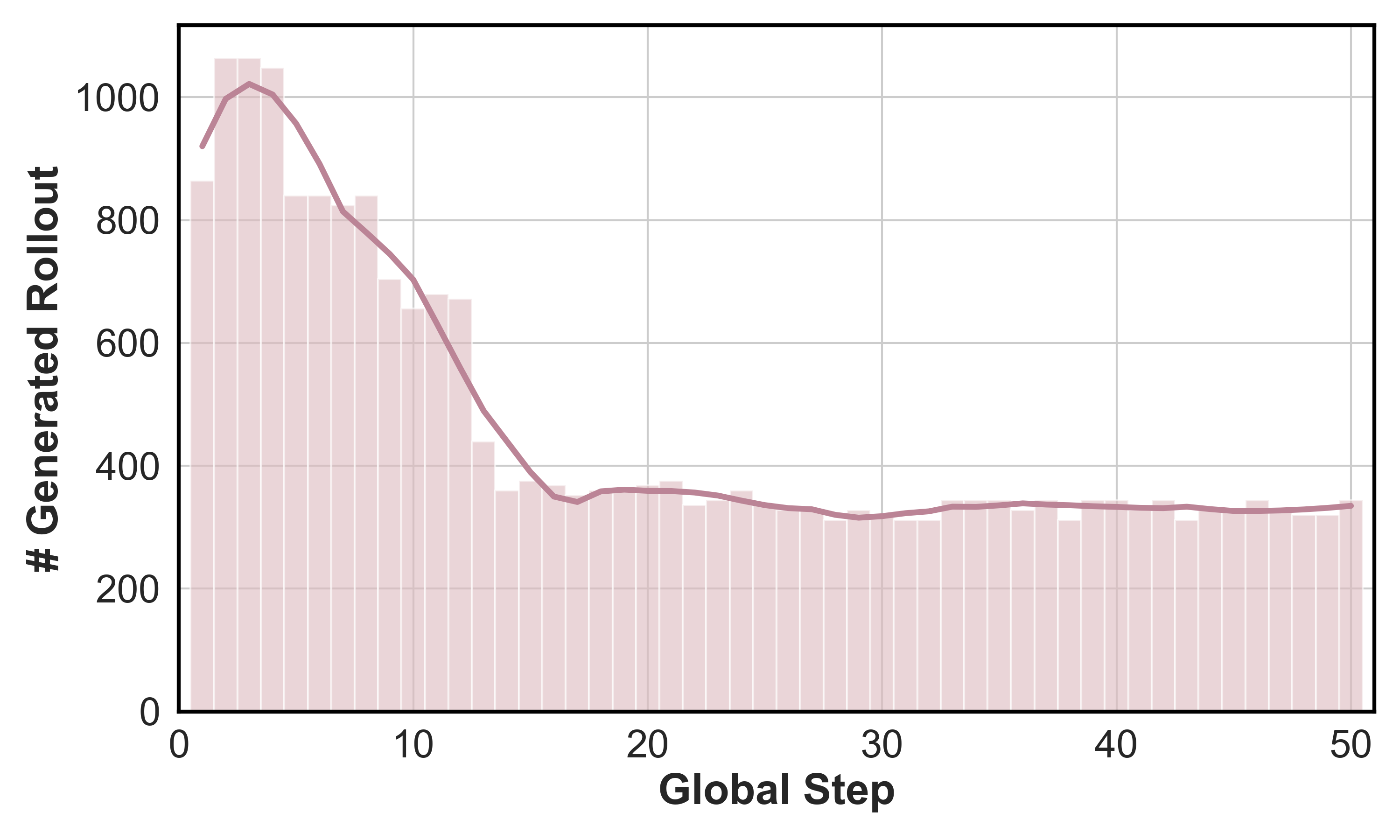

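Technical framework: Rollout starts with the usual direct rollout using the standard prompt template. Problems that end up with zero accuracy then pass through two metacognitive stages. In the first stage, the policy is prompted to decompose the problem into subproblems and produce a final answer. In the second stage, problems that still have zero accuracy are retried with a prompt that references previous wrong solutions, and the policy is asked to refine the answer. This hierarchy lets training recover samples that would otherwise be filtered out.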
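The staged rollout described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: the three prompt templates, the `policy` and `verify` callables, and the choice of which wrong solution to reference are all hypothetical.

```python
from typing import Callable, List, Tuple

# Hypothetical prompt templates; the paper's actual wording is not given here.
DIRECT = "Solve the problem step by step.\nProblem: {q}"
DECOMPOSE = (
    "Break the problem into subproblems, solve each one, "
    "then give the final answer.\nProblem: {q}"
)
REFINE = (
    "A previous attempt was wrong. Find the mistake and solve again.\n"
    "Problem: {q}\nPrevious solution: {prev}"
)

Sample = Tuple[str, bool]  # (model output, passed the verifier?)


def rollout(policy: Callable[[str], str], verify: Callable[[str, str], bool],
            prompt: str, gold: str, n: int) -> List[Sample]:
    """Sample n outputs for one prompt and score each with the verifier."""
    outputs = [policy(prompt) for _ in range(n)]
    return [(out, verify(out, gold)) for out in outputs]


def hierarchical_rollout(policy: Callable[[str], str],
                         verify: Callable[[str, str], bool],
                         question: str, gold: str,
                         n: int = 4) -> Tuple[str, List[Sample]]:
    """Fall through direct -> decompose -> refine while accuracy stays zero."""
    direct = rollout(policy, verify, DIRECT.format(q=question), gold, n)
    if any(ok for _, ok in direct):
        return "direct", direct
    decomposed = rollout(policy, verify, DECOMPOSE.format(q=question), gold, n)
    if any(ok for _, ok in decomposed):
        return "decompose", decomposed
    # Assumption: reference the first wrong solution from the previous stage.
    wrong = decomposed[0][0]
    refined = rollout(policy, verify,
                      REFINE.format(q=question, prev=wrong), gold, n)
    return "refine", refined


def toy_policy(prompt: str) -> str:
    """Stand-in for an LLM: only succeeds when shown a previous solution."""
    return "42" if "Previous solution" in prompt else "0"


def exact_match(output: str, gold: str) -> bool:
    return output.strip() == gold


stage, samples = hierarchical_rollout(toy_policy, exact_match,
                                      "What is 6 * 7?", "42", n=2)
print(stage, samples)
```

The early returns reflect the hierarchy: a later stage runs only for problems that remain at zero accuracy, so the extra prompting cost is spent only where the direct rollout yields nothing usable.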

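Key innovation: The novelty lies in the hierarchical metacognitive design. Instead of relying on a single fixed prompt template, the rollout escalates to decomposition and then to refinement for problems the policy cannot yet solve. According to the authors, this improves sample utilization and yields better results than existing methods on mathematical reasoning benchmarks.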

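Key design: To cold-start the two new reasoning patterns and keep training and testing consistent across prompt templates, Cog-Rethinker applies supervised fine-tuning (SFT) to the policy. The SFT data are the correct samples produced by the decomposition and refinement stages, paired with the direct rollout template. For problems that still have zero accuracy, previous wrong solutions are used as references when refining the answer.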
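One way to read the SFT step is as a re-pairing of data: correct responses from the two metacognitive stages are attached to the direct rollout prompt, so the policy learns to produce them without the special prompts. The sketch below illustrates that idea only; the `Sample` fields, the template text, and the `prompt`/`completion` record layout are assumptions rather than details from the paper.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical direct rollout template (the paper's wording is not given here).
DIRECT_TEMPLATE = "Solve the problem step by step.\nProblem: {q}"


@dataclass
class Sample:
    question: str
    stage: str      # "direct", "decompose", or "refine"
    response: str
    correct: bool


def build_sft_pairs(samples: List[Sample]) -> List[Dict[str, str]]:
    """Keep correct decompose/refine samples, re-prompted with the direct template."""
    pairs = []
    for s in samples:
        if s.correct and s.stage in ("decompose", "refine"):
            pairs.append({
                "prompt": DIRECT_TEMPLATE.format(q=s.question),
                "completion": s.response,
            })
    return pairs


collected = [
    Sample("What is 6 * 7?", "decompose", "6 * 7 = 42. Answer: 42", True),
    Sample("What is 6 * 7?", "refine", "Answer: 41", False),
    Sample("What is 2 + 2?", "direct", "Answer: 4", True),
    Sample("What is 9 - 3?", "refine", "Earlier I added; 9 - 3 = 6. Answer: 6", True),
]
sft_pairs = build_sft_pairs(collected)
print(len(sft_pairs))
```

Using the direct template for every SFT prompt matches the stated goal of train-test consistency: at test time the model sees only the direct template, so that is the prompt under which the new reasoning patterns need to appear.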

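📊 Experimental Highlights

The experiments report that Cog-Rethinker outperforms baseline methods on several mathematical reasoning benchmarks, with better sample efficiency and faster convergence. This summary does not give specific performance numbers.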

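🎯 Application Scenarios

Cog-Rethinker may be useful in settings that depend on efficient reasoning, such as education, automated question answering, and intelligent assistants. Because it makes better use of sampled rollouts, the framework can shorten RL training and improve the reasoning ability of the resulting model.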

📄 Abstract (original)

Contemporary progress in large language models (LLMs) has revealed notable inferential capacities via reinforcement learning (RL) employing verifiable rewards, facilitating the development of O1 and R1-like reasoning models. Directly training from base models with RL is called zero-RL. However, previous works rely upon activating LLMs' inherent capacities through fixed prompt templates. This strategy introduces substantial sampling inefficiencies for weak LLMs, as the majority of problems generate invalid outputs during accuracy-driven filtration in reasoning tasks, which causes a waste of samples. To solve this issue, we propose Cog-Rethinker, a novel hierarchical metacognitive RL framework for LLM reasoning. Our Cog-Rethinker mainly focuses on the rollout procedure in RL training. After the direct rollout, our Cog-Rethinker improves sample utilization in a hierarchical metacognitive two-stage framework. Drawing on human cognition during problem solving, it first prompts the policy to decompose zero-accuracy problems into subproblems to produce final reasoning results. Second, for problems that still have zero accuracy after the previous rollout stage, it further prompts the policy to refine these answers by referencing previous wrong solutions. Moreover, to enable cold-start of the two new reasoning patterns and maintain train-test consistency across prompt templates, our Cog-Rethinker applies supervised fine-tuning on the policy using correct samples of the two stages with the direct rollout template. Experimental results demonstrate Cog-Rethinker's superior performance on various mathematical reasoning benchmarks. We also analyze its improved sample efficiency, which accelerates convergence compared to baseline methods.