Weighted Conditional Flow Matching

Authors: Sergio Calvo-Ordonez, Matthieu Meunier, Alvaro Cartea, Christoph Reisinger, Yarin Gal, Jose Miguel Hernandez-Lobato

Category: cs.LG

Published: 2025-07-29 (updated: 2026-01-01)

Comments: Working paper. Changes to generalize the framework

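💡 One-Sentence Summary

Proposes Weighted Conditional Flow Matching (W-CFM), which weights training pairs with a Gibbs kernel to obtain straighter generation paths while keeping the computational cost of vanilla CFM.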

🎯 Matched Area: Pillar 2: RL Algorithms and Architecture (RL & Architecture)

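Keywords: conditional flow matching, generative models, optimal transport, entropic optimal transport, sample weighting, computational efficiency, deep learning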

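📋 Key Points

- Standard conditional flow matching (CFM) often produces paths that deviate significantly from straight-line interpolation between the prior and target distributions. Inference then needs fine discretization, which makes generation slower and less accurate.

- Weighted Conditional Flow Matching (W-CFM) modifies the classical CFM loss by weighting each training pair $(x, y)$ with a Gibbs kernel, avoiding the computationally expensive mini-batch optimal transport (OT) step used by recent alternatives.

- Experiments on synthetic and real datasets show that W-CFM achieves sample quality, fidelity, and diversity comparable or superior to other baselines while keeping the computational efficiency of vanilla CFM.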

📝 Abstract (Condensed)

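Conditional flow matching (CFM) is an effective framework for training continuous normalizing flows, but its generation paths often deviate from straight-line interpolation, which makes generation slower and less accurate. This paper proposes Weighted Conditional Flow Matching (W-CFM), which weights each training pair and thereby recovers the entropic optimal transport (EOT) coupling up to some bias in the marginals. The authors give conditions under which the marginals remain nearly unchanged, and they establish that W-CFM is equivalent to the mini-batch OT method in the large-batch limit, which shows how it overcomes the computational and performance bottlenecks linked to batch size. On unconditional generation across several synthetic and real datasets, W-CFM achieves sample quality, fidelity, and diversity comparable or superior to other baselines while keeping the computational efficiency of vanilla CFM.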

🔬 Method Details

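Problem definition: In standard conditional flow matching (CFM), the learned paths often deviate from straight-line interpolation between prior and target samples. Integrating such curved paths at inference requires fine discretization, so generation is slower and less accurate.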
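To make the discretization argument concrete, here is a minimal sketch that integrates two hand-written velocity fields with fixed-step Euler. Both fields are toy assumptions chosen for illustration, not learned models and not taken from the paper: `straight` moves a point along a line, and `curved` moves it between the same endpoints along a half circle.

```python
import numpy as np

def euler_integrate(velocity, x0, n_steps):
    """Integrate dx/dt = velocity(t, x) from t=0 to t=1 with fixed-step Euler."""
    x = np.asarray(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        x = x + dt * velocity(k * dt, x)
    return x

x0 = np.array([1.0, 0.0])       # toy "prior" sample (assumption)
target = np.array([-1.0, 0.0])  # toy "data" sample (assumption)

def straight(t, x):
    # Constant velocity along the segment from x0 to target.
    return target - x0

def curved(t, x):
    # Rotation about the origin by pi radians over t in [0, 1];
    # the exact flow also maps x0 to target, but along a half circle.
    return np.pi * np.array([-x[1], x[0]])

for n in (1, 4, 16, 64):
    err_straight = np.linalg.norm(euler_integrate(straight, x0, n) - target)
    err_curved = np.linalg.norm(euler_integrate(curved, x0, n) - target)
    print(f"steps={n:3d}  straight error={err_straight:.2e}  curved error={err_curved:.2e}")
```

With a constant velocity, a single Euler step already lands on the target, whereas the curved field needs many steps before the error becomes small. This is the sense in which straighter paths allow coarser discretization at inference.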

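Core idea: W-CFM weights each training pair $(x, y)$ with a Gibbs kernel. This weighting recovers the entropic optimal transport (EOT) coupling up to some bias in the marginals, which yields shorter, straighter generation paths without solving an OT problem per mini-batch.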
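The weighting can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions rather than the authors' implementation: the cost is assumed to be the squared Euclidean distance, `eps` is a hypothetical name for the kernel temperature, the interpolant is assumed linear, and dividing by the sum of weights is a choice made here for readability.

```python
import numpy as np

def gibbs_weights(x, y, eps):
    """Per-pair weight exp(-c(x, y) / eps); c is ASSUMED to be squared Euclidean distance."""
    cost = np.sum((x - y) ** 2, axis=1)
    return np.exp(-cost / eps)

def weighted_cfm_loss(velocity, x, y, t, eps):
    """Gibbs-weighted CFM loss on independently sampled pairs (x_i, y_i)."""
    t = t[:, None]                       # shape (n, 1) for broadcasting
    x_t = (1.0 - t) * x + t * y          # linear interpolant between x and y
    target = y - x                       # velocity of the linear interpolant
    residual = np.sum((velocity(t, x_t) - target) ** 2, axis=1)
    w = gibbs_weights(x, y, eps)
    # Self-normalised weighted average (normalisation is an assumption).
    return np.sum(w * residual) / np.sum(w)

rng = np.random.default_rng(0)
x = rng.normal(size=(256, 2))            # samples from a toy prior
y = rng.normal(size=(256, 2)) + 2.0      # samples from a toy target
t = rng.uniform(size=256)                # one time per pair

def zero_velocity(t, z):
    # Placeholder for a neural network v_theta(t, z).
    return np.zeros_like(z)

print("weighted loss:", weighted_cfm_loss(zero_velocity, x, y, t, eps=2.0))
print("near-unweighted loss:", weighted_cfm_loss(zero_velocity, x, y, t, eps=1e12))
```

Pairs that are far apart receive exponentially small weight, so training is dominated by short segments. As `eps` grows, every weight tends to 1 and the expression reduces to the ordinary CFM loss on independent pairs.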

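Technical framework: W-CFM has three parts: weighting of the training pairs, the correspondingly modified CFM loss, and an analysis of its equivalence to the mini-batch OT method. The training pipeline is otherwise that of vanilla CFM.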

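Key innovation: The weighting recovers the EOT coupling (up to some bias in the marginals) and so addresses the curved paths of standard CFM. In the large-batch limit W-CFM is equivalent to the mini-batch OT method, yet it avoids the computational and performance bottlenecks that mini-batch OT incurs as a function of batch size.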
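One way to read the "bias in the marginals" is through the standard form of the EOT plan. The notation below ($\mu$, $\nu$ for the prior and target, $c$ for the cost, $\varepsilon$ for the regularization strength, $f$, $g$ for the dual potentials, $Z$ for the normalizing constant) is assumed here for illustration and may differ from the paper's.

$$
\frac{d\pi_\varepsilon}{d(\mu \otimes \nu)}(x, y) = \exp\!\left(\frac{f(x) + g(y) - c(x, y)}{\varepsilon}\right),
\qquad
\frac{d\pi_w}{d(\mu \otimes \nu)}(x, y) = \frac{1}{Z} \exp\!\left(-\frac{c(x, y)}{\varepsilon}\right),
\qquad
Z = \iint \exp\!\left(-\frac{c(x, y)}{\varepsilon}\right) d\mu(x)\, d\nu(y).
$$

The Gibbs-weighted coupling $\pi_w$ has the same form as the EOT plan $\pi_\varepsilon$ but with constant potentials, so it is an EOT plan between its own marginals rather than between $\mu$ and $\nu$. Its first marginal $\tilde\mu$ satisfies

$$
\frac{d\tilde\mu}{d\mu}(x) = \frac{1}{Z} \int \exp\!\left(-\frac{c(x, y)}{\varepsilon}\right) d\nu(y),
$$

and symmetrically for the second marginal. Under this reading, the marginals stay close to $\mu$ and $\nu$ when these integrals are nearly constant in $x$ and in $y$ respectively.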

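Key design: The loss applies the Gibbs kernel as a per-pair weight on the classical CFM objective. According to this summary, hyperparameters were tuned per dataset to balance generation quality and computational cost.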

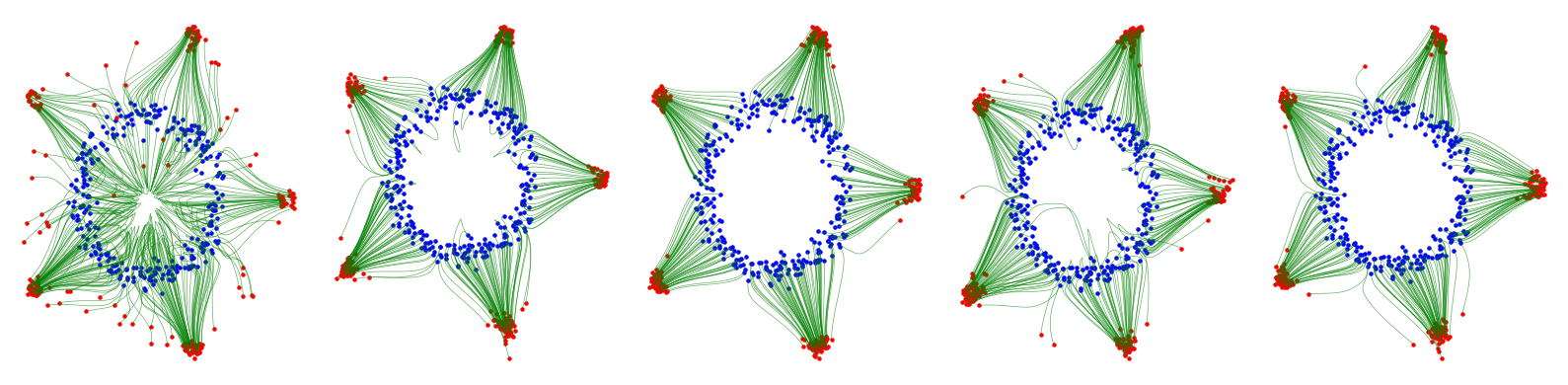

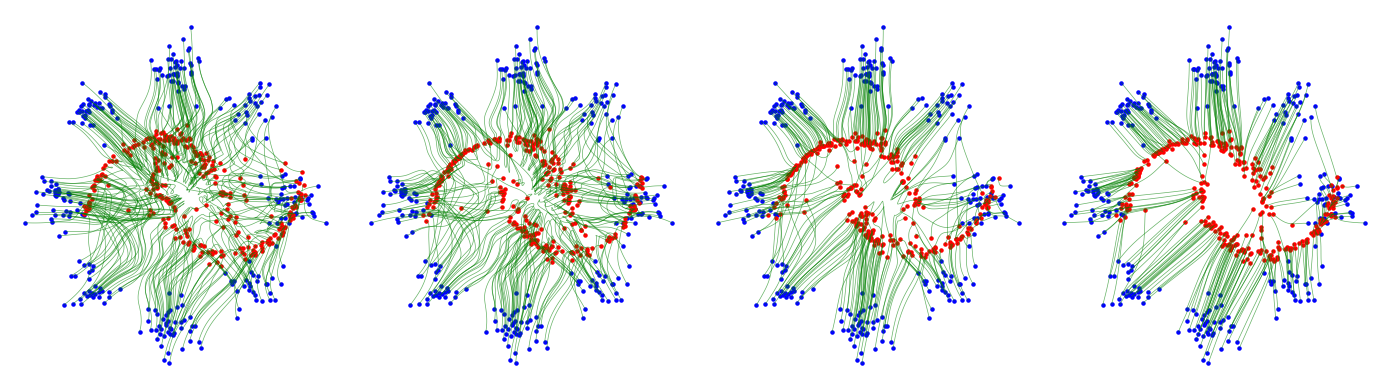

🖼️ 关键图片

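📊 Experimental Highlights

On several synthetic and real datasets, W-CFM achieves sample quality, fidelity, and diversity comparable or superior to other baselines, at the same computational cost as vanilla CFM.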

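🎯 Application Scenarios

Potential applications include image generation, data augmentation, and unsupervised learning. Because straighter paths need fewer integration steps at inference, W-CFM may suit settings that require fast generation of high-quality samples.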

📄 Abstract (Original)

Conditional flow matching (CFM) has emerged as a powerful framework for training continuous normalizing flows due to its computational efficiency and effectiveness. However, standard CFM often produces paths that deviate significantly from straight-line interpolations between prior and target distributions, making generation slower and less accurate due to the need for fine discretization at inference. Recent methods enhance CFM performance by inducing shorter and straighter trajectories but typically rely on computationally expensive mini-batch optimal transport (OT). Drawing insights from entropic optimal transport (EOT), we propose Weighted Conditional Flow Matching (W-CFM), a novel approach that modifies the classical CFM loss by weighting each training pair $(x, y)$ with a Gibbs kernel. We show that this weighting recovers the entropic OT coupling up to some bias in the marginals, and we provide the conditions under which the marginals remain nearly unchanged. Moreover, we establish an equivalence between W-CFM and the minibatch OT method in the large-batch limit, showing how our method overcomes computational and performance bottlenecks linked to batch size. Empirically, we test our method on unconditional generation on various synthetic and real datasets, confirming that W-CFM achieves comparable or superior sample quality, fidelity, and diversity to other alternative baselines while maintaining the computational efficiency of vanilla CFM.