CoT Information: Improved Sample Complexity under Chain-of-Thought Supervision

Authors: Awni Altabaa, Omar Montasser, John Lafferty

Categories: stat.ML, cs.LG

Published: 2025-05-21

💡 One-Sentence Summary

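Introduces the CoT information measure to obtain sharper sample complexity bounds for learning under chain-of-thought supervision.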

🎯 Matched Area: Pillar 9: Embodied Foundation Models

Keywords: chain-of-thought, multi-step reasoning, sample complexity, statistical learning, information theory

📋 Key Points

- 现有的标准监督学习方法在处理多步推理的复杂函数时面临显著挑战,难以有效学习。

- 本文提出了一种新的统计理论,明确了CoT风险与端到端风险之间的关系,从而提高样本复杂度界限。

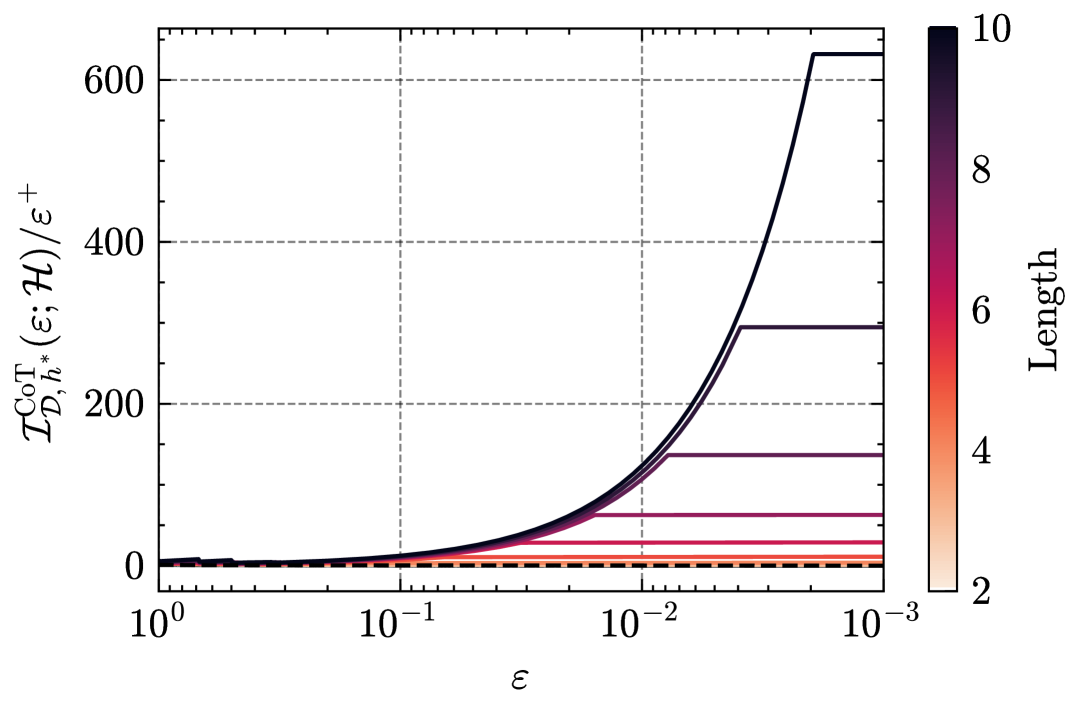

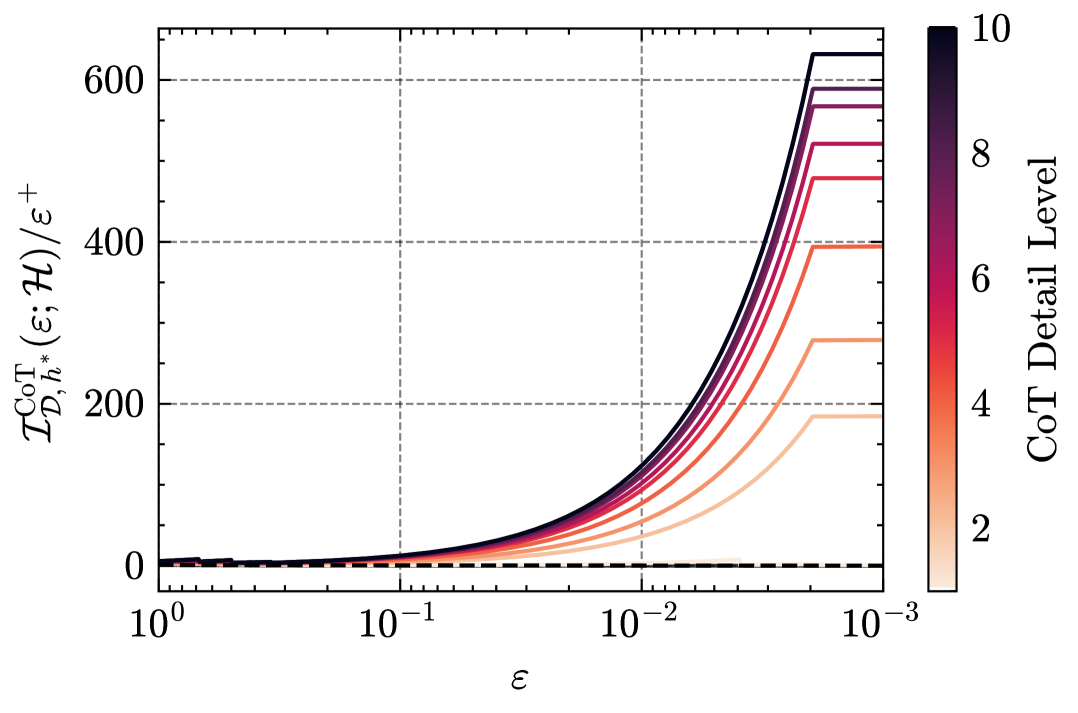

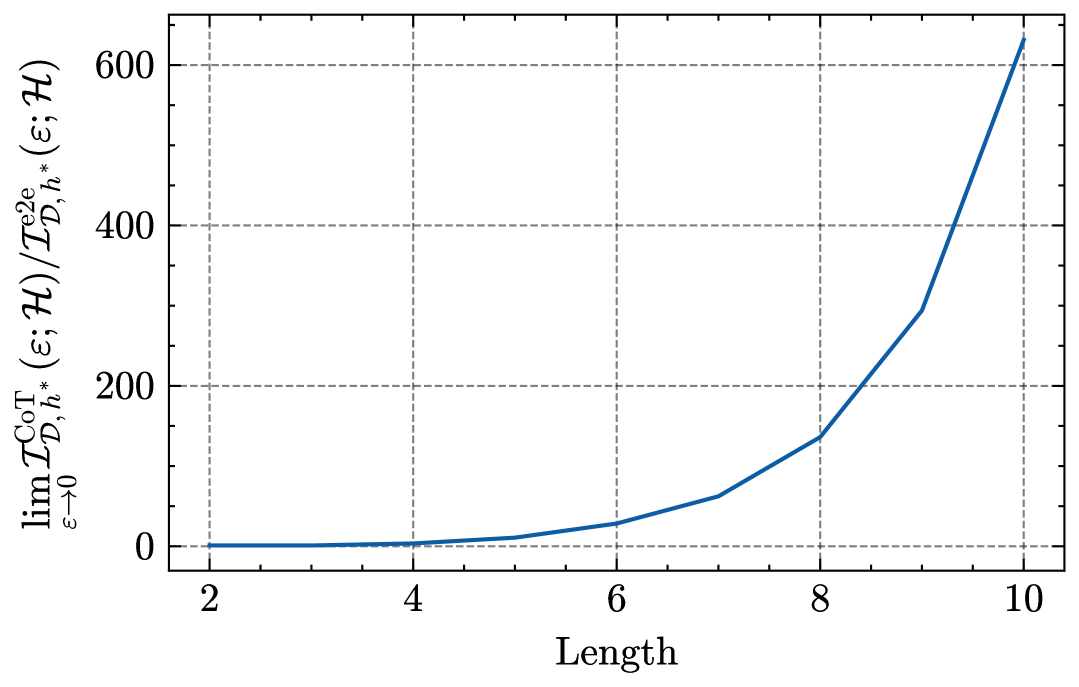

- 研究结果表明,CoT监督可以显著加快学习速率,样本复杂度的缩放速度优于传统方法。

📝 Abstract (Summary)

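Learning complex functions that involve multi-step reasoning poses a significant challenge for standard supervised learning. Chain-of-thought (CoT) supervision, which provides intermediate reasoning steps together with the final output, has become an effective technique for strengthening the reasoning capabilities of large language models. This paper develops a statistical theory of learning under CoT supervision that explicitly links the training objective (CoT risk) to the test objective (end-to-end risk), which leads to sharper sample complexity bounds. It introduces the CoT information measure, which quantifies the additional discriminative power gained from observing the reasoning process. The results show that CoT supervision can yield significantly faster learning rates than standard end-to-end supervision, with sample complexity scaling as $d/\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$ rather than $d/\epsilon$. Together with the accompanying lower bounds, this suggests that CoT information is a fundamental measure of statistical complexity for learning under CoT supervision.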

🔬 Method Details

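Problem definition: The paper addresses the high sample complexity of standard supervised learning on multi-step reasoning tasks. Learning from input-output examples alone makes no use of the intermediate reasoning steps, which makes learning inefficient.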

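Core idea: The paper studies learning from chain-of-thought (CoT) examples and explicitly analyzes the mismatch between the training objective (CoT risk) and the test objective (end-to-end risk), which yields sharper sample complexity bounds. The CoT information measure quantifies the additional discriminative power gained from observing the reasoning process, and it is this quantity that drives the faster learning rates.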
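To make the two objectives concrete, they can be written out as follows. This is a sketch under assumed notation: the split of a hypothesis $h$ into a reasoning component $h^{\mathrm{CoT}}$ and an answer component $h^{\mathrm{e2e}}$, and the symbols $\mathcal{R}^{\mathrm{CoT}}$, $\mathcal{R}^{\mathrm{e2e}}$, and $m(\epsilon)$, are illustrative choices rather than notation taken from the abstract. Only the final rate is stated in the abstract, and the symbol $\asymp$ hides constants and any logarithmic factors the abstract does not specify.

$$
\begin{aligned}
\mathcal{R}^{\mathrm{e2e}}_{\mathcal{D}}(h) &= \Pr_{x \sim \mathcal{D}}\big[\, h^{\mathrm{e2e}}(x) \neq h_\star^{\mathrm{e2e}}(x) \,\big], \\
\mathcal{R}^{\mathrm{CoT}}_{\mathcal{D}}(h) &= \Pr_{x \sim \mathcal{D}}\big[\, \big(h^{\mathrm{CoT}}(x),\, h^{\mathrm{e2e}}(x)\big) \neq \big(h_\star^{\mathrm{CoT}}(x),\, h_\star^{\mathrm{e2e}}(x)\big) \,\big], \\
\mathcal{R}^{\mathrm{e2e}}_{\mathcal{D}}(h) &\le \mathcal{R}^{\mathrm{CoT}}_{\mathcal{D}}(h), \\
m(\epsilon) &\asymp \frac{d}{\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})} \quad \text{in place of the standard} \quad \frac{d}{\epsilon}.
\end{aligned}
$$

The inequality in the third line holds because any disagreement on the final answer is also a disagreement on the (reasoning, answer) pair. The gap between the two risks is what lets CoT-supervised samples rule out a hypothesis faster than input-output pairs alone.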

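Technical framework: The setting has two stages: the model is trained under CoT supervision and then evaluated on the end-to-end task. The key components of the analysis are the CoT information measure and the sample complexity bounds stated in terms of it.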
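The train/test mismatch described above can be illustrated with a toy computation. The following is a minimal sketch, not the paper's method: the functions `h_star` and `h_bad`, the representation of a hypothesis as a function returning a `(trace, answer)` pair, and the eight-point sample are all hypothetical choices made for illustration.

```python
from typing import Callable, Sequence, Tuple

# Assumption: a hypothesis maps an input to (reasoning trace, final answer).
Hypothesis = Callable[[int], Tuple[int, int]]


def empirical_risks(
    h: Hypothesis, h_star: Hypothesis, xs: Sequence[int]
) -> Tuple[float, float]:
    """Return (empirical CoT risk, empirical end-to-end risk) of h against h_star."""
    cot_errors = 0
    e2e_errors = 0
    for x in xs:
        z, y = h(x)
        z_star, y_star = h_star(x)
        if y != y_star:  # end-to-end error: the final answer differs
            e2e_errors += 1
        if (z, y) != (z_star, y_star):  # CoT error: trace or answer differs
            cot_errors += 1
    n = len(xs)
    return cot_errors / n, e2e_errors / n


def h_star(x: int) -> Tuple[int, int]:
    # Hypothetical reference hypothesis: trace is x mod 4, answer is the parity of x.
    return (x % 4, x % 2)


def h_bad(x: int) -> Tuple[int, int]:
    # Hypothetical competitor: constant trace, answer wrong only at x == 1.
    return (0, 0 if x == 1 else x % 2)


xs = list(range(8))
cot_risk, e2e_risk = empirical_risks(h_bad, h_star, xs)
print(cot_risk, e2e_risk)  # 0.75 0.125
```

In this toy case `h_bad` gives the wrong answer on only one of eight inputs but disagrees with the reference trace on six of them, so samples that include the trace expose it much more often than input-output pairs would.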

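Key innovation: The central contribution is the CoT information measure $\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$, which makes the link between CoT risk and end-to-end risk explicit. With it, the sample complexity is of order $d/\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$, which can be much smaller than the standard $d/\epsilon$ rate.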

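Key design: Training optimizes the CoT risk, while the guarantees are stated for the end-to-end risk. The paper also derives information-theoretic lower bounds expressed in terms of the CoT information, complementing the upper bounds on sample complexity.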

🖼️ Key Figures

📊 Key Results

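The main results are theoretical. The number of samples needed to reach a target end-to-end error $\epsilon$ scales as $d/\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$, where $d$ measures the complexity of the hypothesis class. This can be much smaller than the standard $d/\epsilon$ rate. Information-theoretic lower bounds in terms of the CoT information support its role as a fundamental measure of statistical complexity in this setting.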
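The two rates can be compared numerically with a small helper. This is an illustrative sketch only: the function `samples_needed` and the values of `d`, `eps`, and `cot_info` are hypothetical, and the calculation ignores the constants and any logarithmic factors that the actual bounds may carry.

```python
import math


def samples_needed(d: float, rate: float) -> int:
    """Sample size of order d / rate, ignoring constants and log factors."""
    if d <= 0 or rate <= 0:
        raise ValueError("d and rate must be positive")
    return math.ceil(d / rate)


# Hypothetical values chosen for illustration; none come from the paper.
d = 16           # complexity of the hypothesis class
eps = 0.125      # target end-to-end error
cot_info = 0.5   # assumed CoT information at this eps

e2e_samples = samples_needed(d, eps)       # standard d / eps rate
cot_samples = samples_needed(d, cot_info)  # d / CoT-information rate
print(e2e_samples, cot_samples)  # 128 32
```

The size of the saving depends entirely on how much larger the CoT information is than $\epsilon$ for the problem at hand; the value used here is an assumption, not a reported result.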

🎯 Application Scenarios

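Potential application areas include natural language processing, question answering systems, and complex decision support systems. Making learning on multi-step reasoning tasks more sample-efficient could translate into faster and more accurate reasoning in deployed systems.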

📄 Abstract (Original)

Learning complex functions that involve multi-step reasoning poses a significant challenge for standard supervised learning from input-output examples. Chain-of-thought (CoT) supervision, which provides intermediate reasoning steps together with the final output, has emerged as a powerful empirical technique, underpinning much of the recent progress in the reasoning capabilities of large language models. This paper develops a statistical theory of learning under CoT supervision. A key characteristic of the CoT setting, in contrast to standard supervision, is the mismatch between the training objective (CoT risk) and the test objective (end-to-end risk). A central part of our analysis, distinguished from prior work, is explicitly linking those two types of risk to achieve sharper sample complexity bounds. This is achieved via the CoT information measure $\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$, which quantifies the additional discriminative power gained from observing the reasoning process. The main theoretical results demonstrate how CoT supervision can yield significantly faster learning rates compared to standard E2E supervision. Specifically, it is shown that the sample complexity required to achieve a target E2E error $\epsilon$ scales as $d/\mathcal{I}_{\mathcal{D}, h_\star}^{\mathrm{CoT}}(\epsilon; \mathcal{H})$, where $d$ is a measure of hypothesis class complexity, which can be much faster than standard $d/\epsilon$ rates. Information-theoretic lower bounds in terms of the CoT information are also obtained. Together, these results suggest that CoT information is a fundamental measure of statistical complexity for learning under chain-of-thought supervision.