Adapt-Pruner: Adaptive Structural Pruning for Efficient Small Language Model Training

Authors: Rui Pan, Shivanshu Shekhar, Boyao Wang, Shizhe Diao, Jipeng Zhang, Xingyuan Pan, Renjie Pi, Tong Zhang

Categories: cs.LG, cs.AI, cs.CL

Published: 2025-02-05 (updated: 2025-11-14)

🔗 Code/Project: GitHub (https://github.com/research4pan/AdaptPruner)

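💡 One-line summary

Proposes Adapt-Pruner to make training small language models more efficient.

🎯 Matched area: Pillar 9: Embodied Foundation Models

Keywords: small language models, adaptive pruning, model training, performance improvement, edge computing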

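📋 Key points

- Existing ways of obtaining small language models either pre-train them from scratch, which is computationally expensive, or prune existing large models, which degrades performance.

- The proposed Adapt-Pruner combines layer-wise adaptive pruning with incremental pruning, which interleaves small pruning steps with training, to improve the performance of pruned models.

- On LLaMA-3.1-8B, Adapt-Pruner improves average accuracy on commonsense benchmarks by 1%-7% over conventional pruning methods, and a new 1B model obtained with it surpasses LLaMA-3.2-1B on multiple benchmarks.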

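📝 Abstract (summary, translated)

Small language models (SLMs) have attracted attention from both academia and industry because of their wide range of applications on edge devices. Conventional approaches either pre-train models from scratch, which incurs high computational cost, or compress/prune existing large language models (LLMs), which degrades performance. This paper studies a family of acceleration methods that combine structured pruning with model training and proposes layer-wise adaptive pruning (Adapt-Pruner), which proves highly effective on LLMs and clearly outperforms existing pruning techniques. With further training, adaptively pruned models become comparable to models pre-trained from scratch, and incremental pruning, which interleaves pruning with training and removes only about 5% of neurons at a time, brings additional gains. Experimental results show that Adapt-Pruner outperforms conventional pruning methods on multiple benchmarks, demonstrating its potential for training small language models.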

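🔬 Method details

Problem definition: The paper targets the high computational cost and the performance degradation involved in obtaining small language models. Existing methods that compress large language models often suffer significant performance drops and fall short of models pre-trained from scratch.

Core idea: Adapt-Pruner applies layer-wise adaptive pruning and combines it with an incremental strategy that interleaves pruning with training, removing a small fraction of the less important neurons at each step. The design aims to reduce computational cost while preserving model performance.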

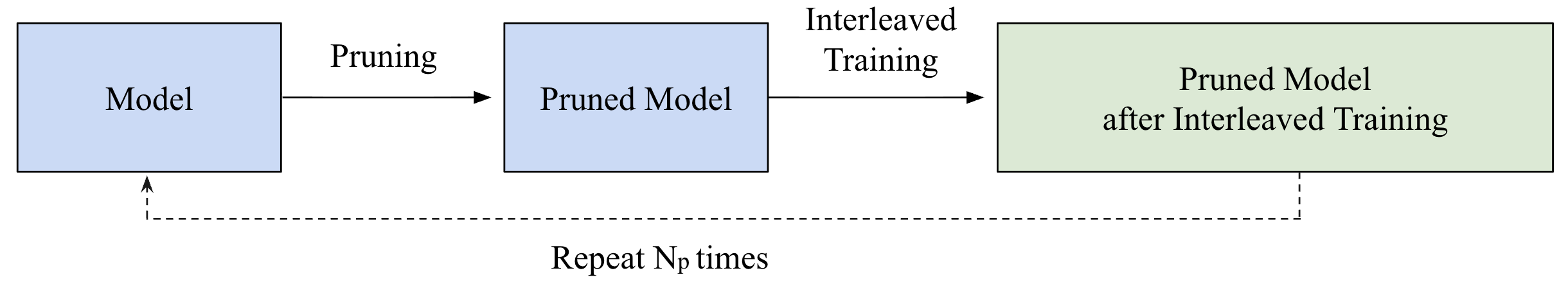

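Technical framework: The Adapt-Pruner pipeline has three components: layer-wise adaptive pruning, which decides how much to prune in each layer; incremental pruning, which interleaves small pruning steps with training; and further training after pruning to recover and improve model performance.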
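The interleaving of pruning and training described above can be sketched as a simple loop. This is a minimal sketch under stated assumptions, not the released implementation: layers are represented only by their neuron counts, each step removes about 5% of a layer's original width (the summary does not say whether the 5% is relative to the original or the current size), `train` is a hypothetical callback standing in for a training phase, and the per-layer target sparsities are assumed to come from the adaptive allocation step.

```python
from typing import Callable, List, Tuple


def incremental_prune(
    widths: List[int],
    sparsities: List[float],
    train: Callable[[List[int]], None],
    step: float = 0.05,
) -> Tuple[List[int], int]:
    """Shrink each layer toward its target width in small steps, training in between.

    widths:     original neuron count per layer
    sparsities: target pruning ratio per layer (may differ across layers)
    train:      hypothetical callback that trains the model at its current size
    step:       fraction of each layer's ORIGINAL width removed per step (assumption)
    """
    targets = [round(w * (1 - s)) for w, s in zip(widths, sparsities)]
    per_step = [max(1, round(w * step)) for w in widths]
    current = list(widths)
    n_steps = 0
    while any(c > t for c, t in zip(current, targets)):
        # Remove a small slice from every layer that has not reached its target yet.
        current = [max(t, c - s) for c, t, s in zip(current, targets, per_step)]
        train(current)  # let the model recover before the next pruning step
        n_steps += 1
    return current, n_steps


# Usage: two layers of 4096 neurons, pruned to 50% and 25% sparsity respectively.
history: List[List[int]] = []
final, steps = incremental_prune([4096, 4096], [0.5, 0.25], train=history.append)
print(final, steps)  # [2048, 3072] 10
```

In this sketch a final round of further training would follow the loop, and the loop only tracks layer sizes; choosing which neurons to drop at each step is left to a separate importance criterion.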

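Key innovation: The core innovation of Adapt-Pruner is its layer-wise adaptive pruning strategy, which sets the pruning ratio of each layer individually instead of pruning all layers uniformly, and which substantially reduces performance loss compared with conventional methods.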
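One way to turn per-layer importance into per-layer pruning ratios is sketched below. Both pieces are assumptions for illustration and not necessarily the paper's exact formulas: importance is taken as one minus the cosine similarity between a layer's input and output hidden states (a layer that barely transforms its input counts as less important), and a linear mapping with a hypothetical `spread` parameter assigns higher ratios to less important layers. Because the importance scores are centred before mapping, the mean ratio equals the overall target when all layers have the same size and no clipping occurs.

```python
import numpy as np


def layer_importance(h_in: np.ndarray, h_out: np.ndarray) -> float:
    """Assumed score: 1 - mean cosine similarity between a layer's input and output.

    h_in, h_out: hidden states of shape (num_tokens, hidden_dim).
    """
    dots = np.sum(h_in * h_out, axis=-1)
    norms = np.linalg.norm(h_in, axis=-1) * np.linalg.norm(h_out, axis=-1)
    cos = dots / np.maximum(norms, 1e-8)
    return float(1.0 - cos.mean())


def allocate_sparsity(importance, target: float, spread: float = 0.1) -> np.ndarray:
    """Give less important layers higher pruning ratios, centred on `target`.

    The layer whose importance is farthest from the mean is shifted by exactly
    `spread`; the others are shifted proportionally. Results are clipped to [0, 1].
    """
    imp = np.asarray(importance, dtype=float)
    centered = imp - imp.mean()
    scale = np.abs(centered).max()
    if scale == 0:  # all layers equally important: fall back to uniform pruning
        return np.full_like(imp, target)
    return np.clip(target - spread * centered / scale, 0.0, 1.0)


# Usage: a layer whose output equals its input gets importance close to 0.
rng = np.random.default_rng(0)
h = rng.normal(size=(16, 32))
print(abs(layer_importance(h, h)) < 1e-6)  # True
print(np.round(allocate_sparsity([0.1, 0.2, 0.3], target=0.5), 3))  # [0.6 0.5 0.4]
```

The linear mapping is chosen here only because it keeps the average ratio fixed; any monotone mapping from importance to sparsity would fit the same idea.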

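Key design: Adapt-Pruner removes only about 5% of neurons at each pruning step and interleaves these steps with training, so that the model can recover before more neurons are removed.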
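To make "removing about 5% of neurons" concrete, the sketch below drops intermediate neurons from a LLaMA-style gated MLP, assuming weights `gate` and `up` of shape `(d_ff, d_model)` and `down` of shape `(d_model, d_ff)`. Removing neuron `j` deletes row `j` of `gate` and `up` and column `j` of `down`, so the layer genuinely shrinks rather than being masked. The weight-norm score is a simple stand-in chosen for illustration, not the importance criterion used by Adapt-Pruner.

```python
import numpy as np


def silu(z: np.ndarray) -> np.ndarray:
    return z / (1.0 + np.exp(-z))


def mlp_forward(x, gate, up, down):
    """Gated MLP applied to each row of x: (silu(x gate^T) * (x up^T)) down^T."""
    return (silu(x @ gate.T) * (x @ up.T)) @ down.T


def prune_mlp_neurons(gate, up, down, frac: float = 0.05):
    """Structurally remove the `frac` lowest-scoring intermediate neurons.

    Stand-in score: sum of the L2 norms of each neuron's weights in gate, up and down.
    """
    d_ff = gate.shape[0]
    n_remove = max(1, round(frac * d_ff))
    scores = (np.linalg.norm(gate, axis=1) + np.linalg.norm(up, axis=1)
              + np.linalg.norm(down, axis=0))
    keep = np.sort(np.argsort(scores)[n_remove:])  # keep the rest, in original order
    return gate[keep], up[keep], down[:, keep]


# Usage: zero out 5 of 100 neurons; pruning 5% removes exactly those, so outputs match.
rng = np.random.default_rng(0)
d_model, d_ff = 16, 100
gate = rng.normal(size=(d_ff, d_model))
up = rng.normal(size=(d_ff, d_model))
down = rng.normal(size=(d_model, d_ff))
gate[:5] = 0.0
up[:5] = 0.0
down[:, :5] = 0.0

g2, u2, d2 = prune_mlp_neurons(gate, up, down, frac=0.05)
x = rng.normal(size=(4, d_model))
print(g2.shape, d2.shape)  # (95, 16) (16, 95)
print(np.allclose(mlp_forward(x, gate, up, down), mlp_forward(x, g2, u2, d2)))  # True
```

Because the removed rows and columns are physically deleted, the pruned layer uses fewer parameters and less compute, which is what distinguishes structured pruning from unstructured weight masking.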


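📊 Experimental highlights

On LLaMA-3.1-8B, Adapt-Pruner improves average accuracy on commonsense benchmarks by 1%-7% over conventional pruning methods such as LLM-Pruner, FLAP, and SliceGPT. In addition, by pruning from its larger counterparts, Adapt-Pruner raises the MMLU performance of MobileLLM-125M to the level of MobileLLM-600M while using 200× fewer tokens, and it discovers a new 1B model that surpasses LLaMA-3.2-1B on multiple benchmarks.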

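🎯 Application scenarios

Potential applications include natural language processing on edge devices, mobile devices, and other resource-constrained environments. By making small language models cheaper to train, Adapt-Pruner can provide more efficient solutions for practical deployments and help bring language models to more smart devices.

📄 Abstract (original)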

Small language models (SLMs) have attracted considerable attention from both academia and industry due to their broad range of applications in edge devices. To obtain SLMs with strong performance, conventional approaches either pre-train the models from scratch, which incurs substantial computational costs, or compress/prune existing large language models (LLMs), which results in performance drops and falls short in comparison to pre-training. In this paper, we investigate the family of acceleration methods that involve both structured pruning and model training. We found 1) layer-wise adaptive pruning (Adapt-Pruner) is extremely effective in LLMs and yields significant improvements over existing pruning techniques, 2) adaptive pruning equipped with further training leads to models comparable to those pre-training from scratch, 3) incremental pruning brings non-trivial performance gain by interleaving pruning with training and only removing a small portion of neurons (~5%) at a time. Experimental results on LLaMA-3.1-8B demonstrate that Adapt-Pruner outperforms conventional pruning methods, such as LLM-Pruner, FLAP, and SliceGPT, by an average of 1%-7% in accuracy on commonsense benchmarks. Additionally, Adapt-Pruner restores the performance of MobileLLM-125M to 600M on the MMLU benchmark with 200× fewer tokens via pruning from its larger counterparts, and discovers a new 1B model that surpasses LLaMA-3.2-1B in multiple benchmarks. The official code is released at https://github.com/research4pan/AdaptPruner.