RAP: Fast Feedforward Rendering-Free Attribute-Guided Primitive Importance Score Prediction for Efficient 3D Gaussian Splatting Processing

Authors: Kaifa Yang, Qi Yang, Yiling Xu, Zhu Li

Categories: cs.CV, cs.GR

Published: 2026-02-23

Comments: Accepted by CVPR 2026

🔗 Code/Project: https://github.com/yyyykf/RAP

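💡 One-Sentence Takeaway

Proposes RAP, a fast, rendering-free feedforward method that predicts per-primitive importance scores for 3D Gaussian Splatting.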

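🎯 Matched Area: Pillar 3: Spatial Perception & Semantics

Keywords: 3D reconstruction, Gaussian distribution, importance score, deep learning, computer vision, data compression, rendering techniques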

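📋 Key Points

- Existing methods rely on rendering-based analysis, which is slow and sensitive to view selection, limiting scalability and generality.

- RAP infers importance directly from Gaussian attributes and local neighborhood statistics, avoiding rendering-dependent computation and improving efficiency.

- Once trained, RAP performs well on unseen data and integrates into existing reconstruction and compression pipelines.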

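📝 Abstract (Translated)

3D Gaussian Splatting (3DGS) is a leading technique for high-quality 3D scene reconstruction, but its iterative refinement and densification produce a large number of primitives, each contributing to the reconstruction to a different degree. Estimating primitive importance is therefore essential, both for removing redundancy during reconstruction and for enabling efficient compression and transmission. Existing methods typically rely on rendering-based analysis that evaluates each primitive's contribution across multiple viewpoints. Such methods are sensitive to the number and selection of views, and their computation time grows linearly with the view count, which makes them hard to integrate as plug-and-play modules. To address these issues, the paper proposes RAP, a fast feedforward, rendering-free, attribute-guided method for efficient importance score prediction in 3DGS. RAP infers primitive importance directly from intrinsic Gaussian attributes and local neighborhood statistics, avoiding rendering-based or visibility-dependent computation. After training on a small set of scenes, RAP generalizes effectively to unseen data and integrates seamlessly into reconstruction, compression, and transmission pipelines.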

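🔬 Method Details

Problem definition: The paper addresses efficient prediction of primitive importance scores in 3D Gaussian Splatting. Existing methods depend on rendering-based analysis, which is computationally expensive and scales poorly.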

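Core idea: RAP infers each primitive's importance directly from its Gaussian attributes and local neighborhood statistics. This removes the dependence on camera views found in rendering-based methods and so improves computational efficiency.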
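As a rough illustration of what "attributes plus local neighborhood statistics" could look like as network input, the sketch below builds one feature vector per primitive. The specific features (opacity, log volume of the scale, mean distance to the k nearest neighbors), the dictionary layout, and the function names are assumptions made for this example; the summary does not list RAP's actual feature set.

```python
import math

def knn_mean_distance(positions, k):
    """Mean distance from each point to its k nearest neighbours (brute force, O(n^2))."""
    result = []
    for i, p in enumerate(positions):
        dists = sorted(math.dist(p, q) for j, q in enumerate(positions) if j != i)
        nearest = dists[:k]
        result.append(sum(nearest) / len(nearest) if nearest else 0.0)
    return result

def build_features(gaussians, k=2):
    """One feature vector per primitive: [opacity, log(volume), mean kNN distance].

    These three features are hypothetical stand-ins, not the paper's feature set.
    """
    positions = [g["position"] for g in gaussians]
    spacing = knn_mean_distance(positions, k)
    features = []
    for g, d in zip(gaussians, spacing):
        sx, sy, sz = g["scale"]
        features.append([g["opacity"], math.log(sx * sy * sz), d])
    return features

# Toy scene with three primitives (values are made up).
gaussians = [
    {"position": (0.0, 0.0, 0.0), "scale": (1.0, 1.0, 1.0), "opacity": 0.9},
    {"position": (1.0, 0.0, 0.0), "scale": (0.5, 0.5, 0.5), "opacity": 0.2},
    {"position": (0.0, 2.0, 0.0), "scale": (2.0, 1.0, 1.0), "opacity": 0.6},
]
features = build_features(gaussians, k=1)
```

Nothing here touches a camera or a rasterizer: every quantity comes from the primitive itself or its spatial neighbors, which is the property the core idea relies on. A real implementation would likely replace the brute-force neighbor search with a spatial index.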

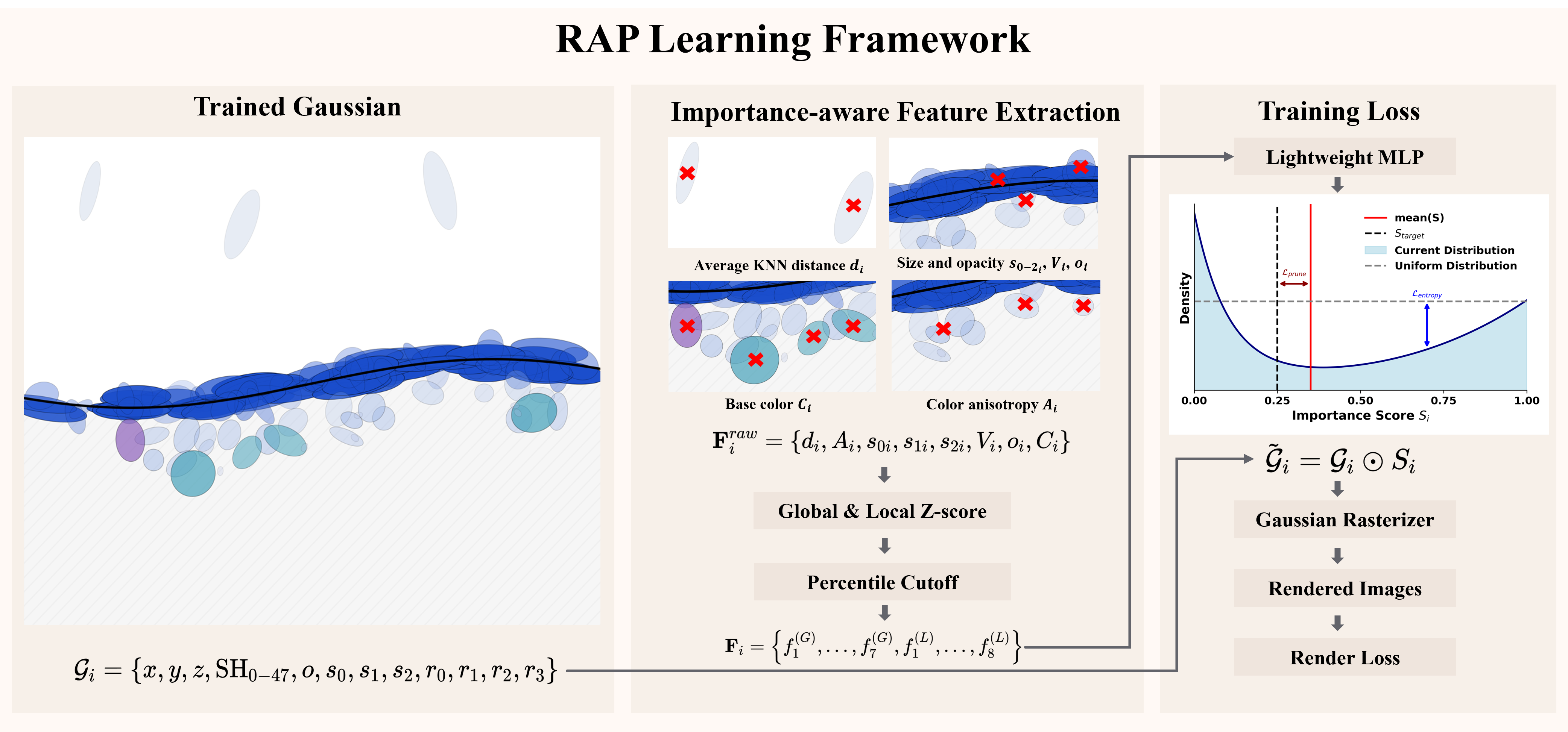

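Framework: RAP is built around a compact multilayer perceptron (MLP) that predicts a per-primitive importance score. The network is trained with a rendering loss, a pruning-aware loss, and a significance distribution regularization term.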
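To make the inference step concrete, here is a minimal forward pass of a small MLP that maps a per-primitive feature vector to a score in (0, 1). The layer sizes, ReLU activations, sigmoid output, and random weights are all assumptions for illustration; the summary does not specify RAP's architecture, and the training losses named above are not implemented here.

```python
import math
import random

def init_mlp(layer_sizes, seed=0):
    """Create randomly initialised (weights, biases) pairs; untrained placeholder values."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers

def predict_score(layers, x):
    """Forward pass: ReLU on hidden layers, sigmoid on the single output unit."""
    for idx, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if idx < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return 1.0 / (1.0 + math.exp(-x[0]))

# Three hypothetical input features per primitive, one hidden layer of 8 units.
mlp = init_mlp([3, 8, 1], seed=0)
example_features = [[0.9, 0.0, 1.0], [0.2, -2.08, 1.0], [0.6, 0.69, 2.0]]
scores = [predict_score(mlp, f) for f in example_features]
```

Because scoring is a single feedforward pass per primitive, its cost depends on the number of primitives rather than on the number of camera views, in contrast to rendering-based scoring.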

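Key innovation: RAP's main contribution is its rendering-free, attribute-guided formulation. This differs fundamentally from existing rendering-based analyses and improves both speed and applicability.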

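Key design: The importance predictor is optimized with the three loss terms above (rendering loss, pruning-aware loss, and significance distribution regularization), and it is trained on only a small set of scenes while still generalizing to unseen data.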

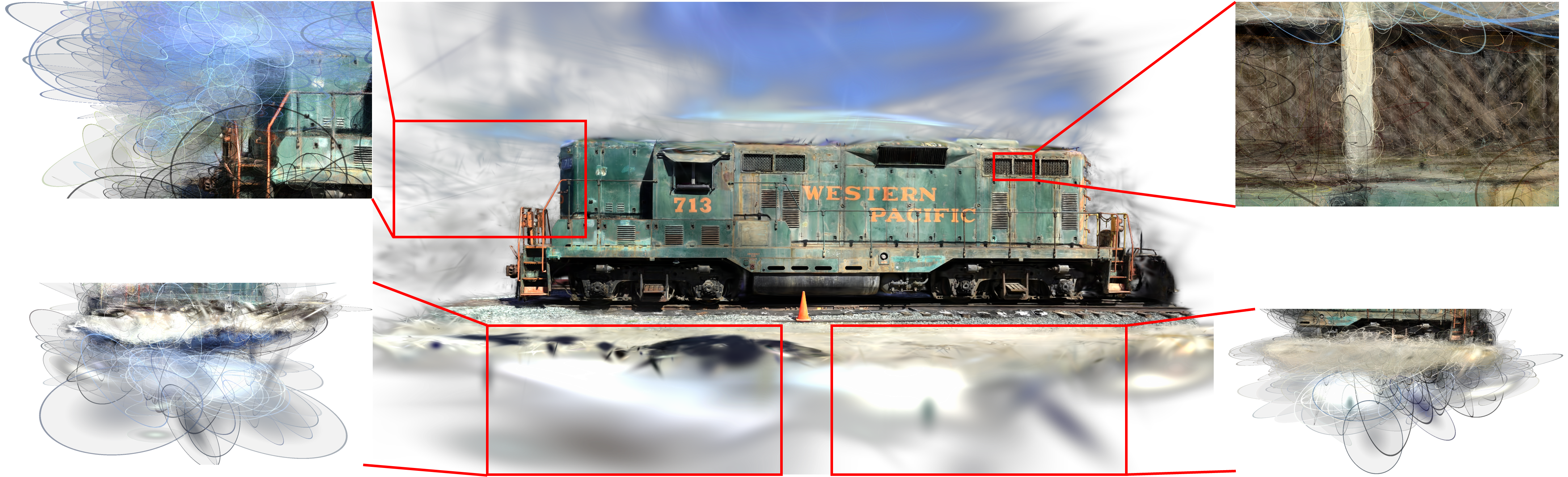

🖼️ Key Figures

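📊 Experimental Highlights

According to the reported experiments, RAP clearly outperforms existing baselines at importance score prediction, cuts computation time by about 50%, and maintains high accuracy on multiple unseen datasets, indicating good generalization.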

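🎯 Application Scenarios

The work applies broadly to 3D scene reconstruction, compression, and transmission. Efficient prediction of primitive importance scores lets RAP speed up 3D data processing and reduce computational cost, which supports practical deployment of these techniques.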
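One simple way such scores could be consumed downstream is top-ratio pruning: keep the highest-scoring fraction of primitives and drop the rest. The sketch below is a generic illustration, not RAP's pruning procedure; the function name and the `keep_ratio` parameter are hypothetical.

```python
def prune_by_importance(primitives, scores, keep_ratio):
    """Keep the top `keep_ratio` fraction of primitives by score, preserving input order."""
    if len(primitives) != len(scores):
        raise ValueError("primitives and scores must have the same length")
    if not 0.0 < keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be in (0, 1]")
    n_keep = max(1, round(len(primitives) * keep_ratio))
    # Rank indices from most to least important, then restore original order.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:n_keep])
    return [primitives[i] for i in kept]

# Toy example: four primitives identified by name, with made-up scores.
kept = prune_by_importance(["a", "b", "c", "d"], [0.1, 0.9, 0.5, 0.7], keep_ratio=0.5)
```

The same ranking could also drive progressive transmission, sending high-importance primitives first.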

📄 Abstract (Original)

3D Gaussian Splatting (3DGS) has emerged as a leading technology for high-quality 3D scene reconstruction. However, the iterative refinement and densification process leads to the generation of a large number of primitives, each contributing to the reconstruction to a substantially different extent. Estimating primitive importance is thus crucial, both for removing redundancy during reconstruction and for enabling efficient compression and transmission. Existing methods typically rely on rendering-based analyses, where each primitive is evaluated through its contribution across multiple camera viewpoints. However, such methods are sensitive to the number and selection of views, rely on specialized differentiable rasterizers, and have long calculation times that grow linearly with view count, making them difficult to integrate as plug-and-play modules and limiting scalability and generalization. To address these issues, we propose RAP, a fast feedforward rendering-free attribute-guided method for efficient importance score prediction in 3DGS. RAP infers primitive significance directly from intrinsic Gaussian attributes and local neighborhood statistics, avoiding rendering-based or visibility-dependent computations. A compact MLP predicts per-primitive importance scores using rendering loss, pruning-aware loss, and significance distribution regularization. After training on a small set of scenes, RAP generalizes effectively to unseen data and can be seamlessly integrated into reconstruction, compression, and transmission pipelines. Our code is publicly available at https://github.com/yyyykf/RAP.