SpatialReasoner: Towards Explicit and Generalizable 3D Spatial Reasoning

作者: Wufei Ma, Yu-Cheng Chou, Qihao Liu, Xingrui Wang, Celso de Melo, Jianwen Xie, Alan Yuille

分类: cs.CV

发布日期: 2025-04-28 (更新: 2025-06-10)

备注: Project page: https://spatial-reasoner.github.io

💡 一句话要点

提出SpatialReasoner以解决3D空间推理问题

🎯 匹配领域: 支柱九:具身大模型 (Embodied Foundation Models)

关键词: 3D空间推理 显式表示 多模态模型 视觉语言模型 推理能力 泛化能力 机器人导航 增强现实

📋 核心要点

- 现有的多模态模型在3D空间推理上表现不佳,尤其是在处理人类容易理解的问题时。

- SpatialReasoner通过显式3D表示在多个推理阶段中共享信息,提升了空间推理的准确性和泛化能力。

- 实验结果显示,SpatialReasoner在3DSRBench基准上超越了Gemini 2.0,表现出更强的泛化能力。

📝 摘要(中文)

尽管多模态模型取得了显著进展,3D空间推理仍然是一个挑战性任务。现有方法通常以隐式方式进行空间推理,导致在一些简单问题上表现不佳。本文提出SpatialReasoner,一个新型的大型视觉语言模型,通过在多个阶段共享显式3D表示,增强了3D空间推理的能力,并提高了对新问题类型的泛化能力。实验结果表明,SpatialReasoner在多个空间推理基准上表现优异,超越了Gemini 2.0,提升幅度达到9.2%。

🔬 方法详解

问题定义:本文旨在解决当前多模态模型在3D空间推理中的不足,尤其是隐式推理导致的准确性低下和泛化能力不足的问题。

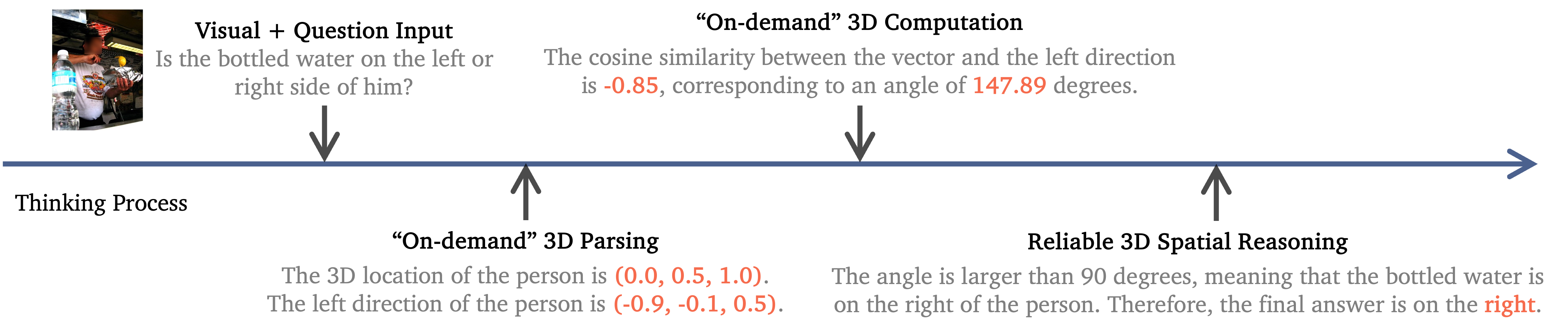

核心思路:SpatialReasoner的核心思想是通过显式3D表示来增强空间推理能力,这种设计使得模型能够在多个推理阶段中共享和利用3D信息。

技术框架:该模型包括三个主要阶段:3D感知、计算和推理。在3D感知阶段,模型提取场景的3D信息;在计算阶段,进行必要的空间计算;在推理阶段,基于3D表示进行逻辑推理。

关键创新:SpatialReasoner的最大创新在于引入显式3D表示,这与现有方法的隐式推理方式形成鲜明对比,显著提高了推理的准确性和效率。

关键设计:模型在参数设置上进行了优化,采用了特定的损失函数来强化3D表示的学习,同时在网络结构上设计了适合3D信息处理的模块。具体细节包括对3D数据的预处理和多层次特征提取。

🖼️ 关键图片

📊 实验亮点

在多个空间推理基准测试中,SpatialReasoner的表现显著优于Gemini 2.0,提升幅度达到9.2%。此外,该模型在处理新类型的3D空间推理问题时展现出更强的泛化能力,标志着在该领域的重要进展。

🎯 应用场景

SpatialReasoner在机器人导航、增强现实和自动驾驶等领域具有广泛的应用潜力。通过提升3D空间推理能力,该模型能够更好地理解和处理复杂的空间关系,从而推动相关技术的发展和应用。未来,该研究可能促进更智能的交互系统和自主决策能力的实现。

📄 摘要(原文)

Despite recent advances on multi-modal models, 3D spatial reasoning remains a challenging task for state-of-the-art open-source and proprietary models. Recent studies explore data-driven approaches and achieve enhanced spatial reasoning performance by fine-tuning models on 3D-related visual question-answering data. However, these methods typically perform spatial reasoning in an implicit manner and often fail on questions that are trivial to humans, even with long chain-of-thought reasoning. In this work, we introduce SpatialReasoner, a novel large vision-language model (LVLM) that addresses 3D spatial reasoning with explicit 3D representations shared between multiple stages--3D perception, computation, and reasoning. Explicit 3D representations provide a coherent interface that supports advanced 3D spatial reasoning and improves the generalization ability to novel question types. Furthermore, by analyzing the explicit 3D representations in multi-step reasoning traces of SpatialReasoner, we study the factual errors and identify key shortcomings of current LVLMs. Results show that our SpatialReasoner achieves improved performance on a variety of spatial reasoning benchmarks, outperforming Gemini 2.0 by 9.2% on 3DSRBench, and generalizes better when evaluating on novel 3D spatial reasoning questions. Our study bridges the 3D parsing capabilities of prior visual foundation models with the powerful reasoning abilities of large language models, opening new directions for 3D spatial reasoning.