EmbodiedOcc++: Boosting Embodied 3D Occupancy Prediction with Plane Regularization and Uncertainty Sampler

Authors: Hao Wang, Xiaobao Wei, Xiaoan Zhang, Jianing Li, Chengyu Bai, Ying Li, Ming Lu, Wenzhao Zheng, Shanghang Zhang

Category: cs.CV

Published: 2025-04-13 (updated: 2025-07-25)

Comments: Accepted by ACM MM 2025

🔗 Code/Project: GITHUB

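💡 One-Sentence Summary

Proposes EmbodiedOcc++ to address the underuse of geometric features in indoor 3D occupancy prediction.

🎯 Matched Area: Pillar 7: Motion Retargeting

Keywords: 3D occupancy prediction, geometry guidance, semantic awareness, Gaussian model, online perception, robot navigation, smart home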

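📋 Key Points

- The existing EmbodiedOcc framework does not fully exploit the geometric characteristics of indoor environments, which limits the accuracy of its occupancy predictions.

- EmbodiedOcc++ adds a geometry-guided refinement module and a semantic-aware uncertainty sampler, which together improve the geometric consistency of Gaussian updates.

- Experiments on the EmbodiedOcc-ScanNet benchmark show that EmbodiedOcc++ achieves state-of-the-art performance across multiple settings, with improved edge accuracy and better preservation of geometric detail.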

📝 Abstract (Summary)

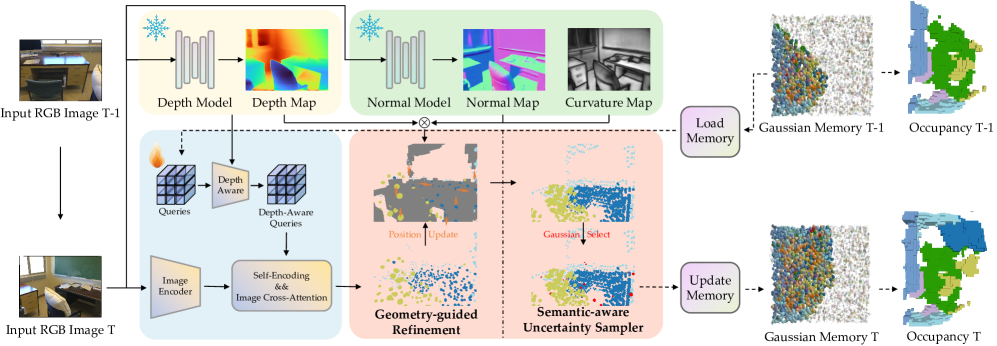

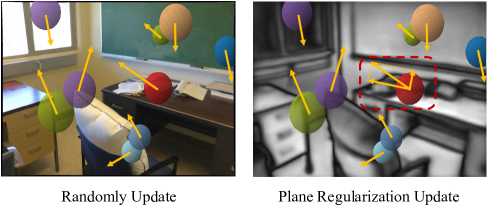

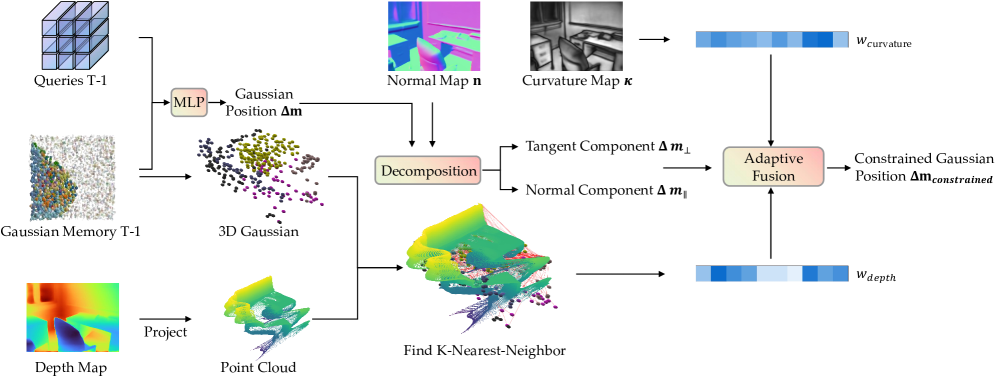

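Online 3D occupancy prediction provides a comprehensive spatial understanding of embodied environments. The EmbodiedOcc framework uses 3D semantic Gaussians for progressive indoor occupancy prediction, but it overlooks the geometric characteristics of indoor environments, which consist mainly of planar structures. This paper proposes EmbodiedOcc++, which enhances the original framework with two key innovations: a Geometry-guided Refinement Module (GRM) that constrains Gaussian updates through plane regularization, and a Semantic-aware Uncertainty Sampler (SUS) that makes updates in the overlapping regions between consecutive frames more effective. GRM determines its regularization weight adaptively from curvature-based and depth-based constraints, so that semantic Gaussians align accurately with planar surfaces. Comprehensive experiments show that EmbodiedOcc++ achieves state-of-the-art performance across different settings, improving edge accuracy and retaining more geometric detail while remaining computationally efficient.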

🔬 Method Details

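Problem definition: the existing EmbodiedOcc framework neglects geometric characteristics in indoor 3D occupancy prediction, and its prediction accuracy suffers as a result. This paper aims to close that gap.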

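Core idea: introduce a Geometry-guided Refinement Module (GRM) and a Semantic-aware Uncertainty Sampler (SUS). GRM places geometric constraints on Gaussian updates, and SUS makes updates in overlapping regions more effective; together they improve prediction accuracy and geometric consistency.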

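Architecture: the overall design consists of two main modules. GRM constrains Gaussian updates through plane regularization, and SUS adaptively selects which Gaussians to update. Incoming frames are processed through these two modules, and the output is a more accurate occupancy prediction.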

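Key innovation: the main technical contribution is the combination of GRM and SUS. GRM adaptively adjusts the regularization weight using curvature and depth constraints, so that Gaussians align accurately with planes while remaining free to adapt in complex regions. SUS makes updates more effective in the regions where consecutive frames overlap. The essential difference from prior methods is that geometric characteristics are taken into account explicitly.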
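The source gives no formulas for GRM, so the following is only a minimal sketch of one plausible reading of "regularizing the position update to align with surface normals". It assumes per-Gaussian normals, curvature, and depth are already available. The function names, the exponential weighting, and the `curv_scale` and `depth_scale` parameters are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np


def adaptive_plane_weight(curvature, depth, curv_scale=0.1, depth_scale=5.0):
    """Hypothetical weight in (0, 1]: close to 1 on flat, nearby surfaces,
    decaying toward 0 where curvature or depth is large."""
    curvature = np.asarray(curvature, dtype=float)
    depth = np.asarray(depth, dtype=float)
    return np.exp(-curvature / curv_scale) * np.exp(-depth / depth_scale)


def regularize_position_update(delta, normals, weight):
    """Blend a raw position update with its projection onto the surface normal.

    weight = 1 keeps only the component along the normal;
    weight = 0 leaves the update unchanged.
    """
    delta = np.asarray(delta, dtype=float)        # (N, 3) raw updates
    normals = np.asarray(normals, dtype=float)    # (N, 3) surface normals
    normals = normals / np.linalg.norm(normals, axis=-1, keepdims=True)
    weight = np.asarray(weight, dtype=float)[..., None]  # (N, 1) for broadcasting
    along_normal = np.sum(delta * normals, axis=-1, keepdims=True) * normals
    return (1.0 - weight) * delta + weight * along_normal
```

In this sketch, a Gaussian on a flat nearby wall receives a weight near 1 and its update is confined to the normal direction, while a Gaussian in a highly curved or distant region receives a weight near 0 and is updated almost freely.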

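Key design: in GRM, the regularization weight is computed adaptively from curvature and depth information; in SUS, the strategy for selecting which Gaussians to update is based on semantic information. These choices let the model maintain high geometric consistency and accuracy in complex environments.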
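The source does not specify how SUS measures uncertainty or selects Gaussians. As a rough illustration only, the sketch below assumes each Gaussian carries a semantic class distribution, uses the entropy of that distribution as its uncertainty, and samples Gaussians to update with probability proportional to it. The function names and the entropy-based criterion are assumptions made for this example.

```python
import numpy as np


def semantic_entropy(probs, eps=1e-12):
    """Shannon entropy of each Gaussian's semantic class distribution."""
    probs = np.asarray(probs, dtype=float)             # (N, C) class scores
    probs = probs / probs.sum(axis=-1, keepdims=True)  # normalize each row
    return -np.sum(probs * np.log(np.clip(probs, eps, 1.0)), axis=-1)


def sample_gaussians_to_update(probs, k, rng=None, eps=1e-6):
    """Sample up to k distinct Gaussian indices, favoring uncertain ones.

    Hypothetical selection rule: sampling probability is proportional to
    semantic entropy, plus a small eps so every Gaussian can be chosen.
    """
    rng = np.random.default_rng() if rng is None else rng
    uncertainty = semantic_entropy(probs) + eps
    p = uncertainty / uncertainty.sum()
    k = min(k, p.shape[0])
    return rng.choice(p.shape[0], size=k, replace=False, p=p)
```

Sampling rather than taking a hard top-k is a design choice of this sketch: it still lets confidently labeled Gaussians be revisited occasionally when a new view overlaps them.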

🖼️ Key Figures

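📊 Experimental Highlights

On the EmbodiedOcc-ScanNet benchmark, EmbodiedOcc++ achieves state-of-the-art performance across different settings. It improves edge accuracy and retains more geometric detail while remaining computationally efficient, which supports its use in online embodied perception.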

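🎯 Application Scenarios

Potential application areas include smart homes, robot navigation, and augmented reality. By improving the accuracy of 3D occupancy prediction, EmbodiedOcc++ can give robots and smart devices a more reliable understanding of their surroundings, improving how they operate and interact in complex environments. In the longer term, the method may help advance more capable automation systems.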

📄 Abstract (Original)

Online 3D occupancy prediction provides a comprehensive spatial understanding of embodied environments. While the innovative EmbodiedOcc framework utilizes 3D semantic Gaussians for progressive indoor occupancy prediction, it overlooks the geometric characteristics of indoor environments, which are primarily characterized by planar structures. This paper introduces EmbodiedOcc++, enhancing the original framework with two key innovations: a Geometry-guided Refinement Module (GRM) that constrains Gaussian updates through plane regularization, along with a Semantic-aware Uncertainty Sampler (SUS) that enables more effective updates in overlapping regions between consecutive frames. GRM regularizes the position update to align with surface normals. It determines the adaptive regularization weight using curvature-based and depth-based constraints, allowing semantic Gaussians to align accurately with planar surfaces while adapting in complex regions. To effectively improve geometric consistency from different views, SUS adaptively selects proper Gaussians to update. Comprehensive experiments on the EmbodiedOcc-ScanNet benchmark demonstrate that EmbodiedOcc++ achieves state-of-the-art performance across different settings. Our method demonstrates improved edge accuracy and retains more geometric details while ensuring computational efficiency, which is essential for online embodied perception. The code will be released at: https://github.com/PKUHaoWang/EmbodiedOcc2.