DELT: A Simple Diversity-driven EarlyLate Training for Dataset Distillation

Authors: Zhiqiang Shen, Ammar Sherif, Zeyuan Yin, Shitong Shao

Categories: cs.CV, cs.AI, cs.LG

Published: 2024-11-29 (updated: 2025-06-06)

Comments: CVPR 2025

🔗 Code/Project: https://github.com/VILA-Lab/DELT

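💡 One-sentence summary

Proposes DELT to address the lack of diversity in dataset distillation.

🎯 Matched area: Pillar 2: RL Algorithms and Architecture (RL & Architecture)

Keywords: dataset distillation, diversity-driven, local optimization, image synthesis, deep learning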

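📋 Key points

- Existing batch-to-global matching methods produce synthetic samples with limited diversity, so images within the same class show little variation.

- The proposed DELT scheme partitions the synthesis into subtasks and optimizes each one locally, which increases the diversity of the synthetic images and improves training efficiency.

- Experiments on multiple datasets show that DELT outperforms the previous state of the art while reducing synthesis time by up to 39.3%.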

📝 Abstract (translated)

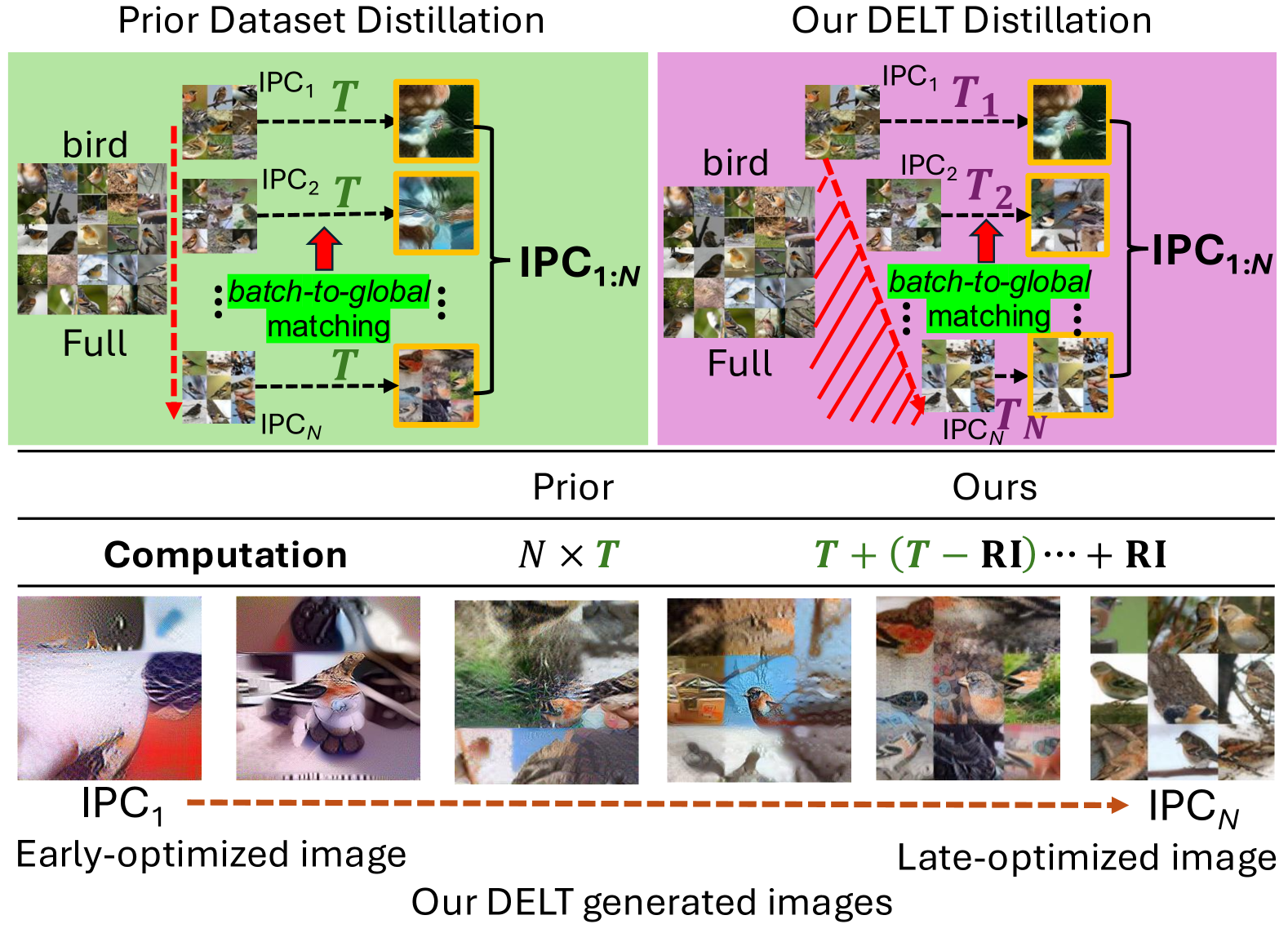

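Recent progress in dataset distillation follows two main directions: batch-to-batch matching and batch-to-global matching. The latter works well on large-scale datasets but suffers from limited diversity among synthetic samples. This paper proposes a Diversity-driven EarlyLate Training (DELT) scheme that partitions the predefined IPC (images per class) samples into smaller subtasks and applies local optimization to each, increasing the diversity of the synthetic images. On CIFAR, Tiny-ImageNet, and ImageNet-1K, the method improves on the previous state of the art by 2% to 5% on average and reduces synthesis time by up to 39.3%.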

🔬 Method details

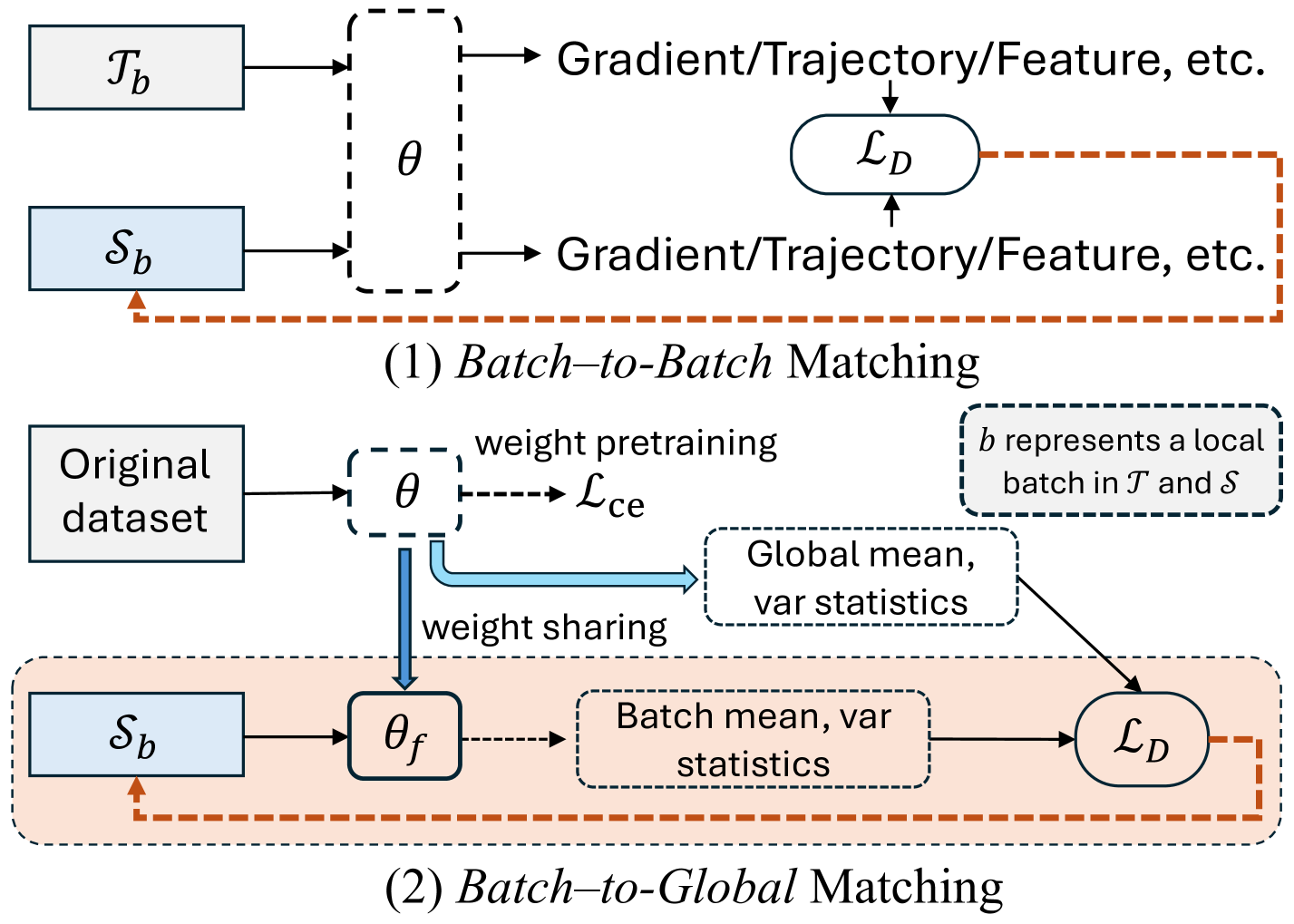

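Problem definition: The paper targets the lack of diversity among synthetic samples in dataset distillation. In existing batch-to-global matching methods, samples are optimized independently while the same global supervision signals are reused across synthetic images, so images within a class end up looking alike, which hurts the generalization of models trained on them.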
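To make "diversity within a class" concrete, the sketch below computes the mean pairwise cosine distance between the feature vectors of one class. This metric is an assumption chosen for illustration; the summary does not say how the paper measures diversity, and the names `cosine_similarity` and `intra_class_diversity` are hypothetical.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def intra_class_diversity(features):
    """Mean pairwise cosine distance (1 - similarity) within one class.

    Hypothetical metric for illustration only. A value near 0.0 means
    all samples point in almost the same direction in feature space.
    """
    pairs = list(combinations(features, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - cosine_similarity(a, b) for a, b in pairs) / len(pairs)


# Toy 2-D "features": three near-identical samples vs. three spread-out ones.
homogeneous = [[1.0, 0.0], [1.0, 0.01], [1.0, -0.01]]
diverse = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

print(intra_class_diversity(homogeneous) < intra_class_diversity(diverse))
# → True
```

A near-zero score for a class would correspond to the homogenization described above; in practice the vectors would come from a feature extractor rather than hand-written lists.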

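Core idea: DELT partitions the predefined IPC samples into smaller subtasks and optimizes each subset locally, so that the subsets are distilled into distributions from distinct phases. This reduces the uniformity induced by a single unified optimization process.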
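The partitioning step can be sketched as splitting the IPC sample indices of one class into a few contiguous groups. This is a minimal illustration, not the authors' code: the function name `partition_ipc` and the near-equal contiguous split are assumptions, since the summary does not specify how subtasks are formed.

```python
def partition_ipc(ipc, num_subtasks):
    """Split sample indices 0..ipc-1 into contiguous, near-equal subtasks.

    Hypothetical helper: the contiguous near-equal split is an assumption.
    """
    if not 1 <= num_subtasks <= ipc:
        raise ValueError("num_subtasks must be between 1 and ipc")
    base, extra = divmod(ipc, num_subtasks)
    subtasks, start = [], 0
    for i in range(num_subtasks):
        # The first `extra` subtasks each take one additional sample.
        size = base + (1 if i < extra else 0)
        subtasks.append(list(range(start, start + size)))
        start += size
    return subtasks


print(partition_ipc(10, 3))
# → [[0, 1, 2, 3], [4, 5, 6], [7, 8, 9]]
```

Each returned group would then be handed to its own local optimization run.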

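Technical framework: The overall pipeline has three main stages: sample partitioning, local optimization, and global merging. The samples are first divided into subtasks, each subtask is then optimized independently, and the optimized results are finally combined into the synthetic set for the full task.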
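The three stages can be illustrated with a deliberately tiny toy, in which each "image" is a single number pulled toward a shared target by gradient descent. Everything here is an assumption made for illustration: the scalar samples, the quadratic loss, the round-robin partition, and in particular the reading of "distinct phases" as different step budgets per subtask. It is not the DELT implementation.

```python
import random


def local_optimize(samples, target, steps, lr=0.1):
    """Gradient descent on 0.5 * (x - target)^2 for each scalar sample."""
    out = []
    for x in samples:
        for _ in range(steps):
            x -= lr * (x - target)  # gradient of the toy loss is (x - target)
        out.append(x)
    return out


def toy_pipeline(ipc, steps_per_subtask, target=0.0, seed=0):
    """Partition -> per-subtask optimization -> merge, on scalar 'images'."""
    rng = random.Random(seed)
    init = [rng.uniform(5.0, 10.0) for _ in range(ipc)]
    k = len(steps_per_subtask)
    # Stage 1: round-robin partition of the ipc samples into k subtasks.
    subtasks = [init[i::k] for i in range(k)]
    # Stage 2: optimize each subtask separately with its own step budget
    # (assumed interpretation of "distinct phases").
    optimized = [
        local_optimize(s, target, n) for s, n in zip(subtasks, steps_per_subtask)
    ]
    # Stage 3: merge the subtask results into one synthetic set.
    return [x for subset in optimized for x in subset]


def spread(values):
    """Range of the values, used here as a crude diversity proxy."""
    return max(values) - min(values)


uniform = toy_pipeline(ipc=6, steps_per_subtask=[40, 40, 40])
staggered = toy_pipeline(ipc=6, steps_per_subtask=[40, 20, 5])
print(spread(uniform) < spread(staggered))
# → True
```

With identical budgets every sample collapses toward the same target, while staggered budgets leave the subsets at different stages of convergence, which is the intuition behind the diversity gain. The staggered run also uses fewer total steps.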

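Key innovation: DELT's main contribution is a diversity-driven local optimization strategy that markedly increases the diversity of the synthetic samples. This differs fundamentally from conventional global optimization, which tends to homogenize samples.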

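Key design: DELT uses a flexible subtask partitioning strategy and introduces diversity-related weights into the loss function so that samples reflect distributions from different phases. The network architecture is kept consistent with existing methods to allow direct comparison.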

🖼️ Key figures

📊 Experimental highlights

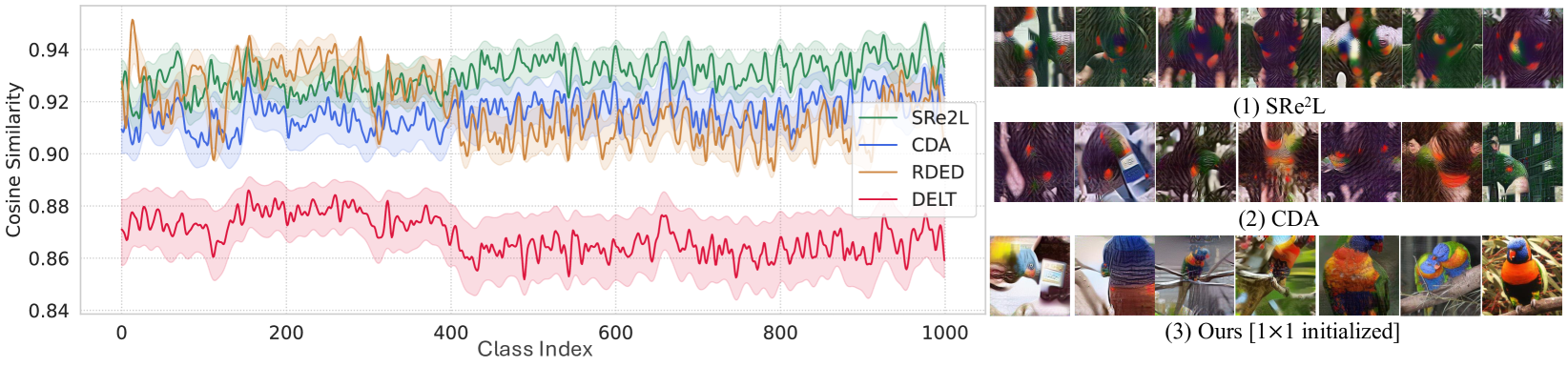

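Experiments on CIFAR, Tiny-ImageNet, and ImageNet-1K show that DELT improves performance by 2% to 5% on average while reducing synthesis time by up to 39.3%. These results indicate clear gains in both training efficiency and sample diversity.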
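A reduction in synthesis time is plausible if some subtasks are optimized for fewer iterations than a uniform baseline. The arithmetic below shows how such a saving would be computed under assumed conditions: equal-size subtasks, a linear budget schedule, and made-up iteration counts. The helpers `linear_budgets` and `relative_saving` are hypothetical, and the numbers are not the paper's settings and do not reproduce the reported 39.3%.

```python
def linear_budgets(num_subtasks, max_iters, min_iters):
    """Evenly spaced iteration budgets from max_iters down to min_iters."""
    if num_subtasks == 1:
        return [max_iters]
    step = (max_iters - min_iters) / (num_subtasks - 1)
    return [round(max_iters - i * step) for i in range(num_subtasks)]


def relative_saving(budgets, baseline_iters):
    """Fraction of iterations saved vs. giving every subtask baseline_iters.

    Assumes all subtasks contain the same number of samples.
    """
    baseline_total = baseline_iters * len(budgets)
    return 1.0 - sum(budgets) / baseline_total


# Hypothetical numbers, chosen only to make the arithmetic easy to follow.
budgets = linear_budgets(num_subtasks=5, max_iters=4000, min_iters=1000)
print(budgets)
# → [4000, 3250, 2500, 1750, 1000]
print(relative_saving(budgets, baseline_iters=4000))
# → 0.375
```

Here the staggered schedule uses 12,500 iterations in total against 20,000 for the uniform baseline, a 37.5% saving in this toy setting.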

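🎯 Application scenarios

DELT has broad potential in dataset distillation, particularly for large-scale image classification. By increasing sample diversity, it can improve model generalization and may be useful in areas such as computer vision, autonomous driving, and robotics.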

📄 Abstract (original)

Recent advances in dataset distillation have led to solutions in two main directions. The conventional batch-to-batch matching mechanism is ideal for small-scale datasets and includes bi-level optimization methods on models and syntheses, such as FRePo, RCIG, and RaT-BPTT, as well as other methods like distribution matching, gradient matching, and weight trajectory matching. Conversely, batch-to-global matching typifies decoupled methods, which are particularly advantageous for large-scale datasets. This approach has garnered substantial interest within the community, as seen in SRe$^2$L, G-VBSM, WMDD, and CDA. A primary challenge with the second approach is the lack of diversity among syntheses within each class since samples are optimized independently and the same global supervision signals are reused across different synthetic images. In this study, we propose a new Diversity-driven EarlyLate Training (DELT) scheme to enhance the diversity of images in batch-to-global matching with less computation. Our approach is conceptually simple yet effective, it partitions predefined IPC samples into smaller subtasks and employs local optimizations to distill each subset into distributions from distinct phases, reducing the uniformity induced by the unified optimization process. These distilled images from the subtasks demonstrate effective generalization when applied to the entire task. We conduct extensive experiments on CIFAR, Tiny-ImageNet, ImageNet-1K, and its sub-datasets. Our approach outperforms the previous state-of-the-art by 2$\sim$5% on average across different datasets and IPCs (images per class), increasing diversity per class by more than 5% while reducing synthesis time by up to 39.3% for enhancing the training efficiency. Code is available at: https://github.com/VILA-Lab/DELT.