ERNIE 5.0 Technical Report

Authors: Haifeng Wang, Hua Wu, Tian Wu, Yu Sun, Jing Liu, Dianhai Yu, Yanjun Ma, Jingzhou He, Zhongjun He, Dou Hong, Qiwen Liu, Shuohuan Wang, Junyuan Shang, Zhenyu Zhang, Yuchen Ding, Jinle Zeng, Jiabin Yang, Liang Shen, Ruibiao Chen, Weichong Yin, Siyu Ding, Dai Dai, Shikun Feng, Siqi Bao, Bolei He, Yan Chen, Zhenyu Jiao, Ruiqing Zhang, Zeyu Chen, Qingqing Dang, Kaipeng Deng, Jiajun Jiang, Enlei Gong, Guoxia Wang, Yanlin Sha, Yi Liu, Yehan Zheng, Weijian Xu, Jiaxiang Liu, Zengfeng Zeng, Yingqi Qu, Zhongli Li, Zhengkun Zhang, Xiyang Wang, Zixiang Xu, Xinchao Xu, Zhengjie Huang, Dong Wang, Bingjin Chen, Yue Chang, Xing Yuan, Shiwei Huang, Qiao Zhao, Xinzhe Ding, Shuangshuang Qiao, Baoshan Yang, Bihong Tang, Bin Li, Bingquan Wang, Binhan Tang, Binxiong Zheng, Bo Cui, Bo Ke, Bo Zhang, Bowen Zhang, Boyan Zhang, Boyang Liu, Caiji Zhang, Can Li, Chang Xu, Chao Pang, Chao Zhang, Chaoyi Yuan, Chen Chen, Cheng Cui, Chenlin Yin, Chun Gan, Chunguang Chai, Chuyu Fang, Cuiyun Han, Dan Zhang, Danlei Feng, Danxiang Zhu, Dong Sun, Dongbo Li, Dongdong Li, Dongdong Liu, Dongxue Liu, Fan Ding, Fan Hu, Fan Li, Fan Mo, Feisheng Wu, Fengwei Liu, Gangqiang Hu, Gaofeng Lu, Gaopeng Yong, Gexiao Tian, Guan Wang, Guangchen Ni, Guangshuo Wu, Guanzhong Wang, Guihua Liu, Guishun Li, Haibin Li, Haijian Liang, Haipeng Ming, Haisu Wang, Haiyang Lu, Haiye Lin, Han Zhou, Hangting Lou, Hanwen Du, Hanzhi Zhang, Hao Chen, Hao Du, Hao Liu, Hao Zhou, Haochen Jiang, Haodong Tian, Haoshuang Wang, Haozhe Geng, Heju Yin, Hong Chen, Hongchen Xue, Hongen Liu, Honggeng Zhang, Hongji Xu, Hongwei Chen, Hongyang Zhang, Hongyuan Zhang, Hua Lu, Huan Chen, Huan Wang, Huang He, Hui Liu, Hui Zhong, Huibin Ruan, Jiafeng Lu, Jiage Liang, Jiahao Hu, Jiahao Hu, Jiajie Yang, Jialin Li, Jian Chen, Jian Wu, Jianfeng Yang, Jianguang Jiang, Jianhua Wang, Jianye Chen, Jiaodi Liu, Jiarui Zhou, Jiawei Lv, Jiaxin Zhou, Jiaxuan Liu, Jie Han, Jie Sun, Jiefan Fang, Jihan Liu, Jihua Liu, Jing Hu, Jing Qian, Jing Yan, Jingdong Du, Jingdong Wang, Jingjing Wu, Jingyong Li, Jinheng Wang, Jinjin Li, Jinliang Lu, Jinlin Yu, Jinnan Liu, Jixiang Feng, Jiyi Huang, Jiyuan Zhang, Jun Liang, Jun Xia, Jun Yu, Junda Chen, Junhao Feng, Junhong Xiang, Junliang Li, Kai Liu, Kailun Chen, Kairan Su, Kang Hu, Kangkang Zhou, Ke Chen, Ke Wei, Kui Huang, Kun Wu, Kunbin Chen, Lei Han, Lei Sun, Lei Wen, Linghui Meng, Linhao Yu, Liping Ouyang, Liwen Zhang, Longbin Ji, Longzhi Wang, Meng Sun, Meng Tian, Mengfei Li, Mengqi Zeng, Mengyu Zhang, Ming Hong, Mingcheng Zhou, Mingming Huang, Mingxin Chen, Mingzhu Cai, Naibin Gu, Nemin Qiu, Nian Wang, Peng Qiu, Peng Zhao, Pengyu Zou, Qi Wang, Qi Xin, Qian Wang, Qiang Zhu, Qianhui Luo, Qianwei Yang, Qianyue He, Qifei Wu, Qinrui Li, Qiwen Bao, Quan Zhang, Quanxiang Liu, Qunyi Xie, Rongrui Zhan, Rufeng Dai, Rui Peng, Ruian Liu, Ruihao Xu, Ruijie Wang, Ruixi Zhang, Ruixuan Liu, Runsheng Shi, Ruting Wang, Senbo Kang, Shan Lu, Shaofei Yu, Shaotian Gong, Shenwei Hu, Shifeng Zheng, Shihao Guo, Shilong Fan, Shiqin Liu, Shiwei Gu, Shixi Zhang, Shuai Yao, Shuang Zhang, Shuangqiao Liu, Shuhao Liang, Shuwei He, Shuwen Yang, Sijun He, Siming Dai, Siming Wu, Siyi Long, Songhe Deng, Suhui Dong, Suyin Liang, Teng Hu, Tianchan Xu, Tianliang Lv, Tianmeng Yang, Tianyi Wei, Tiezhu Gao, Ting Sun, Ting Zhang, Tingdan Luo, Wei He, Wei Luan, Wei Yin, Wei Zhang, Wei Zhou, Weibao Gong, Weibin Li, Weicheng Huang, Weichong Dang, Weiguo Zhu, Weilong Zhang, Weiqi Tan, Wen Huang, Wenbin Chang, Wenjing Du, Wenlong Miao, Wenpei Luo, Wenquan Wu, Xi Shi, Xi Zhao, Xiang Gao, Xiangguo Zhang, Xiangrui Yu, Xiangsen Wang, Xiangzhe Wang, Xianlong Luo, Xianying Ma, Xiao Tan, Xiaocong Lin, Xiaofei Wang, Xiaofeng Peng, Xiaofeng Wu, Xiaojian Xu, Xiaolan Yuan, Xiaopeng Cui, Xiaotian Han, Xiaoxiong Liu, Xiaoxu Fei, Xiaoxuan Wu, Xiaoyu Wang, Xiaoyu Zhang, Xin Sun, Xin Wang, Xinhui Huang, Xinming Zhu, Xintong Yu, Xinyi Xu, Xinyu Wang, Xiuxian Li, XuanShi Zhu, Xue Xu, Xueying Lv, Xuhong Li, Xulong Wei, Xuyi Chen, Yabing Shi, Yafeng Wang, Yamei Li, Yan Liu, Yanfu Cheng, Yang Gao, Yang Liang, Yang Wang, Yang Wang, Yang Yang, Yanlong Liu, Yannian Fu, Yanpeng Wang, Yanzheng Lin, Yao Chen, Yaozong Shen, Yaqian Han, Yehua Yang, Yekun Chai, Yesong Wang, Yi Song, Yichen Zhang, Yifei Wang, Yifeng Guo, Yifeng Kou, Yilong Chen, Yilong Guo, Yiming Wang, Ying Chen, Ying Wang, Yingsheng Wu, Yingzhan Lin, Yinqi Yang, Yiran Xing, Yishu Lei, Yixiang Tu, Yiyan Chen, Yong Zhang, Yonghua Li, Yongqiang Ma, Yongxing Dai, Yongyue Zhang, Yu Ran, Yu Sun, Yu-Wen Michael Zhang, Yuang Liu, Yuanle Liu, Yuanyuan Zhou, Yubo Zhang, Yuchen Han, Yucheng Wang, Yude Gao, Yuedong Luo, Yuehu Dong, Yufeng Hu, Yuhui Cao, Yuhui Yun, Yukun Chen, Yukun Gao, Yukun Li, Yumeng Zhang, Yun Fan, Yun Ma, Yunfei Zhang, Yunshen Xie, Yuping Xu, Yuqin Zhang, Yuqing Liu, Yurui Li, Yuwen Wang, Yuxiang Lu, Zefeng Cai, Zelin Zhao, Zelun Zhang, Zenan Lin, Zezhao Dong, Zhaowu Pan, Zhaoyu Liu, Zhe Dong, Zhe Zhang, Zhen Zhang, Zhengfan Wu, Zhengrui Wei, Zhengsheng Ning, Zhenxing Li, Zhenyu Li, Zhenyu Qian, Zhenyun Li, Zhi Li, Zhichao Chen, Zhicheng Dong, Zhida Feng, Zhifan Feng, Zhihao Deng, Zhijin Yu, Zhiyang Chen, Zhonghui Zheng, Zhuangzhuang Guo, Zhujun Zhang, Zhuo Sun, Zichang Liu, Zihan Lin, Zihao Huang, Zihe Zhu, Ziheng Zhao, Ziping Chen, Zixuan Zhu, Ziyang Xu, Ziyi Liang, Ziyuan Gao

Category: cs.CL

Published: 2026-02-04

💡 One-Sentence Summary

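ERNIE 5.0: among publicly disclosed models, the first production-scale trillion-parameter unified multimodal autoregressive model that supports both understanding and generation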

🎯 Matched Areas: Pillar 2: RL Algorithms & Architecture; Pillar 9: Embodied Foundation Models

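Keywords: multimodal learning, autoregressive models, mixture of experts, elastic training, unified model, cross-modal generation, Transformer, deep learning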

📋 Key Points

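- Existing methods struggle to handle multiple modalities within a single framework, and deployment must balance performance against resource constraints.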

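- ERNIE 5.0 combines a unified autoregressive architecture with a mixture-of-experts (MoE) model and uses modality-agnostic expert routing to support both multimodal understanding and generation.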

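- Through elastic training, the model learns a family of sub-models that trade off performance, model size, and inference latency under different resource constraints, and it achieves strong, balanced performance across modalities.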

📝 Abstract (Chinese Version, Translated)

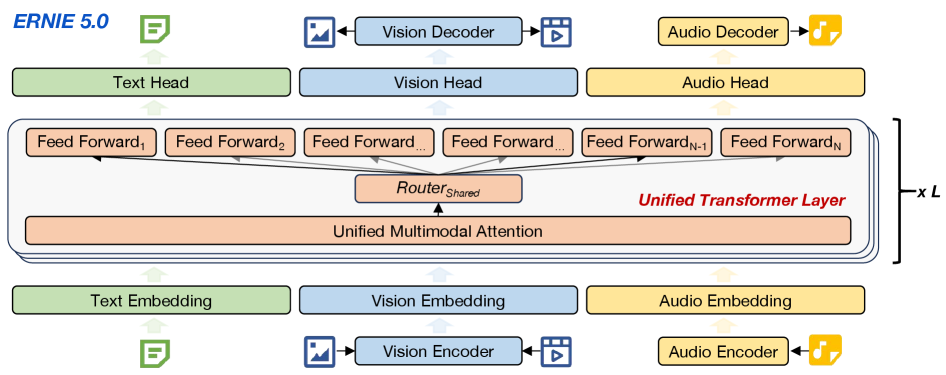

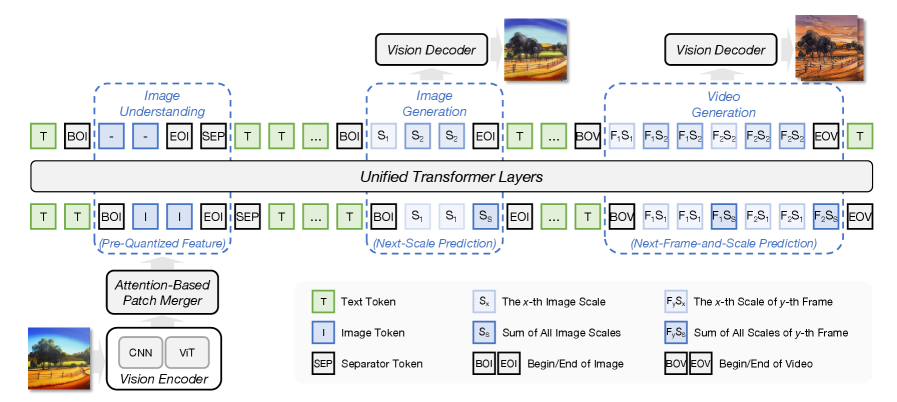

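This report introduces ERNIE 5.0, a natively autoregressive foundation model designed for unified multimodal understanding and generation across text, image, video, and audio. All modalities are trained from scratch under a unified next-group-of-tokens prediction objective, on an ultra-sparse mixture-of-experts (MoE) architecture with modality-agnostic expert routing. To address the practical challenges of large-scale deployment under diverse resource constraints, ERNIE 5.0 adopts a novel elastic training paradigm: within a single pre-training run, the model learns a family of sub-models with varying depths, expert capacities, and routing sparsity, enabling flexible trade-offs among performance, model size, and inference latency in memory- or time-constrained scenarios. In addition, we systematically address the challenges of scaling reinforcement learning to unified foundation models, ensuring efficient and stable post-training under the ultra-sparse MoE architecture and diverse multimodal settings. Extensive experiments show that ERNIE 5.0 achieves strong and balanced performance across multiple modalities. To the best of our knowledge, among publicly disclosed models, ERNIE 5.0 is the first production-scale trillion-parameter unified autoregressive model that supports both multimodal understanding and generation. To facilitate further research, we present detailed visualizations of modality-agnostic expert routing in the unified model, together with a comprehensive empirical analysis of elastic training, to offer insights to the community.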

🔬 Method Details

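Problem definition: Existing large models are typically designed for a specific modality, which makes unified cross-modal understanding and generation difficult. Deploying such models in resource-constrained environments also requires trading off model size, inference speed, and performance. Existing approaches struggle to combine multimodal capability and deployment efficiency within a single framework.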

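Core idea: ERNIE 5.0 builds a single autoregressive model that processes text, image, video, and audio data together. It uses a mixture-of-experts (MoE) architecture with modality-agnostic expert routing: the router selects experts for each token from the token's representation rather than from an explicit modality label, so all modalities draw on one shared expert pool, which keeps multimodal learning efficient. In addition, the elastic training paradigm lets the model learn, within a single pre-training run, a family of sub-models of different sizes and complexities to fit different resource constraints.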
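To make "modality-agnostic" routing concrete, here is a minimal top-k MoE router sketch in plain Python. Everything in it (the names `route_token` and `router_weights`, the dimensions, the random weights) is a hypothetical illustration, not ERNIE 5.0's implementation. What it shows is that the router receives only a token's hidden vector and no modality argument, so a text token and an image token are routed by the same function and the same weights.

```python
import math
import random
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(hidden: List[float],
                router_weights: List[List[float]],
                top_k: int) -> List[Tuple[int, float]]:
    """Return (expert_id, gate) pairs for one token (hypothetical sketch).

    Only the hidden vector is used; there is no modality input, which is what
    makes this router modality-agnostic. Gates are the softmax probabilities
    of the selected experts, renormalized to sum to 1.
    """
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in router_weights]
    probs = softmax(logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


# Usage: one shared router for tokens from any modality (random toy weights).
rng = random.Random(0)
d_model, num_experts = 8, 16
router = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(num_experts)]
text_token = [rng.gauss(0.0, 1.0) for _ in range(d_model)]
image_token = [rng.gauss(0.0, 1.0) for _ in range(d_model)]
for name, tok in (("text", text_token), ("image", image_token)):
    print(name, route_token(tok, router, top_k=2))
```

A production router would typically also enforce per-expert capacity limits and add a balancing loss; both are omitted here to keep the routing decision itself visible.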

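Technical framework: The overall architecture of ERNIE 5.0 is an autoregressive Transformer with mixture-of-experts (MoE) layers. Inputs from every modality are first converted into a unified token representation and then processed by the Transformer layers. In each MoE layer, the routing mechanism selects experts based on the content of each token, independent of its modality. Finally, the model predicts the next group of tokens, which drives generation in every modality. Elastic training operates during pre-training: by varying the model's depth, expert capacity, and routing sparsity, a single run learns a family of sub-models.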
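The next-group-of-tokens objective can be illustrated with a small sketch. The fixed `group_size`, the helper names `make_group_targets` and `group_nll`, and the dict-based probability distributions are hypothetical assumptions: the summary does not say how groups are formed for each modality. With `group_size=1` the construction reduces to ordinary next-token prediction.

```python
import math
from typing import Dict, List, Sequence, Tuple


def make_group_targets(tokens: Sequence[int],
                       group_size: int) -> List[Tuple[List[int], List[int]]]:
    """Split a sequence into consecutive groups; each group is predicted from
    all tokens before it. The last group may be shorter than group_size."""
    if group_size < 1:
        raise ValueError("group_size must be >= 1")
    pairs = []
    for start in range(group_size, len(tokens), group_size):
        context = list(tokens[:start])
        target = list(tokens[start:start + group_size])
        pairs.append((context, target))
    return pairs


def group_nll(pred_dists: List[Dict[int, float]], target: List[int]) -> float:
    """Mean negative log-likelihood of one target group, where pred_dists[j]
    is the model's distribution for the j-th token of the group."""
    if len(pred_dists) != len(target):
        raise ValueError("need one distribution per target token")
    return -sum(math.log(d[t]) for d, t in zip(pred_dists, target)) / len(target)


# Usage: groups of 3 over a toy sequence, then the loss for one group.
pairs = make_group_targets([1, 2, 3, 4, 5, 6, 7], group_size=3)
for context, target in pairs:
    print(context, "->", target)
loss = group_nll([{4: 0.5, 9: 0.5}, {5: 0.25, 8: 0.75}], [4, 5])
print(round(loss, 4))
```

The loss is averaged per token so that groups of different lengths contribute on the same scale; how ERNIE 5.0 actually weights tokens within a group is not stated here.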

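Key innovations: (1) a unified multimodal autoregressive architecture that handles data from multiple modalities in one model; (2) a modality-agnostic expert routing mechanism that selects experts from token content rather than from modality labels, so all modalities share one expert pool; (3) an elastic training paradigm that lets the model learn a family of sub-models of different sizes and complexities to fit different resource constraints.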
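One way to picture elastic training is a sampler that picks a sub-model configuration at each pre-training step. This is a hedged sketch: the search-space values, the `SubModelConfig` and `sample_submodel` names, and the choice to keep the full model in the mix with probability `full_model_prob` are all hypothetical, borrowed from common weight-sharing sub-network training rather than taken from the report. Only the three knobs (depth, expert capacity, routing sparsity) come from the text, and how a capacity fraction is realized inside the experts is left open.

```python
import random
from dataclasses import dataclass

# Hypothetical search space; these values are illustrative, not ERNIE 5.0's.
DEPTH_CHOICES = (24, 36, 48)                 # Transformer layers kept
EXPERT_CAPACITY_CHOICES = (0.5, 0.75, 1.0)   # fraction of full expert capacity kept
TOP_K_CHOICES = (2, 4, 8)                    # experts activated per token


@dataclass(frozen=True)
class SubModelConfig:
    depth: int
    expert_capacity: float
    top_k: int


FULL_MODEL = SubModelConfig(max(DEPTH_CHOICES),
                            max(EXPERT_CAPACITY_CHOICES),
                            max(TOP_K_CHOICES))


def sample_submodel(rng: random.Random, full_model_prob: float = 0.5) -> SubModelConfig:
    """Pick the sub-model trained at this step. Keeping the full model in the
    mix means the largest configuration keeps receiving updates."""
    if rng.random() < full_model_prob:
        return FULL_MODEL
    return SubModelConfig(
        depth=rng.choice(DEPTH_CHOICES),
        expert_capacity=rng.choice(EXPERT_CAPACITY_CHOICES),
        top_k=rng.choice(TOP_K_CHOICES),
    )


# Usage: an 8-step schedule; every sampled sub-model shares the full model's weights.
rng = random.Random(0)
schedule = [sample_submodel(rng) for _ in range(8)]
for step, cfg in enumerate(schedule):
    print(step, cfg)
```

Because all sub-models index into one set of weights, a single run yields the whole family; at deployment one simply fixes a configuration instead of sampling.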

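Key design: ERNIE 5.0 uses an ultra-sparse MoE architecture to reduce computational cost, since each token activates only a small fraction of the experts. The expert router relies on learnable routing weights and dynamically selects experts from each token's content, independent of modality. Elastic training is realized by varying the model's depth (the number of Transformer layers), expert capacity (the parameter count of each expert), and routing sparsity (the number of experts selected per token). The loss is the standard cross-entropy loss, with a regularization term added to encourage load balancing across experts.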
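The summary does not specify which load-balancing regularizer ERNIE 5.0 uses. As a stand-in, the sketch below implements the well-known Switch Transformer-style auxiliary loss, `alpha * N * sum_i f_i * P_i`, extended to top-k assignments; the function name and the `alpha` default are hypothetical. It grows when routing collapses onto a few experts.

```python
from typing import List


def load_balancing_loss(router_probs: List[List[float]],
                        assignments: List[List[int]],
                        alpha: float = 0.01) -> float:
    """Switch Transformer-style auxiliary loss: alpha * N * sum_i f_i * P_i.

    router_probs[t][i]: router softmax probability of expert i for token t.
    assignments[t]:     expert ids selected for token t (top-k).
    f_i: fraction of all expert assignments that went to expert i.
    P_i: mean router probability of expert i over the batch.
    """
    num_tokens = len(router_probs)
    num_experts = len(router_probs[0])
    counts = [0] * num_experts
    for chosen in assignments:
        for e in chosen:
            counts[e] += 1
    total = sum(counts)
    f = [c / total for c in counts]
    p = [sum(row[i] for row in router_probs) / num_tokens for i in range(num_experts)]
    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p))


# Usage: 4 tokens, 4 experts, top-1 routing (alpha=1.0 to expose the raw value).
uniform = [[0.25] * 4 for _ in range(4)]
balanced = load_balancing_loss(uniform, [[0], [1], [2], [3]], alpha=1.0)
collapsed = load_balancing_loss([[1.0, 0.0, 0.0, 0.0]] * 4, [[0]] * 4, alpha=1.0)
print(balanced, collapsed)  # → 1.0 4.0
```

The balanced batch scores 1.0 while full collapse onto one expert scores 4.0, so adding this term to the cross-entropy loss pushes the router toward spreading tokens across experts.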

🖼️ Key Figures

📊 Experimental Highlights

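ERNIE 5.0 delivers strong and balanced performance on multiple multimodal tasks. For example, on image captioning, ERNIE 5.0 outperforms the previous best models. Elastic training also lets ERNIE 5.0 adapt to different resource constraints: when compute is limited, a smaller sub-model can be selected to obtain faster inference at the cost of a small drop in performance.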
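Choosing a smaller sub-model under a compute budget can be sketched as picking the largest configuration whose per-token active parameters fit the budget. The per-layer sizes `ATTN_PARAMS_M` and `EXPERT_PARAMS_M`, the candidate grid, and the use of active parameters as a proxy for cost and quality are hypothetical assumptions for illustration; real latency also depends on hardware, memory bandwidth, and expert parallelism.

```python
from dataclasses import dataclass
from itertools import product
from typing import Iterable, Optional


@dataclass(frozen=True)
class SubModel:
    depth: int              # Transformer layers
    expert_capacity: float  # fraction of full expert capacity
    top_k: int              # experts activated per token


# Hypothetical per-layer sizes in millions of parameters, for illustration only.
ATTN_PARAMS_M = 50.0
EXPERT_PARAMS_M = 40.0


def active_params_m(m: SubModel) -> float:
    """Parameters touched per token: attention plus the top_k activated experts, per layer."""
    return m.depth * (ATTN_PARAMS_M + m.top_k * m.expert_capacity * EXPERT_PARAMS_M)


def pick_submodel(budget_m: float, candidates: Iterable[SubModel]) -> Optional[SubModel]:
    """Largest candidate (by active parameters) that fits the budget, or None."""
    feasible = [m for m in candidates if active_params_m(m) <= budget_m]
    return max(feasible, key=active_params_m, default=None)


CANDIDATES = [SubModel(d, c, k) for d, c, k in product((24, 36, 48), (0.5, 1.0), (2, 4, 8))]

# Usage: tighter budgets select smaller, faster sub-models.
for budget in (3000.0, 6000.0, 20000.0):
    print(budget, pick_submodel(budget, CANDIDATES))
```

Active parameters per token is only a first-order proxy; a deployment would normally benchmark the candidate sub-models on target hardware before fixing one.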

🎯 Application Scenarios

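ERNIE 5.0 has broad potential applications, including multimodal content generation (such as generating images or videos from text descriptions), cross-modal information retrieval, intelligent customer service, and other tasks that require understanding and generating data in multiple modalities. The model can lower the cost of developing multimodal applications and improve user experience, and could help extend AI to more domains.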

📄 Abstract (Original)

In this report, we introduce ERNIE 5.0, a natively autoregressive foundation model designed for unified multimodal understanding and generation across text, image, video, and audio. All modalities are trained from scratch under a unified next-group-of-tokens prediction objective, based on an ultra-sparse mixture-of-experts (MoE) architecture with modality-agnostic expert routing. To address practical challenges in large-scale deployment under diverse resource constraints, ERNIE 5.0 adopts a novel elastic training paradigm. Within a single pre-training run, the model learns a family of sub-models with varying depths, expert capacities, and routing sparsity, enabling flexible trade-offs among performance, model size, and inference latency in memory- or time-constrained scenarios. Moreover, we systematically address the challenges of scaling reinforcement learning to unified foundation models, thereby guaranteeing efficient and stable post-training under ultra-sparse MoE architectures and diverse multimodal settings. Extensive experiments demonstrate that ERNIE 5.0 achieves strong and balanced performance across multiple modalities. To the best of our knowledge, among publicly disclosed models, ERNIE 5.0 represents the first production-scale realization of a trillion-parameter unified autoregressive model that supports both multimodal understanding and generation. To facilitate further research, we present detailed visualizations of modality-agnostic expert routing in the unified model, alongside comprehensive empirical analysis of elastic training, aiming to offer profound insights to the community.