Nemotron 3 Nano: Open, Efficient Mixture-of-Experts Hybrid Mamba-Transformer Model for Agentic Reasoning

Authors: NVIDIA, Aaron Blakeman, Aaron Grattafiori, Aarti Basant, Abhibha Gupta, Abhinav Khattar, Adi Renduchintala, Aditya Vavre, Akanksha Shukla, Akhiad Bercovich, Aleksander Ficek, Aleksandr Shaposhnikov, Alex Kondratenko, Alexander Bukharin, Alexandre Milesi, Ali Taghibakhshi, Alisa Liu, Amelia Barton, Ameya Sunil Mahabaleshwarkar, Amir Klein, Amit Zuker, Amnon Geifman, Amy Shen, Anahita Bhiwandiwalla, Andrew Tao, Ann Guan, Anubhav Mandarwal, Arham Mehta, Ashwath Aithal, Ashwin Poojary, Asif Ahamed, Asma Kuriparambil Thekkumpate, Ayush Dattagupta, Banghua Zhu, Bardiya Sadeghi, Barnaby Simkin, Ben Lanir, Benedikt Schifferer, Besmira Nushi, Bilal Kartal, Bita Darvish Rouhani, Boris Ginsburg, Brandon Norick, Brandon Soubasis, Branislav Kisacanin, Brian Yu, Bryan Catanzaro, Carlo del Mundo, Chantal Hwang, Charles Wang, Cheng-Ping Hsieh, Chenghao Zhang, Chenhan Yu, Chetan Mungekar, Chintan Patel, Chris Alexiuk, Christopher Parisien, Collin Neale, Damon Mosk-Aoyama, Dan Su, Dane Corneil, Daniel Afrimi, Daniel Rohrer, Daniel Serebrenik, Daria Gitman, Daria Levy, Darko Stosic, David Mosallanezhad, Deepak Narayanan, Dhruv Nathawani, Dima Rekesh, Dina Yared, Divyanshu Kakwani, Dong Ahn, Duncan Riach, Dusan Stosic, Edgar Minasyan, Edward Lin, Eileen Long, Eileen Peters Long, Elena Lantz, Ellie Evans, Elliott Ning, Eric Chung, Eric Harper, Eric Tramel, Erick Galinkin, Erik Pounds, Evan Briones, Evelina Bakhturina, Faisal Ladhak, Fay Wang, Fei Jia, Felipe Soares, Feng Chen, Ferenc Galko, Frankie Siino, Gal Hubara Agam, Ganesh Ajjanagadde, Gantavya Bhatt, Gargi Prasad, George Armstrong, Gerald Shen, Gorkem Batmaz, Grigor Nalbandyan, Haifeng Qian, Harsh Sharma, Hayley Ross, Helen Ngo, Herman Sahota, Hexin Wang, Himanshu Soni, Hiren Upadhyay, Huizi Mao, Huy C Nguyen, Huy Q Nguyen, Iain Cunningham, Ido Shahaf, Igor Gitman, Ilya Loshchilov, Ivan Moshkov, Izzy Putterman, Jan Kautz, Jane Polak Scowcroft, Jared Casper, Jatin Mitra, Jeffrey Glick, Jenny Chen, Jesse Oliver, Jian Zhang, 
Jiaqi Zeng, Jie Lou, Jimmy Zhang, Jining Huang, Joey Conway, Joey Guman, John Kamalu, Johnny Greco, Jonathan Cohen, Joseph Jennings, Joyjit Daw, Julien Veron Vialard, Junkeun Yi, Jupinder Parmar, Kai Xu, Kan Zhu, Kari Briski, Katherine Cheung, Katherine Luna, Keshav Santhanam, Kevin Shih, Kezhi Kong, Khushi Bhardwaj, Krishna C. Puvvada, Krzysztof Pawelec, Kumar Anik, Lawrence McAfee, Laya Sleiman, Leon Derczynski, Li Ding, Lucas Liebenwein, Luis Vega, Maanu Grover, Maarten Van Segbroeck, Maer Rodrigues de Melo, Makesh Narsimhan Sreedhar, Manoj Kilaru, Maor Ashkenazi, Marc Romeijn, Mark Cai, Markus Kliegl, Maryam Moosaei, Matvei Novikov, Mehrzad Samadi, Melissa Corpuz, Mengru Wang, Meredith Price, Michael Boone, Michael Evans, Miguel Martinez, Mike Chrzanowski, Mohammad Shoeybi, Mostofa Patwary, Nabin Mulepati, Natalie Hereth, Nave Assaf, Negar Habibi, Neta Zmora, Netanel Haber, Nicola Sessions, Nidhi Bhatia, Nikhil Jukar, Nikki Pope, Nikolai Ludwig, Nima Tajbakhsh, Nirmal Juluru, Oleksii Hrinchuk, Oleksii Kuchaiev, Olivier Delalleau, Oluwatobi Olabiyi, Omer Ullman Argov, Ouye Xie, Parth Chadha, Pasha Shamis, Pavlo Molchanov, Pawel Morkisz, Peter Dykas, Peter Jin, Pinky Xu, Piotr Januszewski, Pranav Prashant Thombre, Prasoon Varshney, Pritam Gundecha, Qing Miao, Rabeeh Karimi Mahabadi, Ran El-Yaniv, Ran Zilberstein, Rasoul Shafipour, Rich Harang, Rick Izzo, Rima Shahbazyan, Rishabh Garg, Ritika Borkar, Ritu Gala, Riyad Islam, Roger Waleffe, Rohit Watve, Roi Koren, Ruoxi Zhang, Russell J. 
Hewett, Ryan Prenger, Ryan Timbrook, Sadegh Mahdavi, Sahil Modi, Samuel Kriman, Sanjay Kariyappa, Sanjeev Satheesh, Saori Kaji, Satish Pasumarthi, Sean Narentharen, Sean Narenthiran, Seonmyeong Bak, Sergey Kashirsky, Seth Poulos, Shahar Mor, Shanmugam Ramasamy, Shantanu Acharya, Shaona Ghosh, Sharath Turuvekere Sreenivas, Shelby Thomas, Shiqing Fan, Shreya Gopal, Shrimai Prabhumoye, Shubham Pachori, Shubham Toshniwal, Shuoyang Ding, Siddharth Singh, Simeng Sun, Smita Ithape, Somshubra Majumdar, Soumye Singhal, Stefania Alborghetti, Stephen Ge, Sugam Dipak Devare, Sumeet Kumar Barua, Suseella Panguluri, Suyog Gupta, Sweta Priyadarshi, Syeda Nahida Akter, Tan Bui, Teodor-Dumitru Ene, Terry Kong, Thanh Do, Tijmen Blankevoort, Tom Balough, Tomer Asida, Tomer Bar Natan, Tugrul Konuk, Twinkle Vashishth, Udi Karpas, Ushnish De, Vahid Noorozi, Vahid Noroozi, Venkat Srinivasan, Venmugil Elango, Vijay Korthikanti, Vitaly Kurin, Vitaly Lavrukhin, Wanli Jiang, Wasi Uddin Ahmad, Wei Du, Wei Ping, Wenfei Zhou, Will Jennings, William Zhang, Wojciech Prazuch, Xiaowei Ren, Yashaswi Karnati, Yejin Choi, Yev Meyer, Yi-Fu Wu, Yian Zhang, Ying Lin, Yonatan Geifman, Yonggan Fu, Yoshi Subara, Yoshi Suhara, Yubo Gao, Zach Moshe, Zhen Dong, Zihan Liu, Zijia Chen, Zijie Yan

Categories: cs.CL, cs.AI, cs.LG

Published: 2025-12-23

💡 One-Sentence Summary

Introduces Nemotron 3 Nano, an efficient Mixture-of-Experts hybrid Mamba-Transformer model for agentic reasoning.

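🎯 Matched Area: Pillar 2: RL Algorithms and Architecture (RL & Architecture)

Keywords: Mixture-of-Experts, Mamba architecture, Transformer architecture, efficient inference, long-context processing, agentic applications, language models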

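📋 Key Points

- Existing language models face challenges in reasoning quality and inference efficiency, especially when processing long contexts.

- Nemotron 3 Nano adopts a Mixture-of-Experts hybrid Mamba-Transformer architecture aimed at improving inference efficiency and accuracy.

- According to the abstract, Nemotron 3 Nano achieves up to 3.3x higher inference throughput than similarly sized open models while also being more accurate on popular benchmarks.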

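📝 Abstract (Translated)

This paper presents Nemotron 3 Nano 30B-A3B, a Mixture-of-Experts hybrid Mamba-Transformer language model. Nemotron 3 Nano was pretrained on 25 trillion text tokens, including more than 3 trillion new unique tokens that were not part of the Nemotron 2 data, followed by supervised fine-tuning and large-scale reinforcement learning across diverse environments. It achieves better accuracy than the previous-generation Nemotron 2 Nano while activating less than half of the parameters per forward pass. Compared with similarly sized open models such as GPT-OSS-20B and Qwen3-30B-A3B-Thinking-2507, it achieves up to 3.3x higher inference throughput while also being more accurate on popular benchmarks. Nemotron 3 Nano demonstrates enhanced agentic, reasoning, and chat abilities and supports context lengths of up to 1M tokens. Both the pretrained Nemotron 3 Nano 30B-A3B Base checkpoint and the post-trained Nemotron 3 Nano 30B-A3B checkpoint are released on Hugging Face.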

🔬 Method Details

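Problem definition: Existing large language models struggle with inference efficiency and long-context processing. In a standard Transformer, the cost of self-attention grows quadratically with sequence length, which makes long sequences expensive to process. A further key question is how to reduce compute cost while preserving model accuracy.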
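To make the scaling argument concrete, here is a minimal sketch that counts work items rather than measuring anything. It assumes full self-attention scores every token pair (n × n) and that a recurrent scan performs one state update per token; the function names are hypothetical and the counts ignore constant factors such as hidden size and head count.

```python
def attention_pair_count(seq_len: int) -> int:
    """Token pairs scored by full self-attention: n * n."""
    return seq_len * seq_len


def recurrent_step_count(seq_len: int) -> int:
    """State updates performed by a recurrent scan: one per token."""
    return seq_len


# Compare how the two counts grow as the context gets longer.
for n in (1_000, 100_000, 1_000_000):
    pairs = attention_pair_count(n)
    steps = recurrent_step_count(n)
    print(f"n={n:>9,}  attention pairs={pairs:.1e}  "
          f"recurrent steps={steps:.1e}  ratio={pairs // steps:,}")
```

The ratio between the two counts equals the sequence length itself, so the gap widens as the context grows toward the 1M-token range mentioned in the abstract.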

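Core idea: Nemotron 3 Nano combines the strengths of Mamba and the Transformer, pairing Mamba's efficient sequence modeling with the Transformer's global context modeling inside a Mixture-of-Experts model. With the MoE mechanism, only a subset of the parameters is activated on each forward pass, which lowers compute cost and speeds up inference.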

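Technical framework: Nemotron 3 Nano combines a hybrid Mamba-Transformer backbone with sparse Mixture-of-Experts (MoE) layers. The abstract does not spell out the layer layout, so the following describes the general flow of an MoE model rather than the exact design. Input text is first converted to vectors by an embedding layer. In an MoE layer, a routing network (router) decides which experts to activate for each token, the activated experts process the input in parallel, and their outputs are combined as a weighted sum to produce the layer output. The model is pretrained on 25 trillion tokens and then optimized with supervised fine-tuning and reinforcement learning.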
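The routing flow described above can be sketched in plain Python. This is an illustrative top-k router under stated assumptions, not the model's actual implementation: the number of experts, the value of k, the softmax over selected logits, and the names `top_k_route` and `moe_forward` are all hypothetical, and the toy experts simply scale their input.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts; weights are a softmax over
    the selected logits only, so they sum to 1."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, router_rows, experts, k):
    """Route one token vector x through k experts and mix their outputs."""
    # One router logit per expert: dot product of x with that expert's row.
    logits = [sum(w * v for w, v in zip(row, x)) for row in router_rows]
    out = [0.0] * len(x)
    chosen = []
    for idx, weight in top_k_route(logits, k):
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * y_i for o, y_i in zip(out, y)]
        chosen.append(idx)
    return out, chosen


# Toy setup (all values hypothetical): four experts that scale the input.
experts = [lambda v, s=s: [s * t for t in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_rows = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]

out, chosen = moe_forward([0.5, 2.0], router_rows, experts, k=2)
print("experts used:", chosen)
print("output:", out)
```

Only two of the four toy experts run for this token, which is the source of the compute savings: the unselected experts contribute parameters to the model but no work to this forward pass.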

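Key innovation: Nemotron 3 Nano combines the Mamba and Transformer architectures and adds a Mixture-of-Experts mechanism. Mamba is well suited to processing long sequences efficiently, while the Transformer is well suited to modeling global context. The MoE mechanism lets the model activate only a subset of its parameters at inference time, which significantly reduces compute cost.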
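As a rough intuition for why a state-space layer handles long sequences cheaply, the sketch below runs a scalar linear recurrence h_t = a·h_{t-1} + b·x_t. It is a deliberately simplified toy: Mamba uses input-dependent (selective) parameters and a much larger state, and the coefficients `a` and `b` here are arbitrary assumptions chosen for illustration.

```python
def linear_scan(xs, a=0.9, b=0.1):
    """Run h_t = a * h_{t-1} + b * x_t over xs and return every h_t.

    Only the single scalar state h is carried between steps, so memory
    use does not grow with the number of tokens already processed.
    """
    h = 0.0
    outputs = []
    for x in xs:
        h = a * h + b * x
        outputs.append(h)
    return outputs


states = linear_scan([1.0, 1.0, 1.0])
print(states)
```

Each step touches only the current input and the carried state, in contrast to attention, where each new token attends over all previous tokens.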

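Key design: The model has about 30B total parameters; following the common MoE naming convention, the "A3B" suffix indicates roughly 3B parameters active per token. The pretraining data contains 25 trillion tokens, including more than 3 trillion new unique tokens relative to Nemotron 2. The model is further optimized with supervised fine-tuning and reinforcement learning; the abstract does not specify the loss functions or training recipe. Context lengths of up to 1M tokens are supported.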
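A back-of-the-envelope calculation shows what sparse activation buys. It reads "30B-A3B" as roughly 30B total and 3B active parameters, which is an assumption based on the model name, and uses the common rule of thumb of about 2 FLOPs per active parameter per token, which ignores attention and other overheads. The exact parameter counts are not given in the abstract.

```python
TOTAL_PARAMS = 30e9   # nominal, assumed from "30B" in the model name
ACTIVE_PARAMS = 3e9   # nominal, assumed from "A3B" in the model name

# Share of the parameters that take part in one forward pass.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Rule-of-thumb forward cost: about 2 FLOPs per active parameter per token.
flops_per_token_sparse = 2 * ACTIVE_PARAMS
flops_per_token_dense = 2 * TOTAL_PARAMS

print(f"active fraction: {active_fraction:.0%}")
print(f"sparse forward cost: {flops_per_token_sparse:.1e} FLOPs/token")
print(f"dense forward cost:  {flops_per_token_dense:.1e} FLOPs/token")
```

Under these nominal numbers, a token costs about one tenth of what a dense model of the same total size would need, although all parameters still have to be held in memory.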

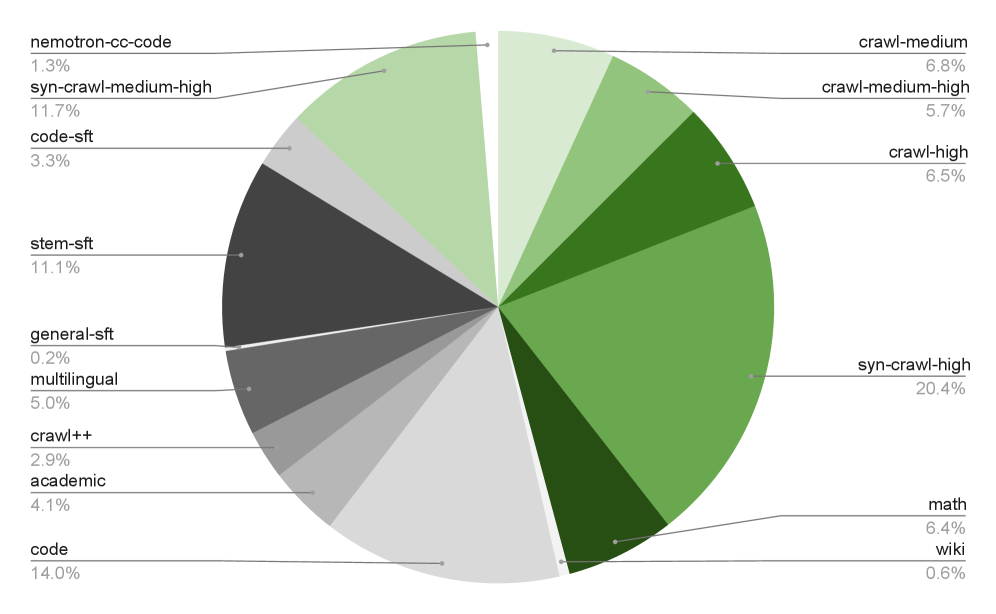

🖼️ Key Figures

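📊 Experimental Highlights

According to the abstract, Nemotron 3 Nano achieves up to 3.3x higher inference throughput than similarly sized open models such as GPT-OSS-20B and Qwen3-30B-A3B-Thinking-2507, while also being more accurate on popular benchmarks. The model supports context lengths of up to 1M tokens, which allows it to handle longer documents and conversations.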

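🎯 Application Scenarios

Nemotron 3 Nano can be applied in scenarios that need efficient inference and long-context processing, such as customer-service assistants, dialogue systems, document summarization, code generation, and agentic applications. Its inference efficiency and long-context support are intended to help it better understand user intent and produce more accurate and relevant responses, improving the user experience.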

📄 Abstract (Original)

We present Nemotron 3 Nano 30B-A3B, a Mixture-of-Experts hybrid Mamba-Transformer language model. Nemotron 3 Nano was pretrained on 25 trillion text tokens, including more than 3 trillion new unique tokens over Nemotron 2, followed by supervised fine tuning and large-scale RL on diverse environments. Nemotron 3 Nano achieves better accuracy than our previous generation Nemotron 2 Nano while activating less than half of the parameters per forward pass. It achieves up to 3.3x higher inference throughput than similarly-sized open models like GPT-OSS-20B and Qwen3-30B-A3B-Thinking-2507, while also being more accurate on popular benchmarks. Nemotron 3 Nano demonstrates enhanced agentic, reasoning, and chat abilities and supports context lengths up to 1M tokens. We release both our pretrained Nemotron 3 Nano 30B-A3B Base and post-trained Nemotron 3 Nano 30B-A3B checkpoints on Hugging Face.