Geometric-Mean Policy Optimization

作者: Yuzhong Zhao, Yue Liu, Junpeng Liu, Jingye Chen, Xun Wu, Yaru Hao, Tengchao Lv, Shaohan Huang, Lei Cui, Qixiang Ye, Fang Wan, Furu Wei

分类: cs.CL

发布日期: 2025-07-28 (更新: 2025-10-18)

备注: Code is available at https://github.com/callsys/GMPO

🔗 代码/项目: GITHUB

💡 一句话要点

提出几何均值策略优化以解决GRPO不稳定问题

🎯 匹配领域: 支柱九:具身大模型 (Embodied Foundation Models)

关键词: 几何均值 策略优化 异常值处理 自然语言处理 机器学习

📋 核心要点

- 现有的GRPO方法在处理具有异常重要性加权奖励的令牌时,策略更新不稳定,影响模型性能。

- GMPO通过最大化令牌级奖励的几何均值,减少对异常值的敏感性,从而提高策略优化的稳定性。

- 实验结果显示,GMPO-7B在多个数学推理基准上相较于GRPO提升了最多4.1%的平均Pass@1,表现优于许多先进方法。

📝 摘要(中文)

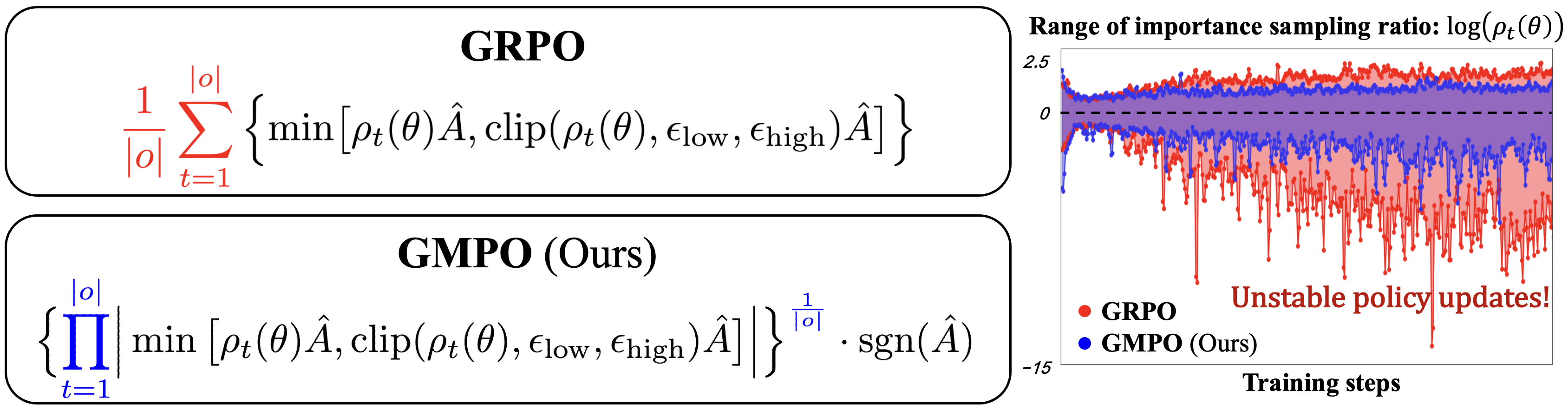

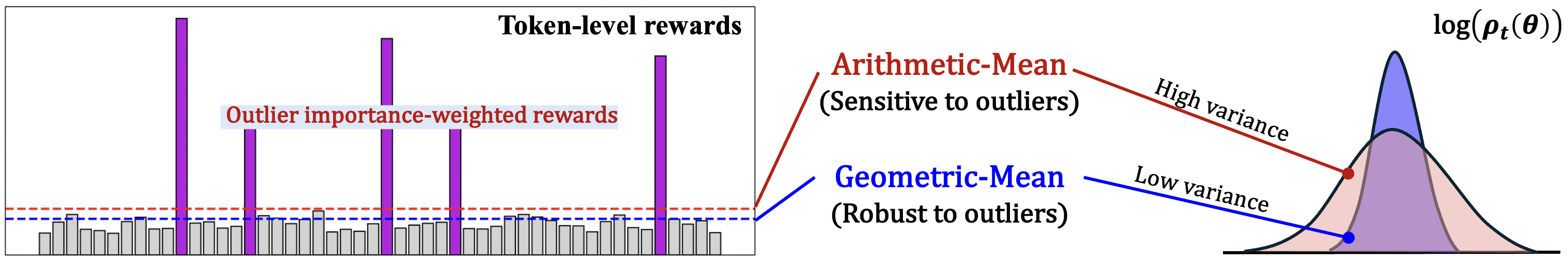

群体相对策略优化(GRPO)通过优化令牌级奖励的算术均值显著增强了大型语言模型的推理能力。然而,当面临具有异常重要性加权奖励的令牌时,GRPO会出现不稳定的策略更新。为此,本文提出几何均值策略优化(GMPO),旨在通过抑制令牌奖励的异常值来提高GRPO的稳定性。GMPO通过最大化令牌级奖励的几何均值,减少对异常值的敏感性,从而保持更稳定的重要性采样比率。实验表明,GMPO-7B在多个数学推理基准上将GRPO的平均Pass@1提高了最多4.1%。

🔬 方法详解

问题定义:本文旨在解决GRPO在处理异常重要性加权奖励时导致的策略更新不稳定问题。现有方法在面对极端重要性采样比率时,容易出现性能波动。

核心思路:GMPO的核心思想是通过优化令牌级奖励的几何均值来替代算术均值,从而降低对异常值的敏感性,保持更稳定的策略更新。

技术框架:GMPO的整体架构与GRPO相似,主要流程包括:首先计算每个令牌的奖励,然后通过几何均值进行聚合,最后基于此进行策略更新。

关键创新:GMPO的主要创新在于引入几何均值作为奖励聚合方式,这一设计使得模型在面对异常值时更加稳健,相较于GRPO具有更好的性能表现。

关键设计:在实现上,GMPO的损失函数与GRPO类似,但在计算奖励时采用几何均值,确保在训练过程中重要性采样比率的稳定性。

🖼️ 关键图片

📊 实验亮点

实验结果表明,GMPO-7B在多个数学推理基准上相较于GRPO的平均Pass@1提升了最多4.1%。这一结果不仅展示了GMPO的有效性,还超越了许多当前的先进方法,证明了其在实际应用中的潜力。

🎯 应用场景

该研究的潜在应用领域包括自然语言处理中的推理任务、对话系统以及其他需要处理不确定性和异常值的机器学习场景。GMPO的稳定性提升可能会在实际应用中显著提高模型的可靠性和性能,推动相关技术的发展。

📄 摘要(原文)

Group Relative Policy Optimization (GRPO) has significantly enhanced the reasoning capability of large language models by optimizing the arithmetic mean of token-level rewards. Unfortunately, GRPO is observed to suffer from unstable policy updates when facing tokens with outlier importance-weighted rewards, which manifest as extreme importance sampling ratios during training. In this study, we propose Geometric-Mean Policy Optimization (GMPO), with the aim to improve the stability of GRPO through suppressing token reward outliers. Instead of optimizing the arithmetic mean, GMPO maximizes the geometric mean of token-level rewards, which is inherently less sensitive to outliers and maintains a more stable range of importance sampling ratio. GMPO is plug-and-play-simply replacing GRPO's arithmetic mean with the geometric mean of token-level rewards, as the latter is inherently less sensitive to outliers. GMPO is theoretically plausible-analysis reveals that both GMPO and GRPO are weighted forms of the policy gradient while the former enjoys more stable weights, which consequently benefits policy optimization and performance. Experiments on multiple mathematical reasoning benchmarks show that GMPO-7B improves the average Pass@1 of GRPO by up to 4.1%, outperforming many state-of-the-art approaches. Code is available at https://github.com/callsys/GMPO.