Gemma 3 Technical Report

Authors: Gemma Team, Aishwarya Kamath, Johan Ferret, Shreya Pathak, Nino Vieillard, Ramona Merhej, Sarah Perrin, Tatiana Matejovicova, Alexandre Ramé, Morgane Rivière, Louis Rouillard, Thomas Mesnard, Geoffrey Cideron, Jean-bastien Grill, Sabela Ramos, Edouard Yvinec, Michelle Casbon, Etienne Pot, Ivo Penchev, Gaël Liu, Francesco Visin, Kathleen Kenealy, Lucas Beyer, Xiaohai Zhai, Anton Tsitsulin, Robert Busa-Fekete, Alex Feng, Noveen Sachdeva, Benjamin Coleman, Yi Gao, Basil Mustafa, Iain Barr, Emilio Parisotto, David Tian, Matan Eyal, Colin Cherry, Jan-Thorsten Peter, Danila Sinopalnikov, Surya Bhupatiraju, Rishabh Agarwal, Mehran Kazemi, Dan Malkin, Ravin Kumar, David Vilar, Idan Brusilovsky, Jiaming Luo, Andreas Steiner, Abe Friesen, Abhanshu Sharma, Abheesht Sharma, Adi Mayrav Gilady, Adrian Goedeckemeyer, Alaa Saade, Alex Feng, Alexander Kolesnikov, Alexei Bendebury, Alvin Abdagic, Amit Vadi, András György, André Susano Pinto, Anil Das, Ankur Bapna, Antoine Miech, Antoine Yang, Antonia Paterson, Ashish Shenoy, Ayan Chakrabarti, Bilal Piot, Bo Wu, Bobak Shahriari, Bryce Petrini, Charlie Chen, Charline Le Lan, Christopher A. Choquette-Choo, CJ Carey, Cormac Brick, Daniel Deutsch, Danielle Eisenbud, Dee Cattle, Derek Cheng, Dimitris Paparas, Divyashree Shivakumar Sreepathihalli, Doug Reid, Dustin Tran, Dustin Zelle, Eric Noland, Erwin Huizenga, Eugene Kharitonov, Frederick Liu, Gagik Amirkhanyan, Glenn Cameron, Hadi Hashemi, Hanna Klimczak-Plucińska, Harman Singh, Harsh Mehta, Harshal Tushar Lehri, Hussein Hazimeh, Ian Ballantyne, Idan Szpektor, Ivan Nardini, Jean Pouget-Abadie, Jetha Chan, Joe Stanton, John Wieting, Jonathan Lai, Jordi Orbay, Joseph Fernandez, Josh Newlan, Ju-yeong Ji, Jyotinder Singh, Kat Black, Kathy Yu, Kevin Hui, Kiran Vodrahalli, Klaus Greff, Linhai Qiu, Marcella Valentine, Marina Coelho, Marvin Ritter, Matt Hoffman, Matthew Watson, Mayank Chaturvedi, Michael Moynihan, Min Ma, Nabila Babar, Natasha Noy, Nathan Byrd, Nick Roy, Nikola Momchev, Nilay Chauhan, Noveen Sachdeva, Oskar Bunyan, Pankil Botarda, Paul Caron, Paul Kishan Rubenstein, Phil Culliton, Philipp Schmid, Pier Giuseppe Sessa, Pingmei Xu, Piotr Stanczyk, Pouya Tafti, Rakesh Shivanna, Renjie Wu, Renke Pan, Reza Rokni, Rob Willoughby, Rohith Vallu, Ryan Mullins, Sammy Jerome, Sara Smoot, Sertan Girgin, Shariq Iqbal, Shashir Reddy, Shruti Sheth, Siim Põder, Sijal Bhatnagar, Sindhu Raghuram Panyam, Sivan Eiger, Susan Zhang, Tianqi Liu, Trevor Yacovone, Tyler Liechty, Uday Kalra, Utku Evci, Vedant Misra, Vincent Roseberry, Vlad Feinberg, Vlad Kolesnikov, Woohyun Han, Woosuk Kwon, Xi Chen, Yinlam Chow, Yuvein Zhu, Zichuan Wei, Zoltan Egyed, Victor Cotruta, Minh Giang, Phoebe Kirk, Anand Rao, Kat Black, Nabila Babar, Jessica Lo, Erica Moreira, Luiz Gustavo Martins, Omar Sanseviero, Lucas Gonzalez, Zach Gleicher, Tris Warkentin, Vahab Mirrokni, Evan Senter, Eli Collins, Joelle Barral, Zoubin Ghahramani, Raia Hadsell, Yossi Matias, D. Sculley, Slav Petrov, Noah Fiedel, Noam Shazeer, Oriol Vinyals, Jeff Dean, Demis Hassabis, Koray Kavukcuoglu, Clement Farabet, Elena Buchatskaya, Jean-Baptiste Alayrac, Rohan Anil, Dmitry Lepikhin, Sebastian Borgeaud, Olivier Bachem, Armand Joulin, Alek Andreev, Cassidy Hardin, Robert Dadashi, Léonard Hussenot

Categories: cs.CL, cs.AI

Published: 2025-03-25

💡 One-Sentence Takeaway

Gemma 3: multimodal, lightweight open models with vision understanding, long context, and broad language coverage

🎯 Matched areas: Pillar 2: RL Algorithms & Architecture; Pillar 9: Embodied Foundation Models

Keywords: multimodal models, long context, lightweight models, distillation training, instruction tuning, vision understanding, open models

📋 Key Points

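- Existing lightweight open models hit performance bottlenecks on long context, multimodal input, and complex tasks such as mathematical reasoning, and their KV-cache memory consumption is high.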

- Gemma 3 adds vision understanding, wider language coverage, and longer context (128K tokens), and adopts a modified architecture that reduces KV-cache memory.

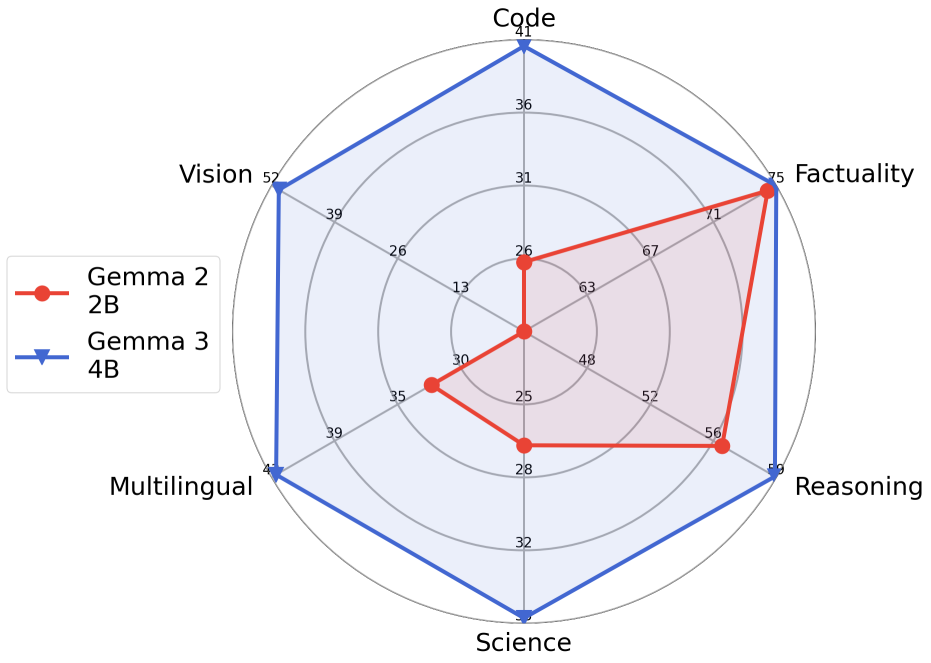

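- Experiments show that Gemma 3 outperforms Gemma 2 in both its pre-trained and instruction-tuned versions, and that Gemma3-4B-IT is competitive with Gemma2-27B-IT on some tasks.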

📝 Abstract (translated)

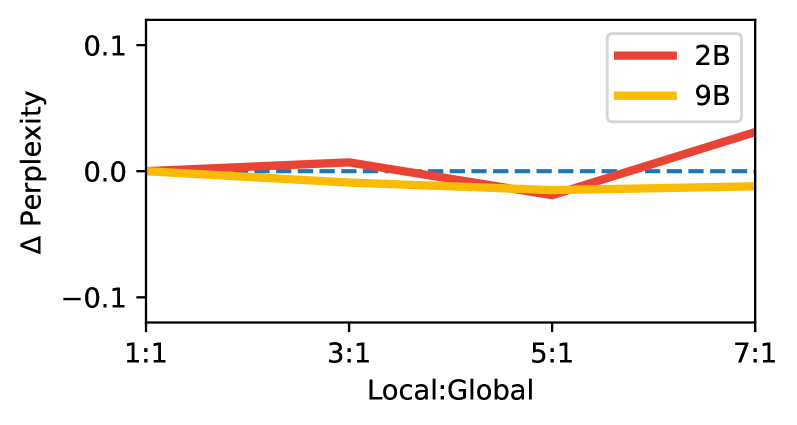

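We introduce Gemma 3, a multimodal addition to the Gemma family of lightweight open models, ranging in scale from 1 billion to 27 billion parameters. This release introduces vision understanding, wider language coverage, and longer context (at least 128K tokens). We also change the model architecture to reduce KV-cache memory, which tends to explode with long context. This is achieved by increasing the ratio of local to global attention layers and keeping the span of local attention short. The Gemma 3 models are trained with distillation and outperform Gemma 2 in both pre-trained and instruction-tuned versions. In particular, our novel post-training recipe significantly improves math, chat, instruction-following, and multilingual abilities, making Gemma3-4B-IT competitive with Gemma2-27B-IT and Gemma3-27B-IT comparable to Gemini-1.5-Pro across benchmarks. We release all our models to the community.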

🔬 Method Details

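Problem definition: When existing large language models process long text, KV-cache memory consumption grows substantially, which limits the context length the model can handle. For lightweight models there is a further challenge: achieving multimodal understanding (for example, vision understanding) and strong performance on complex tasks (such as mathematical reasoning) within a limited parameter budget.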

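Core idea: Gemma 3 combines architectural changes with an improved training strategy to handle long context, multimodal input, and complex tasks better while keeping the models lightweight. Specifically, it adjusts the ratio of local to global attention layers to reduce KV-cache memory, and it uses distillation and a post-training recipe to improve performance.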
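To make the KV-cache argument concrete, here is a minimal back-of-the-envelope sketch. It assumes that a global layer caches keys and values for every token in the context, while a local layer caches only the most recent `window` tokens. The function name, the layer ordering, and every number in the example (48 layers, 8 KV heads, head dimension 128, five local layers per global layer, a 1024-token window, 2 bytes per value) are illustrative assumptions, not values taken from this summary.

```python
def kv_cache_bytes(context_len, num_layers, num_kv_heads, head_dim,
                   local_per_global=0, window=None, bytes_per_value=2):
    """Estimate KV-cache size in bytes for a single sequence.

    local_per_global=0 means every layer uses global attention.
    Otherwise layers repeat as `local_per_global` local layers followed
    by one global layer (an assumed ordering, for illustration only).
    """
    if local_per_global > 0 and window is None:
        raise ValueError("window is required when local layers are used")
    # One key vector and one value vector per KV head, per cached token.
    per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_value
    total = 0
    for layer in range(num_layers):
        is_global = (local_per_global == 0
                     or (layer + 1) % (local_per_global + 1) == 0)
        # Local layers never need more than the last `window` tokens.
        cached_tokens = context_len if is_global else min(context_len, window)
        total += cached_tokens * per_token_per_layer
    return total


# Hypothetical configuration with a 128K-token context.
full = kv_cache_bytes(131072, 48, 8, 128)
mixed = kv_cache_bytes(131072, 48, 8, 128, local_per_global=5, window=1024)
print(full / 2**30, mixed / 2**30)  # sizes in GiB
```

Under these assumed numbers the all-global cache is 24 GiB and the mixed one is about 4.16 GiB. Nearly all of the remaining memory belongs to the few global layers, which is why raising the local-to-global ratio and shortening the local span both help.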

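Technical framework: Gemma 3 builds on the Transformer architecture with the following changes: 1) it adds vision understanding so the model can accept image input; 2) it increases the ratio of local to global attention layers and limits the span of local attention to reduce KV-cache memory; 3) it trains with distillation, transferring knowledge from a larger model to a smaller one; 4) it applies a novel post-training recipe to further improve math, chat, instruction-following, and multilingual abilities.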
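As a rough illustration of the distillation step, the sketch below computes a generic soft-target loss for a single token: the cross-entropy of the student's predicted distribution against the teacher's distribution. This is the textbook formulation, not necessarily the recipe used for Gemma 3; the function names and the `temperature` parameter are assumptions introduced for the example.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    log_sum = m + math.log(sum(math.exp(x - m) for x in scaled))
    return [x - log_sum for x in scaled]


def distillation_loss(student_logits, teacher_logits, temperature=1.0):
    """Cross-entropy of the student against the teacher's soft targets."""
    p_teacher = softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)
    return -sum(pt * ls for pt, ls in zip(p_teacher, log_p_student))


teacher = [2.0, 0.5, -1.0]          # hypothetical teacher logits
close_student = [1.9, 0.6, -1.1]    # student that nearly matches the teacher
far_student = [0.0, 0.0, 0.0]       # student that predicts a uniform distribution
print(distillation_loss(close_student, teacher))
print(distillation_loss(far_student, teacher))
```

The loss is smallest when the student reproduces the teacher's distribution exactly, so minimizing it over many tokens pushes the smaller model toward the larger model's predictions rather than toward one-hot labels alone.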

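Key innovation: The main contribution of Gemma 3 is the combination of its architecture design and its training strategy. Adjusting the ratio of local to global attention layers lowers KV-cache memory consumption and thereby supports longer context. In addition, the novel post-training recipe significantly improves performance on specific tasks, making the models competitive among lightweight models.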

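Key design: According to the abstract, KV-cache memory is reduced by increasing the ratio of local to global attention layers and keeping the span of local attention short. The abstract gives no specific parameter settings, loss functions, or details of the post-training recipe, so this summary does not cover them.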
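The interleaving described above can be sketched as two small helpers: one that lays out which layers are local and which are global, and one that builds the attention mask each kind of layer would use. This is a sketch under stated assumptions: the helper names, the ordering (local layers first within each group), and the example values (five local layers per global layer, a window of 3 tokens) are hypothetical and chosen only to keep the output readable.

```python
def layer_pattern(num_layers, local_per_global):
    """Label each layer, repeating `local_per_global` local layers then one global."""
    group = local_per_global + 1
    return ["global" if (i + 1) % group == 0 else "local"
            for i in range(num_layers)]


def attention_mask(seq_len, window=None):
    """Causal mask: mask[q][k] is True if query q may attend to key k.

    With window=None the mask is fully causal (global attention).
    With a window, each query sees only itself and the preceding
    window - 1 positions (local sliding-window attention).
    """
    return [[k <= q and (window is None or q - k < window)
             for k in range(seq_len)]
            for q in range(seq_len)]


print(layer_pattern(12, 5))
for row in attention_mask(6, window=3):
    print("".join("x" if allowed else "." for allowed in row))
```

In a local layer, keys and values older than the window are never attended to again, so they can be dropped from the cache; only global layers need to keep the full history.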

📊 Experimental Highlights

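Gemma 3 performs well across multiple benchmarks. Gemma3-4B-IT is competitive with Gemma2-27B-IT in math, chat, instruction following, and multilingual ability, and Gemma3-27B-IT is comparable to Gemini-1.5-Pro across benchmarks. These results indicate a marked improvement over Gemma 2 and a competitive position among lightweight models.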

🎯 Application Scenarios

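Gemma 3 has a wide range of potential applications, including intelligent assistants, chatbots, multimodal content understanding, machine translation, and educational tutoring. Because the models are lightweight, they can be deployed on resource-constrained hardware such as mobile devices and embedded systems. The release is expected to support the open-source community and broaden access to AI technology.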

📄 Abstract (original)

We introduce Gemma 3, a multimodal addition to the Gemma family of lightweight open models, ranging in scale from 1 to 27 billion parameters. This version introduces vision understanding abilities, a wider coverage of languages and longer context - at least 128K tokens. We also change the architecture of the model to reduce the KV-cache memory that tends to explode with long context. This is achieved by increasing the ratio of local to global attention layers, and keeping the span on local attention short. The Gemma 3 models are trained with distillation and achieve superior performance to Gemma 2 for both pre-trained and instruction finetuned versions. In particular, our novel post-training recipe significantly improves the math, chat, instruction-following and multilingual abilities, making Gemma3-4B-IT competitive with Gemma2-27B-IT and Gemma3-27B-IT comparable to Gemini-1.5-Pro across benchmarks. We release all our models to the community.