BAP v2: An Enhanced Task Framework for Instruction Following in Minecraft Dialogues

作者: Prashant Jayannavar, Liliang Ren, Marisa Hudspeth, Risham Sidhu, Charlotte Lambert, Ariel Cordes, Elizabeth Kaplan, Anjali Narayan-Chen, Julia Hockenmaier

分类: cs.CL, cs.AI

发布日期: 2025-01-18 (更新: 2025-09-23)

备注: major revision; few examples of changes: added contemporary LLMs and new SOTA model, improved readability, expanded related work, etc

💡 一句话要点

提出BAP v2以解决Minecraft对话中的指令跟随问题

🎯 匹配领域: 支柱九:具身大模型 (Embodied Foundation Models)

关键词: Minecraft 指令跟随 建筑者动作预测 空间推理 合成数据 多模态学习 人工智能

📋 核心要点

- 现有方法在Minecraft对话中面临评估标准不清晰、训练数据稀缺和模型性能瓶颈等挑战。

- 论文提出BAP v2,通过增强评估基准和生成合成数据来提升模型的空间推理能力。

- 实验结果表明,新的SOTA模型Llama-CRAFTS在BAP v2任务上取得了53.0的F1得分,相较于之前的工作提升了6分。

📝 摘要(中文)

开发能够理解语言、感知环境并在物理世界中行动的交互代理是人工智能研究的长期目标。Minecraft协作建筑任务(MCBT)为实现这一目标提供了丰富的平台。本文聚焦于建筑者动作预测(BAP)子任务,提出BAP v2以应对评估、训练数据和建模方面的关键挑战。我们定义了一个增强的评估基准,生成不同类型的合成MCBT数据,并引入新的SOTA模型Llama-CRAFTS,显著提高了模型在BAP v2任务上的表现,F1得分达53.0,展示了当前文本模型在具身任务中的空间能力。

🔬 方法详解

问题定义:本文旨在解决Minecraft对话中建筑者动作预测(BAP)任务的评估和数据稀缺问题。现有方法在处理复杂的多模态环境时,表现出空间推理能力不足,且训练数据有限,导致模型性能不佳。

核心思路:论文通过引入BAP v2,重新审视任务的评估标准,生成合成数据以增强模型的空间技能,从而提升模型在实际任务中的表现。

技术框架:整体架构包括三个主要模块:1) 增强评估基准,提供更清晰的测试集和评估指标;2) 合成数据生成,帮助模型学习基本的空间技能;3) 新的模型架构Llama-CRAFTS,利用更丰富的输入表示。

关键创新:最重要的创新在于提出了BAP v2评估框架和合成数据生成方法,这些方法有效解决了现有模型在空间推理上的瓶颈,与传统方法相比,提供了更全面的性能评估。

关键设计:在模型设计中,Llama-CRAFTS采用了更复杂的输入表示,优化了损失函数以适应多模态数据,确保模型能够更好地理解和执行指令。

🖼️ 关键图片

📊 实验亮点

实验结果显示,Llama-CRAFTS在BAP v2任务上取得了53.0的F1得分,相较于之前的工作提升了6分。这一结果不仅展示了模型在合成数据上的强大表现,也揭示了当前文本模型在空间推理方面的挑战。

🎯 应用场景

该研究的潜在应用领域包括教育、游戏开发和人机交互等。通过提升模型在复杂环境中的指令跟随能力,可以为开发更智能的虚拟助手和交互式学习工具奠定基础,未来可能推动具身人工智能的进一步发展。

📄 摘要(原文)

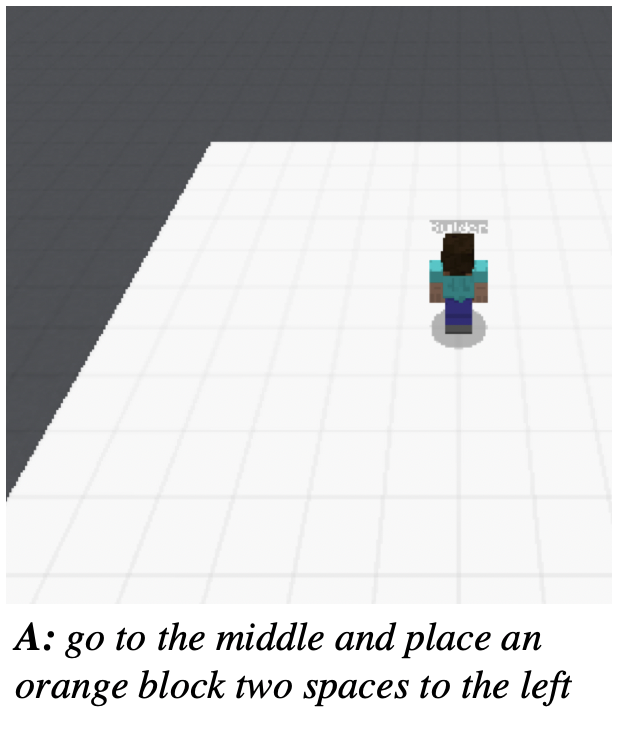

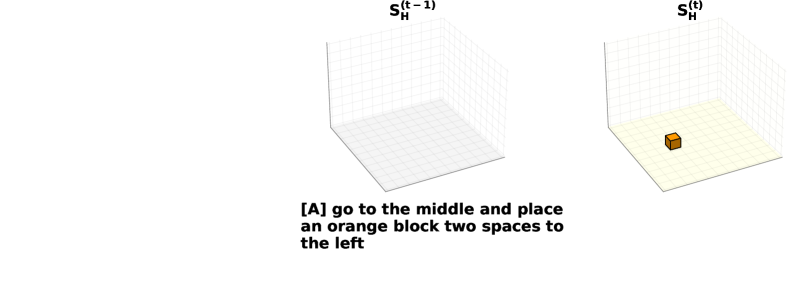

Developing interactive agents that can understand language, perceive their surroundings, and act within the physical world is a long-standing goal of AI research. The Minecraft Collaborative Building Task (MCBT) (Narayan-Chen, Jayannavar, and Hockenmaier 2019), a two-player game in which an Architect (A) instructs a Builder (B) to construct a target structure in a simulated 3D Blocks World environment, offers a rich platform to work towards this goal. In this work, we focus on the Builder Action Prediction (BAP) subtask: predicting B's actions in a multimodal game context (Jayannavar, Narayan-Chen, and Hockenmaier 2020) - a challenging testbed for grounded instruction following, with limited training data. We holistically re-examine this task and introduce BAP v2 to address key challenges in evaluation, training data, and modeling. Specifically, we define an enhanced evaluation benchmark, featuring a cleaner test set and fairer, more insightful metrics that also reveal spatial reasoning as the primary performance bottleneck. To address data scarcity and to teach models basic spatial skills, we generate different types of synthetic MCBT data. We observe that current, LLM-based SOTA models trained on the human BAP dialogues fail on these simpler, synthetic BAP ones, but show that training models on this synthetic data improves their performance across the board. We also introduce a new SOTA model, Llama-CRAFTS, which leverages richer input representations, and achieves an F1 score of 53.0 on the BAP v2 task and strong performance on the synthetic data. While this result marks a notable 6 points improvement over previous work, it also underscores the task's remaining difficulty, establishing BAP v2 as a fertile ground for future research, and providing a useful measure of the spatial capabilities of current text-only LLMs in such embodied tasks.