LogicGuard: Improving Embodied LLM agents through Temporal Logic based Critics

作者: Anand Gokhale, Vaibhav Srivastava, Francesco Bullo

分类: cs.AI, cs.CL, cs.LG, eess.SY

发布日期: 2025-07-04 (更新: 2025-09-23)

备注: Modified version of prior LTLCrit work with new robotics dataset

💡 一句话要点

提出LogicGuard以解决长时间序列规划中的决策不可靠问题

🎯 匹配领域: 支柱九:具身大模型 (Embodied Foundation Models)

关键词: 大型语言模型 时序逻辑 演员-评论家架构 长时间序列规划 决策安全性 智能体监督 机器人技术

📋 核心要点

- 现有的LLM在长时间序列规划任务中容易出现错误累积,导致决策不可靠和效率低下。

- LogicGuard通过引入基于LTL的评论家,指导LLM演员进行更安全和高效的决策,结合了语言模型的推理能力与形式逻辑的保障。

- 在家庭任务基准测试中,LogicGuard的任务完成率提高了25%,在Minecraft任务中也显著提升了效率和安全性。

📝 摘要(中文)

大型语言模型(LLMs)在零-shot和单步推理及决策问题上表现出色,但在长时间序列规划任务中,其错误会累积,导致不可靠或低效的行为。本文提出LogicGuard,一种模块化的演员-评论家架构,其中LLM演员由基于线性时序逻辑(LTL)的轨迹级LLM评论家引导。该方法结合了语言模型的推理能力与形式逻辑的保障,能够分析完整轨迹并提出新的LTL约束,从而保护演员免受未来不安全或低效行为的影响。实验结果表明,LogicGuard在家庭任务的完成率上提高了25%,并在Minecraft的长时间任务中提升了效率和安全性。

🔬 方法详解

问题定义:本文旨在解决大型语言模型在长时间序列规划任务中出现的错误累积问题,导致决策不可靠和低效的挑战。现有方法在处理复杂任务时缺乏有效的监督和约束机制。

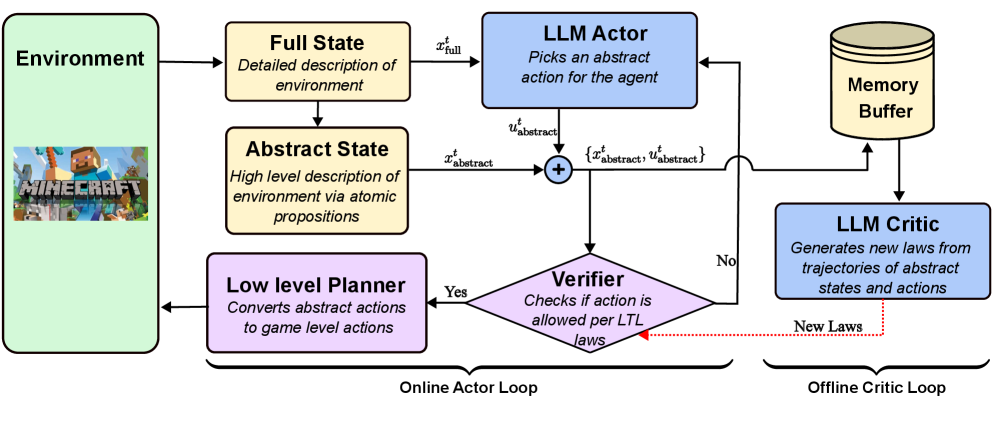

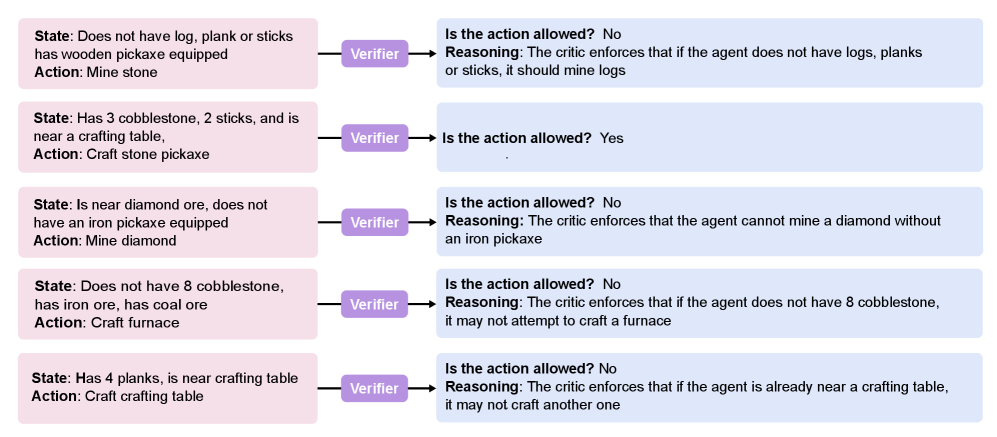

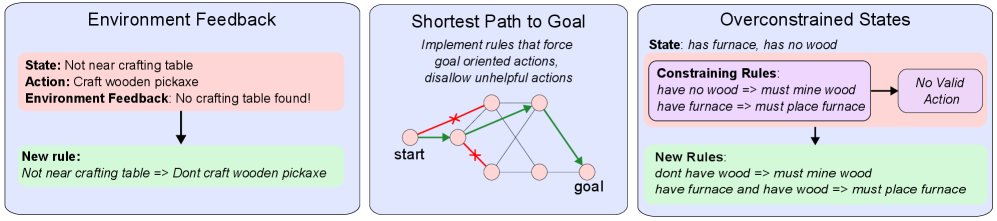

核心思路:LogicGuard的核心思路是通过引入一个基于线性时序逻辑(LTL)的评论家来指导LLM演员,从而在决策过程中提供安全性和效率保障。该设计使得演员能够在自然语言观察中选择高层次动作,同时评论家分析完整轨迹并提出新的约束。

技术框架:LogicGuard的整体架构包括两个主要模块:LLM演员和LTL评论家。演员负责从观察中选择动作,而评论家则分析轨迹并生成新的LTL约束,以防止未来的不安全行为。该框架是模型无关的,任何基于LLM的规划器都可以作为演员使用。

关键创新:最重要的创新在于将LTL引入到LLM的决策过程中,使得不同的LLM能够相互监督,从而提高决策的可靠性和安全性。这一方法与传统的单一模型决策方式有本质区别。

关键设计:在设计中,LogicGuard支持固定的安全规则和自适应学习的约束,能够根据分析的失败或次优轨迹生成新的时序逻辑规则,提升未来的决策表现。

🖼️ 关键图片

📊 实验亮点

在家庭任务的Behavior基准测试中,LogicGuard的任务完成率较基线InnerMonologue规划器提高了25%。在Minecraft的钻石采矿任务中,LogicGuard在效率和安全性方面均优于SayCan和InnerMonologue,显示出其在复杂任务中的优势。

🎯 应用场景

LogicGuard的研究成果在多个领域具有潜在应用价值,尤其是在需要长时间序列决策的机器人、自动驾驶和智能家居等场景中。通过提高决策的安全性和效率,LogicGuard能够为这些领域的智能体提供更可靠的支持,推动智能系统的进一步发展。

📄 摘要(原文)

Large language models (LLMs) have shown promise in zero-shot and single step reasoning and decision making problems, but in long horizon sequential planning tasks, their errors compound, often leading to unreliable or inefficient behavior. We introduce LogicGuard, a modular actor-critic architecture in which an LLM actor is guided by a trajectory level LLM critic that communicates through Linear Temporal Logic (LTL). Our setup combines the reasoning strengths of language models with the guarantees of formal logic. The actor selects high-level actions from natural language observations, while the critic analyzes full trajectories and proposes new LTL constraints that shield the actor from future unsafe or inefficient behavior. LogicGuard supports both fixed safety rules and adaptive, learned constraints, and is model-agnostic: any LLM-based planner can serve as the actor, with LogicGuard acting as a logic-generating wrapper. We formalize planning as graph traversal under symbolic constraints, allowing LogicGuard to analyze failed or suboptimal trajectories and generate new temporal logic rules that improve future behavior. To demonstrate generality, we evaluate LogicGuard across two distinct settings: short-horizon general tasks and long-horizon specialist tasks. On the Behavior benchmark of 100 household tasks, LogicGuard increases task completion rates by 25% over a baseline InnerMonologue planner. On the Minecraft diamond-mining task, which is long-horizon and requires multiple interdependent subgoals, LogicGuard improves both efficiency and safety compared to SayCan and InnerMonologue. These results show that enabling LLMs to supervise each other through temporal logic yields more reliable, efficient and safe decision-making for both embodied agents.