Towards Robust and Secure Embodied AI: A Survey on Vulnerabilities and Attacks

Authors: Wenpeng Xing, Minghao Li, Mohan Li, Meng Han

Categories: cs.CR, cs.AI, cs.RO

Published: 2025-02-18 (updated: 2025-02-25)

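💡 One-Sentence Summary

Proposes a unified framework for addressing the safety and vulnerability problems of embodied AI

🎯 Matched areas: Pillar 1: Robot Control; Pillar 9: Embodied Foundation Models

Keywords: embodied AI, security, vulnerability, adversarial attacks, robustness, systematic analysis, intelligent systems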

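📋 Key Points

- Existing research mostly addresses vulnerabilities of AI in general and lacks a dedicated framework for embodied AI, leaving its safety and reliability problems insufficiently addressed.

- The paper builds a unified framework by categorizing embodied-AI vulnerabilities, analyzing adversarial attacks, and proposing strategies to enhance safety.

- Through systematic analysis and categorization, the paper offers a new perspective on embodied-AI safety and aims to advance research in the field.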

📝 Abstract (translated summary)

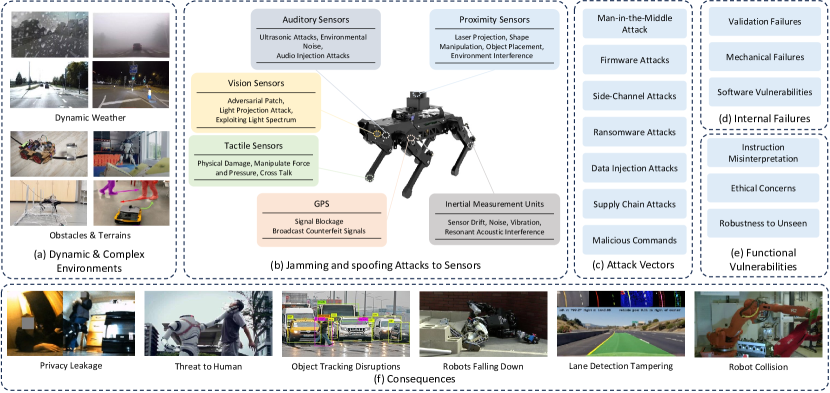

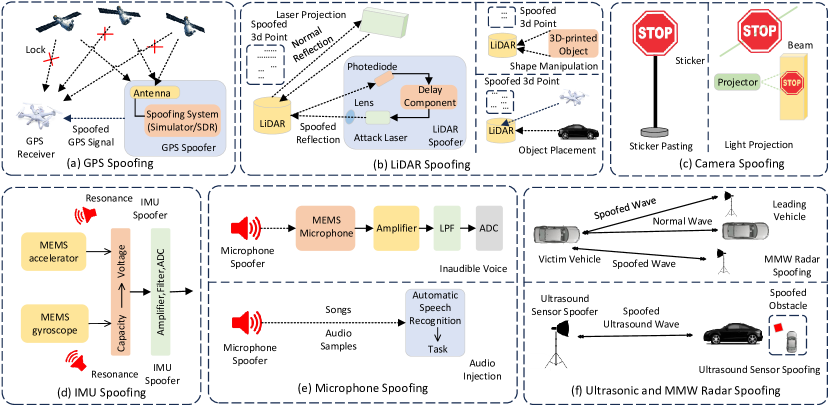

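Embodied AI systems, such as robots and autonomous vehicles, face a range of vulnerabilities in real-world applications. These vulnerabilities stem from both environmental and system-level factors and include sensor spoofing, adversarial attacks, and failures in task and motion planning. Despite a growing body of research, existing reviews rarely focus specifically on the unique safety and security challenges of embodied AI systems. This survey fills that gap by categorizing vulnerabilities specific to embodied AI, systematically analyzing adversarial attack paradigms, investigating attack vectors that target large vision-language models (LVLMs) and large language models (LLMs), evaluating robustness challenges in algorithms, and proposing strategies to enhance the safety and reliability of embodied AI systems. By integrating these dimensions, it provides a comprehensive framework for understanding the interplay between vulnerabilities and safety in embodied AI.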

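🔬 Method Details

Problem definition: The paper addresses the vulnerabilities and safety challenges that embodied AI systems face in real-world applications. Prior work either treats AI vulnerabilities in general or examines isolated aspects, so it lacks a dedicated, systematic treatment of embodied AI.

Core idea: Categorize the vulnerabilities specific to embodied AI, systematically analyze the impact of adversarial attacks, and propose targeted strategies that improve the robustness and safety of embodied AI.

Technical framework: The survey is organized around five main modules: vulnerability categorization, adversarial attack analysis, study of attack vectors against LVLMs and LLMs, robustness evaluation, and proposed safety strategies.

Key contribution: A unified framework for the vulnerabilities and safety challenges of embodied AI, which fills a gap in existing reviews and emphasizes the interaction between environmental and system-level factors.

Key design: Vulnerabilities are divided into two classes by origin: exogenous (e.g., physical attacks, cybersecurity threats) and endogenous (e.g., sensor failures, software flaws). The adversarial attack analysis focuses on the impact of attacks on perception, decision-making, and embodied interaction.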
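The two-way taxonomy described above can be sketched as a small data structure. This is only an illustrative sketch, not code from the paper: the names `Origin`, `Stage`, `Vulnerability`, `CATALOG`, `by_origin`, and `affecting` are hypothetical, and while each example's origin follows the abstract, the stages assigned to each example are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List


class Origin(Enum):
    """Where a vulnerability comes from, per the survey's two classes."""
    EXOGENOUS = "exogenous"    # originates outside the system
    ENDOGENOUS = "endogenous"  # originates inside the system


class Stage(Enum):
    """Parts of an embodied system that the attack analysis focuses on."""
    PERCEPTION = "perception"
    DECISION_MAKING = "decision-making"
    EMBODIED_INTERACTION = "embodied interaction"


@dataclass(frozen=True)
class Vulnerability:
    name: str
    origin: Origin
    affects: FrozenSet[Stage]


# Origins follow the abstract's examples.
# The affected stages are illustrative assumptions, not claims from the paper.
CATALOG: List[Vulnerability] = [
    Vulnerability("physical attack", Origin.EXOGENOUS,
                  frozenset({Stage.PERCEPTION, Stage.EMBODIED_INTERACTION})),
    Vulnerability("cybersecurity threat", Origin.EXOGENOUS,
                  frozenset({Stage.DECISION_MAKING})),
    Vulnerability("sensor failure", Origin.ENDOGENOUS,
                  frozenset({Stage.PERCEPTION})),
    Vulnerability("software flaw", Origin.ENDOGENOUS,
                  frozenset({Stage.DECISION_MAKING})),
]


def by_origin(catalog: List[Vulnerability], origin: Origin) -> List[str]:
    """Return the names of catalog entries with the given origin."""
    return [v.name for v in catalog if v.origin is origin]


def affecting(catalog: List[Vulnerability], stage: Stage) -> List[str]:
    """Return the names of catalog entries that affect the given stage."""
    return [v.name for v in catalog if stage in v.affects]
```

Keeping origin and affected stage as separate fields mirrors the summary's structure, where the exogenous/endogenous split and the perception/decision-making/interaction split are independent axes. For example, `by_origin(CATALOG, Origin.ENDOGENOUS)` lists the internal failure modes, while `affecting(CATALOG, Stage.PERCEPTION)` cuts across both origins.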

🖼️ Key Figures

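📊 Experimental Highlights

The paper is a survey, so its contribution is analytical rather than experimental. Its systematic analysis of embodied-AI vulnerabilities and attack methods yields a framework for understanding safety, which is intended to help identify and respond to adversarial attacks and to encourage further research and application in the field.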

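🎯 Application Scenarios

Potential application areas include embodied AI systems such as robots, autonomous vehicles, and smart homes. Improving the safety and reliability of these systems can reduce the risks of operating in complex environments, increase user trust, and support wider adoption of intelligent technology.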

📄 Abstract (original)

Embodied AI systems, including robots and autonomous vehicles, are increasingly integrated into real-world applications, where they encounter a range of vulnerabilities stemming from both environmental and system-level factors. These vulnerabilities manifest through sensor spoofing, adversarial attacks, and failures in task and motion planning, posing significant challenges to robustness and safety. Despite the growing body of research, existing reviews rarely focus specifically on the unique safety and security challenges of embodied AI systems. Most prior work either addresses general AI vulnerabilities or focuses on isolated aspects, lacking a dedicated and unified framework tailored to embodied AI. This survey fills this critical gap by: (1) categorizing vulnerabilities specific to embodied AI into exogenous (e.g., physical attacks, cybersecurity threats) and endogenous (e.g., sensor failures, software flaws) origins; (2) systematically analyzing adversarial attack paradigms unique to embodied AI, with a focus on their impact on perception, decision-making, and embodied interaction; (3) investigating attack vectors targeting large vision-language models (LVLMs) and large language models (LLMs) within embodied systems, such as jailbreak attacks and instruction misinterpretation; (4) evaluating robustness challenges in algorithms for embodied perception, decision-making, and task planning; and (5) proposing targeted strategies to enhance the safety and reliability of embodied AI systems. By integrating these dimensions, we provide a comprehensive framework for understanding the interplay between vulnerabilities and safety in embodied AI.