OpenAI o1 System Card

作者: OpenAI, :, Aaron Jaech, Adam Kalai, Adam Lerer, Adam Richardson, Ahmed El-Kishky, Aiden Low, Alec Helyar, Aleksander Madry, Alex Beutel, Alex Carney, Alex Iftimie, Alex Karpenko, Alex Tachard Passos, Alexander Neitz, Alexander Prokofiev, Alexander Wei, Allison Tam, Ally Bennett, Ananya Kumar, Andre Saraiva, Andrea Vallone, Andrew Duberstein, Andrew Kondrich, Andrey Mishchenko, Andy Applebaum, Angela Jiang, Ashvin Nair, Barret Zoph, Behrooz Ghorbani, Ben Rossen, Benjamin Sokolowsky, Boaz Barak, Bob McGrew, Borys Minaiev, Botao Hao, Bowen Baker, Brandon Houghton, Brandon McKinzie, Brydon Eastman, Camillo Lugaresi, Cary Bassin, Cary Hudson, Chak Ming Li, Charles de Bourcy, Chelsea Voss, Chen Shen, Chong Zhang, Chris Koch, Chris Orsinger, Christopher Hesse, Claudia Fischer, Clive Chan, Dan Roberts, Daniel Kappler, Daniel Levy, Daniel Selsam, David Dohan, David Farhi, David Mely, David Robinson, Dimitris Tsipras, Doug Li, Dragos Oprica, Eben Freeman, Eddie Zhang, Edmund Wong, Elizabeth Proehl, Enoch Cheung, Eric Mitchell, Eric Wallace, Erik Ritter, Evan Mays, Fan Wang, Felipe Petroski Such, Filippo Raso, Florencia Leoni, Foivos Tsimpourlas, Francis Song, Fred von Lohmann, Freddie Sulit, Geoff Salmon, Giambattista Parascandolo, Gildas Chabot, Grace Zhao, Greg Brockman, Guillaume Leclerc, Hadi Salman, Haiming Bao, Hao Sheng, Hart Andrin, Hessam Bagherinezhad, Hongyu Ren, Hunter Lightman, Hyung Won Chung, Ian Kivlichan, Ian O'Connell, Ian Osband, Ignasi Clavera Gilaberte, Ilge Akkaya, Ilya Kostrikov, Ilya Sutskever, Irina Kofman, Jakub Pachocki, James Lennon, Jason Wei, Jean Harb, Jerry Twore, Jiacheng Feng, Jiahui Yu, Jiayi Weng, Jie Tang, Jieqi Yu, Joaquin Quiñonero Candela, Joe Palermo, Joel Parish, Johannes Heidecke, John Hallman, John Rizzo, Jonathan Gordon, Jonathan Uesato, Jonathan Ward, Joost Huizinga, Julie Wang, Kai Chen, Kai Xiao, Karan Singhal, Karina Nguyen, Karl Cobbe, Katy Shi, Kayla Wood, Kendra Rimbach, Keren Gu-Lemberg, Kevin Liu, Kevin Lu, Kevin Stone, Kevin Yu, Lama Ahmad, Lauren Yang, Leo Liu, Leon Maksin, Leyton Ho, Liam Fedus, Lilian Weng, Linden Li, Lindsay McCallum, Lindsey Held, Lorenz Kuhn, Lukas Kondraciuk, Lukasz Kaiser, Luke Metz, Madelaine Boyd, Maja Trebacz, Manas Joglekar, Mark Chen, Marko Tintor, Mason Meyer, Matt Jones, Matt Kaufer, Max Schwarzer, Meghan Shah, Mehmet Yatbaz, Melody Y. Guan, Mengyuan Xu, Mengyuan Yan, Mia Glaese, Mianna Chen, Michael Lampe, Michael Malek, Michele Wang, Michelle Fradin, Mike McClay, Mikhail Pavlov, Miles Wang, Mingxuan Wang, Mira Murati, Mo Bavarian, Mostafa Rohaninejad, Nat McAleese, Neil Chowdhury, Neil Chowdhury, Nick Ryder, Nikolas Tezak, Noam Brown, Ofir Nachum, Oleg Boiko, Oleg Murk, Olivia Watkins, Patrick Chao, Paul Ashbourne, Pavel Izmailov, Peter Zhokhov, Rachel Dias, Rahul Arora, Randall Lin, Rapha Gontijo Lopes, Raz Gaon, Reah Miyara, Reimar Leike, Renny Hwang, Rhythm Garg, Robin Brown, Roshan James, Rui Shu, Ryan Cheu, Ryan Greene, Saachi Jain, Sam Altman, Sam Toizer, Sam Toyer, Samuel Miserendino, Sandhini Agarwal, Santiago Hernandez, Sasha Baker, Scott McKinney, Scottie Yan, Shengjia Zhao, Shengli Hu, Shibani Santurkar, Shraman Ray Chaudhuri, Shuyuan Zhang, Siyuan Fu, Spencer Papay, Steph Lin, Suchir Balaji, Suvansh Sanjeev, Szymon Sidor, Tal Broda, Aidan Clark, Tao Wang, Taylor Gordon, Ted Sanders, Tejal Patwardhan, Thibault Sottiaux, Thomas Degry, Thomas Dimson, Tianhao Zheng, Timur Garipov, Tom Stasi, Trapit Bansal, Trevor Creech, Troy Peterson, Tyna Eloundou, Valerie Qi, Vineet Kosaraju, Vinnie Monaco, Vitchyr Pong, Vlad Fomenko, Weiyi Zheng, Wenda Zhou, Wes McCabe, Wojciech Zaremba, Yann Dubois, Yinghai Lu, Yining Chen, Young Cha, Yu Bai, Yuchen He, Yuchen Zhang, Yunyun Wang, Zheng Shao, Zhuohan Li

分类: cs.AI

发布日期: 2024-12-21

💡 一句话要点

OpenAI o1系列模型:利用大规模强化学习和思维链推理提升安全性和鲁棒性

🎯 匹配领域: 支柱二:RL算法与架构 (RL & Architecture) 支柱九:具身大模型 (Embodied Foundation Models)

关键词: 强化学习 思维链推理 安全性对齐 大语言模型 红队测试

📋 核心要点

- 现有模型在处理安全性问题时,缺乏有效的推理能力,容易产生不安全或不恰当的回复。

- o1系列模型通过大规模强化学习训练,并结合思维链推理,使模型能够更好地理解和应用安全策略。

- 实验表明,o1模型在防范非法建议、刻板印象和越狱攻击等风险方面表现出色,显著提升了模型的安全性。

📝 摘要(中文)

o1模型系列通过大规模强化学习进行训练,利用思维链进行推理。这种先进的推理能力为提高模型的安全性和鲁棒性提供了新的途径。特别地,我们的模型可以通过审慎的对齐,在响应潜在不安全提示时,推理关于我们的安全策略。这在某些风险基准测试中实现了最先进的性能,例如生成非法建议、选择刻板印象的响应以及屈服于已知的越狱攻击。在回答之前训练模型以结合思维链具有释放巨大潜力的同时,也增加了源于更高智能的潜在风险。我们的结果强调了构建稳健对齐方法、广泛压力测试其有效性以及维护细致风险管理协议的必要性。本报告概述了OpenAI o1和OpenAI o1-mini模型的安全工作,包括安全评估、外部红队测试和准备框架评估。

🔬 方法详解

问题定义:现有的大语言模型在面对安全性挑战时,往往缺乏足够的推理能力来理解和应用复杂的安全策略。这导致模型容易生成有害、不准确或带有偏见的回复,甚至可能被恶意用户利用进行“越狱”攻击。现有方法难以有效应对这些问题,需要更强大的推理和对齐机制。

核心思路:本研究的核心思路是利用大规模强化学习训练模型,并引入“思维链”(Chain of Thought, CoT)推理机制。CoT允许模型在生成最终答案之前,先进行一系列的中间推理步骤,从而更好地理解问题、应用知识和遵循安全策略。通过这种方式,模型能够更审慎地进行决策,减少不安全输出的风险。

技术框架:o1系列模型的训练流程主要包括以下几个阶段:1) 预训练:使用大规模文本数据进行预训练,使模型具备基本的语言理解和生成能力。2) 强化学习:使用强化学习算法(具体算法未知)对模型进行微调,以优化其在特定任务上的表现,例如安全性对齐。3) 思维链推理:在推理过程中,模型首先生成一系列中间推理步骤,然后基于这些步骤生成最终答案。这个过程模仿了人类的思考方式,有助于提高模型的推理能力和决策质量。4) 安全评估与红队测试:对模型进行全面的安全评估和红队测试,以发现潜在的安全漏洞和风险。

关键创新:该研究的关键创新在于将大规模强化学习与思维链推理相结合,从而显著提升了模型的安全性和鲁棒性。通过CoT,模型能够更好地理解和应用安全策略,减少不安全输出的风险。此外,该研究还强调了安全评估和红队测试的重要性,并将其纳入模型的开发流程中。

关键设计:具体的参数设置、损失函数和网络结构等技术细节在论文中没有详细描述,属于OpenAI的商业机密。但可以推测,强化学习的奖励函数设计对于模型的安全性对齐至关重要。此外,CoT的实现方式(例如,如何生成中间推理步骤)也是一个关键的设计选择。这些细节的优化对于模型的最终性能具有重要影响。

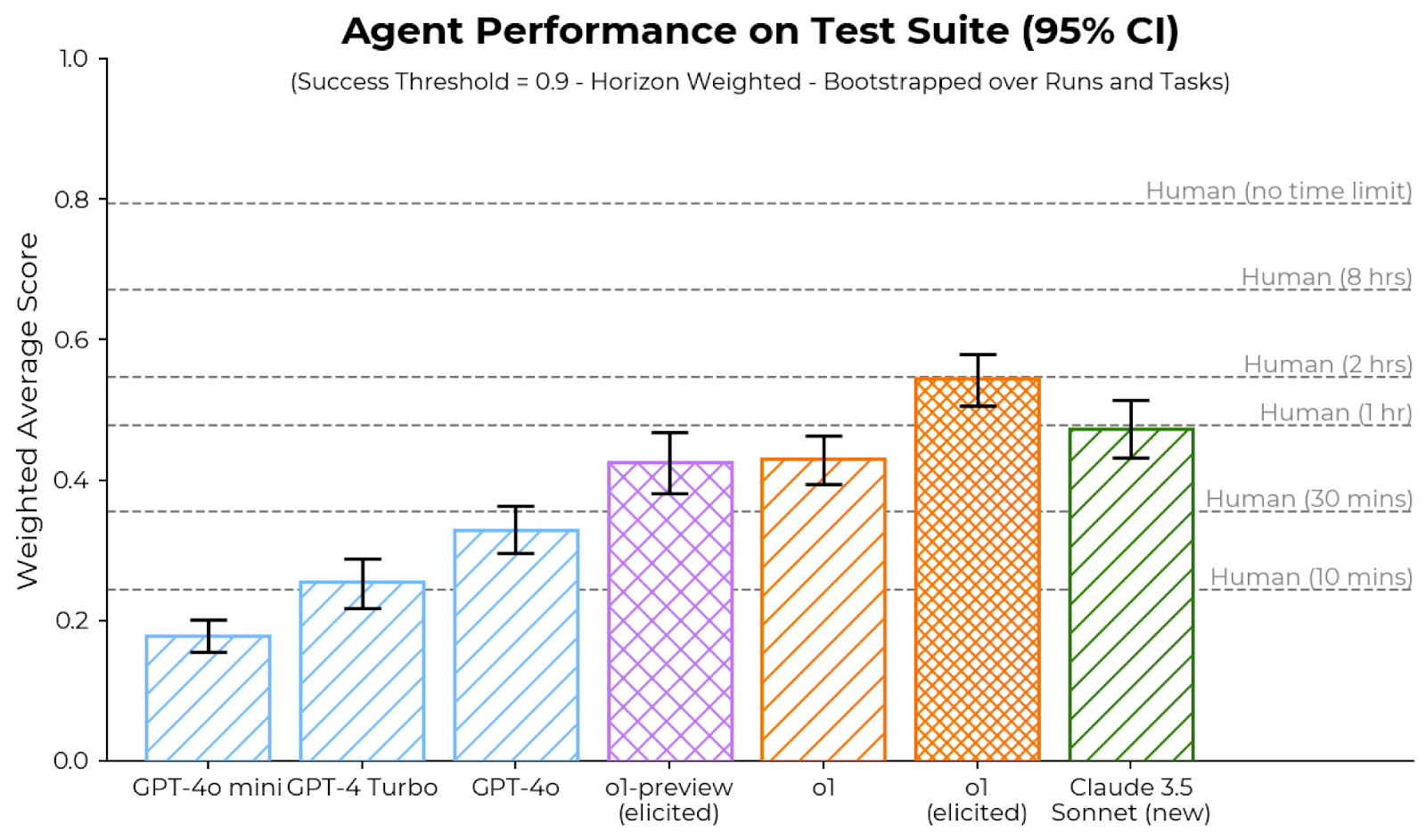

🖼️ 关键图片

📊 实验亮点

o1模型系列在安全性基准测试中取得了最先进的性能,尤其是在防范非法建议、刻板印象和越狱攻击等方面表现出色。这表明,通过大规模强化学习和思维链推理,可以显著提升模型的安全性和鲁棒性。具体的性能数据和提升幅度在论文中未给出,但强调了其state-of-the-art的地位。

🎯 应用场景

该研究成果可广泛应用于各种需要高安全性和可靠性的自然语言处理应用场景,例如智能客服、内容审核、风险评估等。通过提升模型的推理能力和安全性,可以有效减少有害信息传播、保护用户隐私、防范恶意攻击,从而构建更加安全可信的人工智能系统。

📄 摘要(原文)

The o1 model series is trained with large-scale reinforcement learning to reason using chain of thought. These advanced reasoning capabilities provide new avenues for improving the safety and robustness of our models. In particular, our models can reason about our safety policies in context when responding to potentially unsafe prompts, through deliberative alignment. This leads to state-of-the-art performance on certain benchmarks for risks such as generating illicit advice, choosing stereotyped responses, and succumbing to known jailbreaks. Training models to incorporate a chain of thought before answering has the potential to unlock substantial benefits, while also increasing potential risks that stem from heightened intelligence. Our results underscore the need for building robust alignment methods, extensively stress-testing their efficacy, and maintaining meticulous risk management protocols. This report outlines the safety work carried out for the OpenAI o1 and OpenAI o1-mini models, including safety evaluations, external red teaming, and Preparedness Framework evaluations.