SWE-Search: Enhancing Software Agents with Monte Carlo Tree Search and Iterative Refinement

Authors: Antonis Antoniades, Albert Örwall, Kexun Zhang, Yuxi Xie, Anirudh Goyal, William Wang

Categories: cs.AI

Published: 2024-10-26 (updated: 2025-04-02)

Comments: Main body: 10 pages, 5 figures. Appendix: 5 pages, 4 figures. Open-source codebase

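💡 One-Sentence Takeaway

Proposes SWE-Search to address the limited adaptability of software agents in dynamic environments.

🎯 Matched area: Pillar 9: Embodied Foundation Models

Keywords: Monte Carlo Tree Search, self-improvement, software agents, dynamic environments, iterative learning, performance improvement, multi-agent systems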

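📋 Key Points

- Existing LLM-based software agents lack flexibility in dynamic environments and cannot effectively backtrack or explore alternative solutions.

- The proposed SWE-Search framework combines MCTS with a self-improvement mechanism, letting software agents learn iteratively and refine their strategies while carrying out a task.

- On the SWE-bench benchmark, SWE-Search achieves a 23% relative improvement across five models over standard open-source agents without MCTS.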

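📝 Abstract (translated summary)

Software engineers working in complex, dynamic environments must continuously adapt to changing requirements, learn iteratively from experience, and reconsider their approaches in light of new insights. Current large language model (LLM)-based software agents, however, tend to follow linear, sequential processes, which limits their ability to backtrack and explore alternatives when an initial approach fails. To address these challenges, the paper proposes SWE-Search, a multi-agent framework that integrates Monte Carlo Tree Search (MCTS) with a self-improvement mechanism to improve software agents' performance on repository-level tasks. SWE-Search introduces a hybrid value function that uses LLMs for both numerical value estimation and qualitative evaluation, forming a self-feedback loop in which agents iteratively refine their strategies based on both quantitative and qualitative assessments. Applied to the SWE-bench benchmark, the approach achieves a 23% relative improvement in performance across five models compared to standard open-source agents.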

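🔬 Method Details

Problem definition: The paper targets the limited adaptability of existing software agents in complex, dynamic environments, and in particular the way their linear processing pipeline restricts backtracking and exploration.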

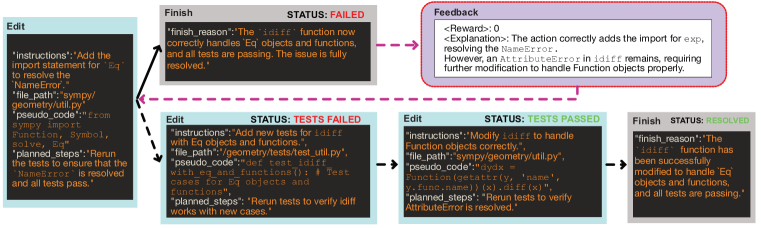

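Core idea: SWE-Search combines MCTS with a self-feedback mechanism so that the agent can learn iteratively while carrying out a task, revisit earlier states, and try alternative strategies when the current one proves ineffective.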

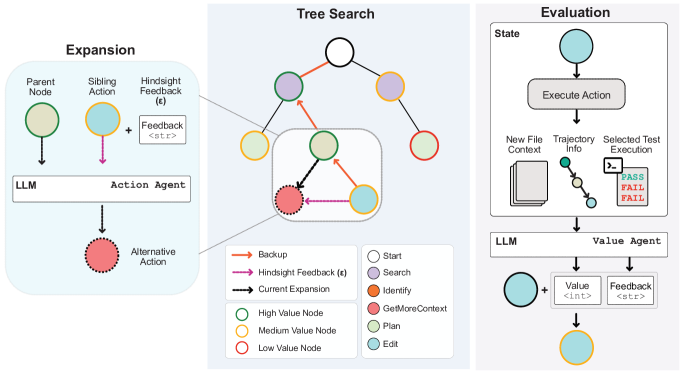
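The core idea above can be illustrated with a generic MCTS loop. The following is a minimal sketch, not the authors' implementation: the `Node` class, the UCT exploration constant `c=1.41`, and the toy `propose_actions` and `evaluate` functions are all assumptions introduced here, standing in for the LLM-driven action proposal and value estimation. It shows how selection by UCT lets the search return to earlier states instead of committing to a single linear trajectory.

```python
import math


class Node:
    """One state in the search tree; `state` is the list of actions taken so far."""

    def __init__(self, state, parent=None):
        self.state = state
        self.parent = parent
        self.children = []
        self.visits = 0
        self.total_value = 0.0

    def uct(self, c=1.41):
        # Unvisited nodes are tried first.
        if self.visits == 0:
            return float("inf")
        exploit = self.total_value / self.visits
        explore = c * math.sqrt(math.log(self.parent.visits) / self.visits)
        return exploit + explore


def select(node):
    # Walk down from the root, always taking the child with the highest UCT score.
    while node.children:
        node = max(node.children, key=lambda child: child.uct())
    return node


def expand(node, propose_actions):
    # Add one child per proposed action; return the first child (or the node if terminal).
    for action in propose_actions(node.state):
        node.children.append(Node(node.state + [action], parent=node))
    return node.children[0] if node.children else node


def backpropagate(node, value):
    # Push the value estimate up to the root so earlier branches can be revisited.
    while node is not None:
        node.visits += 1
        node.total_value += value
        node = node.parent


def search(root_state, propose_actions, evaluate, iterations=40):
    root = Node(root_state)
    for _ in range(iterations):
        leaf = select(root)
        if leaf.visits > 0:
            leaf = expand(leaf, propose_actions)
        backpropagate(leaf, evaluate(leaf.state))
    return root


def best_trajectory(root):
    # Follow the most-visited child at each level.
    node = root
    while node.children:
        node = max(node.children, key=lambda child: child.visits)
    return node.state


# Toy stand-ins for the LLM calls (hypothetical, for illustration only).
def propose_actions(state):
    return [] if len(state) >= 3 else ["bad", "good"]


def evaluate(state):
    return state.count("good") / 3


root = search([], propose_actions, evaluate)
print(best_trajectory(root))
```

In a real agent the state would be a repository snapshot plus the action history, and `evaluate` would be replaced by a learned or LLM-based value estimate; the tree bookkeeping stays the same.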

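Technical framework: The framework has three main modules: a SWE-Agent for adaptive exploration, a Value Agent that provides iterative feedback, and a Discriminator Agent that facilitates multi-agent debate for collaborative decision-making. The overall pipeline refines the agent's strategy through a self-feedback loop.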

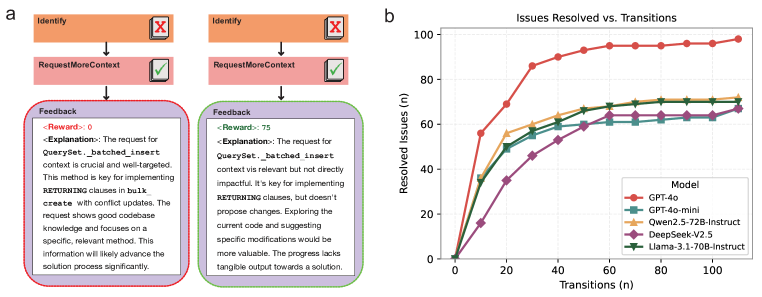
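To make the division of labor among the three modules concrete, here is a simplified sketch under stated assumptions. The function and class names (`run_pipeline`, `Evaluation`, `Candidate`) are hypothetical, the three agents are replaced by plain Python callables, the tree search is flattened into a short loop, and the multi-agent debate is reduced to picking the highest-scoring candidate. It only illustrates how feedback from the Value Agent could flow back into the acting agent and how a final selection step sits on top.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Evaluation:
    score: float    # numerical value estimate
    feedback: str   # qualitative, natural-language assessment


@dataclass
class Candidate:
    patch: str
    evaluation: Evaluation


def run_pipeline(
    task: str,
    act: Callable[[str, str], str],                       # stands in for the SWE-Agent
    evaluate: Callable[[str, str], Evaluation],           # stands in for the Value Agent
    discriminate: Callable[[str, List[Candidate]], Candidate],  # stands in for the Discriminator Agent
    rounds: int = 2,
) -> Candidate:
    candidates: List[Candidate] = []
    feedback = ""
    for _ in range(rounds):
        patch = act(task, feedback)            # propose a solution, using prior feedback
        evaluation = evaluate(task, patch)     # score it and explain the score
        candidates.append(Candidate(patch, evaluation))
        feedback = evaluation.feedback         # self-feedback loop
    return discriminate(task, candidates)      # final choice among candidates


# Deterministic stubs in place of LLM calls (assumptions for illustration).
def stub_act(task: str, feedback: str) -> str:
    return "fix with tests" if "tests" in feedback else "fix"


def stub_evaluate(task: str, patch: str) -> Evaluation:
    if "tests" in patch:
        return Evaluation(0.9, "looks complete")
    return Evaluation(0.4, "missing tests")


def stub_discriminate(task: str, candidates: List[Candidate]) -> Candidate:
    return max(candidates, key=lambda c: c.evaluation.score)


result = run_pipeline("resolve issue", stub_act, stub_evaluate, stub_discriminate)
print(result.patch, result.evaluation.score)
```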

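Key innovation: SWE-Search extends traditional MCTS with a hybrid value function that uses LLMs for both numerical value estimation and qualitative, natural-language evaluation. Together these form a self-feedback mechanism through which agents refine their strategies using both kinds of signal.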
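One way to picture a hybrid value function is as a parser that turns a single LLM reply into a number for the search and a piece of text for the next prompt. The sketch below assumes a hypothetical reply format (`Score: <number>` followed by `Explanation: <text>`) and a hypothetical 0 to 100 scale; neither is taken from the paper.

```python
import re
from dataclasses import dataclass


@dataclass
class HybridValue:
    value: float       # normalized to [0, 1], usable as an MCTS reward
    explanation: str   # qualitative feedback, usable in the next prompt


def parse_value_response(text: str, lo: float = 0.0, hi: float = 100.0) -> HybridValue:
    """Parse a reply in the assumed 'Score: ... / Explanation: ...' format."""
    score_match = re.search(r"Score:\s*(-?\d+(?:\.\d+)?)", text)
    if score_match is None:
        raise ValueError("reply does not contain a 'Score:' line")
    raw = float(score_match.group(1))
    clamped = max(lo, min(hi, raw))           # guard against out-of-range scores
    value = (clamped - lo) / (hi - lo)

    expl_match = re.search(r"Explanation:\s*(.+)", text, flags=re.DOTALL)
    explanation = expl_match.group(1).strip() if expl_match else ""
    return HybridValue(value, explanation)


def build_next_prompt(task: str, feedback: str) -> str:
    # The qualitative half of the value is fed back to the acting agent.
    return f"Task: {task}\nFeedback on previous attempt: {feedback}"


reply = "Score: 70\nExplanation: edits the right file but no test was run"
hybrid = parse_value_response(reply)
print(hybrid.value)
print(build_next_prompt("resolve issue", hybrid.explanation))
```

The numeric half would be backpropagated through the tree, while the explanation would be attached to the node and shown to the agent when it expands that node or a sibling.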

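Key design: The search is controlled by parameters that govern search depth, and the value function combines numerical and qualitative evaluation, so that the agent can adapt effectively on complex tasks without additional training.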

🖼️ Key Figures

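📊 Experimental Highlights

On the SWE-bench benchmark, SWE-Search achieves a 23% relative improvement in performance over standard open-source agents without MCTS, across five models. Performance also scales with increased inference-time compute through deeper search, offering a way to improve software agents without larger models or additional training data.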
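Because the reported gain is relative rather than absolute, it helps to spell out the arithmetic. The snippet below is only an illustration: the baseline and improved resolve rates are made-up numbers chosen to produce a 23% relative gain and are not results from the paper.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Return (improved - baseline) / baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (improved - baseline) / baseline


# Hypothetical resolve rates in percent (NOT figures from the paper).
baseline_rate = 20.0
improved_rate = 24.6

gain = relative_improvement(baseline_rate, improved_rate)
print(f"relative: {gain:.0%}, absolute: {improved_rate - baseline_rate:.1f} points")
```

With these hypothetical numbers, a 23% relative improvement corresponds to an absolute gain of only 4.6 percentage points, which is why the two should not be conflated.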

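🎯 Applications

Potential application areas include software development, automated testing, and intelligent decision-support systems. By improving the adaptability and decision-making of software agents, SWE-Search could help engineers handle complex development environments more efficiently, and it may prove useful across a range of software engineering tasks.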

📄 Abstract (original)

Software engineers operating in complex and dynamic environments must continuously adapt to evolving requirements, learn iteratively from experience, and reconsider their approaches based on new insights. However, current large language model (LLM)-based software agents often follow linear, sequential processes that prevent backtracking and exploration of alternative solutions, limiting their ability to rethink their strategies when initial approaches prove ineffective. To address these challenges, we propose SWE-Search, a multi-agent framework that integrates Monte Carlo Tree Search (MCTS) with a self-improvement mechanism to enhance software agents' performance on repository-level software tasks. SWE-Search extends traditional MCTS by incorporating a hybrid value function that leverages LLMs for both numerical value estimation and qualitative evaluation. This enables self-feedback loops where agents iteratively refine their strategies based on both quantitative numerical evaluations and qualitative natural language assessments of pursued trajectories. The framework includes a SWE-Agent for adaptive exploration, a Value Agent for iterative feedback, and a Discriminator Agent that facilitates multi-agent debate for collaborative decision-making. Applied to the SWE-bench benchmark, our approach demonstrates a 23% relative improvement in performance across five models compared to standard open-source agents without MCTS. Our analysis reveals how performance scales with increased inference-time compute through deeper search, providing a pathway to improve software agents without requiring larger models or additional training data. This highlights the potential of self-evaluation driven search techniques in complex software engineering environments.